Studies and research

Impact of generative AI on university students’ digital competences: experimental evidence based on the DigComp framework

RIED-Revista Iberoamericana de Educación a Distancia, vol. 29, no. 1, pp. 53-77, 2026

Asociación Iberoamericana de Educación Superior a Distancia

This work is licensed under a Creative Commons Attribution-NonCommercial 4.0 International License.

How to cite: García, C. G., & Pallarés, N. (2026). Impact of generative AI on university students’ digital competences: experimental evidence based on the DigComp framework [Impacto de la IA generativa en competencias digitales universitarias: evidencia experimental basada en el marco DigComp]. RIED-Revista Iberoamericana de Educación a Distancia, 29(1), 53-77. https://doi.org/10.5944/ried.45533

Abstract: This study analyzes the impact of the formative use of generative artificial intelligence (AI) on the development of digital competences in university students, using a randomized controlled trial. The experimental group received training in the strategic use of generative AI models to complete academic tasks, while the control group carried out the same activities without specific AI guidance. The impact was assessed with a difference-in-differences model with fixed effects, based on pre- and post-intervention questionnaires. Competences were analyzed according to the European DigComp 2.2 framework, covering four of its competence areas: information and data literacy, communication and collaboration, safety, and problem solving. The results show statistically significant improvements in information and data literacy and in problem solving, the latter in both its functional and metacognitive dimensions. Differential effects were also identified depending on the initial level of digital competence: gains were more pronounced among students with lower prior proficiency, who showed significant progress in all the competences evaluated. These findings suggest a compensatory effect of the didactic use of AI, capable of reducing gaps and promoting more equitable and inclusive learning processes. The study supports the guided integration of emerging technologies in higher education to strengthen digital competences.

Keywords: artificial intelligence, digital competences, higher education, autonomous learning, DigComp.

INTRODUCTION

The emergence of generative artificial intelligence (AI) tools has brought about a significant transformation in teaching and learning processes in higher education (Hwang et al., 2020; Lee et al., 2024). These tools have reshaped the ways in which students access, analyze, and evaluate information, potentially influencing the improvement of their digital competences. In this context, and given the growing adoption of these technologies, it is essential to analyze their effects, particularly on digital competences, a key element for meeting the demands of a constantly evolving labor market (Van Laar et al., 2020).

These competences encompass the set of knowledge and skills required to effectively use digital technologies (Ferrari & Punie, 2013; Laupichler et al., 2022). Currently, they include not only information search and management, but also the ability to critically understand and evaluate digital sources (Polizzi, 2020). Previous studies have highlighted their importance in various domains such as education, employment, and social relations (Bastian et al., 2023; Buchholz et al., 2020; Morgan et al., 2022). Moreover, the European Commission defines them as one of the eight key competences for lifelong learning, emphasizing their role in the safe, critical, and responsible use of digital technologies for learning, work, and active participation in society (European Commission, 2019). Therefore, promoting their development has become a priority in educational and policy agendas.

Recent research has shown that the incorporation of technologies for educational purposes fosters the development of digital competences, as well as self-regulation and a positive perception of learning among students (Blau et al., 2020; Lin et al., 2025). Furthermore, when these technologies are integrated intentionally and with a clear orientation, they not only strengthen such competences but can also contribute to autonomous learning (Ting, 2015). Along the same lines, Prior et al. (2016) show that a higher level of digital competences, together with positive attitudes toward technology, is associated with higher levels of self-efficacy, which positively influences autonomous learning and participation.

The use of generative AI tools in higher education has been widely investigated, with studies highlighting both their benefits and associated risks (Dimitriadou & Lanitis, 2023; Zhai et al., 2021). García Peñalvo et al. (2024) emphasize that, although generative AI tools offer important benefits, such as personalized learning, the automation of repetitive tasks, the generation of educational content, and support for the development of critical thinking, they also present significant limitations. Likewise, challenges have been identified related to equity of access, the protection of personal data, and the need to train both educators and students in the ethical and critical use of these technologies. Popenici and Kerr (2017) point out that the incorporation of artificial intelligence-based technologies is profoundly transforming teaching and learning processes in universities, which poses new challenges for institutions and requires a reconsideration of traditional approaches.

Despite the growing interest, few studies directly analyze how the formative use of generative AI contributes to the development of digital competences. A systematic review by Zhao et al. (2021), covering 33 articles published between 2015 and 2021, found that only about 15% of them examined factors that may influence the acquisition of digital competences. Recent research has instead focused on the inverse relationship: how a higher level of digital competence favors the adoption of these technologies. Specifically, Moravec et al. (2024) show that a higher level of digital competences is associated with more frequent use of ChatGPT for testing, entertainment, and the acquisition of new knowledge. Likewise, greater digital literacy has been associated with more favorable attitudes toward the use of AI-powered technologies in educational contexts (Saklaki & Gardikiotis, 2024), reinforcing the idea of a dynamic interaction between competences and technological disposition. Although some recent works provide valuable insights into the skills required in AI-mediated environments, there is still little research that systematically evaluates the acquisition of specific digital competences among higher education students using standardized frameworks such as DigComp.

This study makes three main contributions. First, it offers, to the best of our knowledge, the first empirical analysis of the impact of the didactic integration of generative AI on the development of specific digital competences within the DigComp framework, based on a randomized experimental design. Second, it quantifies the magnitude of this impact, identifying significant progress in fundamental aspects such as digital self-regulation and interaction with technological tools. Third, by analyzing heterogeneous effects, it reveals a potential leveling effect: the greatest progress is observed among students with lower initial levels, suggesting that, when applied with didactic intentionality, generative AI can contribute to greater equity in the development of digital competences.

The results show that an intentional didactic implementation of generative AI can not only enhance learning but also reduce pre-existing inequalities. Its strategic use contributes to building more equitable and inclusive environments, strengthening key competences to operate critically and autonomously in increasingly complex digital contexts.

The remainder of the article is structured into five sections. First, the relevant literature on digital competences and the use of generative AI in educational contexts is reviewed. Next, the methodology employed is described, comprising the experimental design and the estimation strategy, based on a difference-in-differences model with fixed effects. Then, the data used are described and a descriptive analysis is provided. In the following section, the main results are presented and discussed, considering both average effects and heterogeneous effects. Finally, the article concludes with a reflection on the implications derived from the findings.

LITERATURE REVIEW

The development of digital competences has become a necessity to face the challenges and benefit from the opportunities offered by an increasingly digitalized environment. In the educational field, it is essential for students to acquire these competences, since the ability to effectively use technologies and access information constitutes a key factor for their academic success, as well as for their professional and personal performance (OECD, 2023).

This need becomes particularly relevant in higher education, where students are commonly referred to as “digital natives” (Prensky, 2001), under the premise that, having grown up in digital environments, they possess innate skills in the use of technological tools. However, belonging to this generation does not guarantee competent, critical, or learning-oriented use, as various studies have pointed out (Gallardo-Echenique et al., 2015; Li & Ranieri, 2010; Ng, 2012; Selwyn, 2009). This highlights the importance of educating students in digital competences that enable them to actively adapt to a constantly evolving technological environment and to develop an open attitude towards innovation (Barak, 2018). Therefore, educators must actively promote the strengthening of these competences in order to foster student learning and take advantage of the educational potential offered by digital technologies.

In this context, the concept of digital competence has been widely addressed in the specialized literature, giving rise to a diversity of approaches and definitions. Among the most widely accepted definitions of digital competences today is the one that conceives them as an interrelated set of essential skills for successfully operating in digital environments (List, 2019). This notion is present in the European Digital Competence Framework for Citizens (DigComp), initially developed by Ferrari and Punie (2013). This framework defines digital competences as a set of knowledge, skills, and attitudes that enable citizens to effectively use digital technologies for work, learning, and social participation. The model identifies five key areas: information and data literacy, communication and collaboration, digital content creation, safety, and problem-solving, with the most recent version being DigComp 2.2 (Vuorikari et al., 2022).

Although numerous studies have adopted the DigComp framework as a reference for evaluating digital competences in higher education, the majority have concentrated on assessing students’ levels of proficiency. In contrast, relatively few studies analyze the impact of didactic interventions on the effective development of these competences. Among the most relevant contributions in this line is the study by Gutiérrez Porlán and Serrano Sánchez (2016), which demonstrates how a didactic intervention mediated by digital technologies can significantly enhance competences across the five DigComp areas. These findings reinforce the importance of integrating intentional and sustained strategies to promote critical and functional digital literacy.

Despite the growing interest and the rapid adoption of generative AI tools, the empirical literature examining their impact on the improvement of university students’ digital competences remains limited. Kasneci et al. (2023) highlight the importance of complementing the use of tools such as ChatGPT with didactic strategies that enhance the critical verification of information, encourage the use of reliable complementary sources, and integrate activities aimed at advancing students’ cognitive development. Moreover, the design of effective prompts, the contextualized interpretation of AI responses, and reflection on its use are key elements for achieving meaningful interaction with these technologies (Lee & Palmer, 2025). Promoting these practices in the classroom can contribute to strengthening digital competences. Benvenuti et al. (2023) argue that artificial intelligence represents a valuable resource for educators in promoting skills such as creativity, critical thinking, and problem-solving. For their part, Arseven and Bal (2025) emphasize that this potential lies in its capacity to facilitate metacognitive processes that are fundamental for the development of these competences; however, both point out that educational experiences integrating AI for these purposes remain scarce in the literature. Consequently, it is necessary to analyze the extent to which a didactic and structured integration of these technologies contributes to the strengthening of such competences among students.

A first contribution from this perspective is the work of Dalgıç et al. (2024), which demonstrates that the integration of ChatGPT into education has a positive impact both on learning outcomes and on the development of students’ digital competences. The study points out that digital literacy is an essential mediating variable and that, in order to fully benefit from the advantages of artificial intelligence, students must possess and continuously strengthen their digital competences. It further concludes that the use of ChatGPT directly promotes skills such as information search and analysis, problem-solving, and learning in virtual environments, thus acting not only as a tool that requires digital competences but also as a resource that actively contributes to their development. Therefore, the authors emphasize the importance of educational institutions prioritizing training in digital competences in order to maximize the potential of advanced technologies such as ChatGPT. A second relevant contribution is the study by Naamati-Schneider and Alt (2024), who claim to be the first to empirically evaluate the hypothesis that the use of ChatGPT may render certain digital competences obsolete by taking over tasks previously carried out by students, particularly those related to information access and analysis. Nevertheless, their findings also reveal that the incorporation of this technology into the educational process can enhance the competence of critical evaluation, as it requires students to verify the validity and reliability of AI-generated content.

The analysis of previous studies shows a growing need to promote digital competences in higher education, while also revealing a still limited empirical basis regarding the impact of generative artificial intelligence tools on their development, particularly due to the lack of a unified reference framework that facilitates comparison across studies. Although these technologies show considerable potential to strengthen such competences, their effectiveness largely depends on how they are integrated into the teaching and learning process. In this context, the present research aims to quantify the effect of a structured didactic intervention based on generative AI, with the objective of providing evidence of its contribution to the development of students’ digital competences within the DigComp framework, as well as analyzing its possible heterogeneous effects.

METHODOLOGY

To evaluate the impact of the formative use of generative artificial intelligence (AI) on learning, we implemented a randomized controlled trial (RCT) with students enrolled in the Microeconomics course. The students belonged to the degree programs in Business Administration and in Marketing and Commercial Management at the Catholic University of Murcia. Both programs offered the course in two modalities: face-to-face and online. In addition, in the Business Administration program, the face-to-face modality was available in both Spanish and English. Thus, a total of five groups (classrooms) were formed: three face-to-face and two online.

The assignment of the treatment was carried out at the group (classroom) level, so that each of the five groups was entirely allocated either to the treatment or to the control group. Randomization was stratified by modality (face-to-face or online) to ensure an equitable distribution of the treatment across modalities.1

To maintain a consistent learning environment across all modalities, instructors standardized the teaching materials and methods and established an evaluation protocol, so that all groups received identical course content (materials, resources, and activities), delivered uniformly and evaluated with the same criteria regardless of the program. All course material was available through Canvas, the university’s institutional platform.

The intervention consisted of two components: (1) training focused on the strategic application of generative AI models, offered only to the treatment group, and (2) a series of online self-assessments, administered to both groups, composed of multiple-choice questions covering both the theoretical and practical contents of each course unit. The self-assessments included immediate feedback, which was differentiated according to the assigned group.

The training focused on techniques for designing effective prompts and on the use of AI-powered large language models (LLMs), with the overall objective of improving students’ learning and digital competences. To this end, the following specific objectives were established to promote effective interaction with LLMs:

- Contextualize the question – Encourage students to describe the entire problem before formulating specific questions.

- Define key terms and variables – Teach students to make explicit the economic principles, formulas, or assumptions relevant to their queries.

- Break down complex problems – Advise structuring queries into step-by-step segments to obtain more organized responses.

- Use iterative questioning – Emphasize the importance of reformulating, refining, and expanding AI-generated responses to deepen understanding.

- Verify their own solutions – Encourage students to attempt solving the problems themselves before resorting to AI.

These objectives were addressed in a training workshop delivered exclusively to the treatment group during the first day of class. The session focused mainly on the use of ChatGPT, although other tools, such as Copilot and Gemini, were also briefly introduced. The educators provided an introductory explanation, accompanied by practical examples, after which the students interacted with the tools themselves and were able to raise their questions.

In the following weeks, students were required to complete an online self-assessment at the end of each unit through the Canvas platform of the Microeconomics course. Each test had a one-hour time limit, and on completion students received a numerical grade. A maximum of five attempts per assessment was allowed, with a minimum interval of one day between attempts, and the questions for each attempt were randomly drawn from the item bank.

Feedback in the self-assessments was intentionally designed to differ between the treatment and control groups. In the treatment group, feedback provided step-by-step guidance, with personalized hints and prompts aligned with the specific objectives taught for effective interaction with LLMs. This formative feedback was designed to guide students toward the correct answer without explicitly providing it, fostering deeper understanding and strengthening their ability to solve problems autonomously. Rather than indicating only whether an answer was correct or incorrect, the feedback was adaptive: students could interact with its different components and tailor the learning experience to their needs, exploring alternative explanations and refining their understanding according to their performance and learning gaps. In contrast, students in the control group received only basic feedback indicating whether their answers were correct or incorrect, without explanations, hints, or additional instructional resources. The materials and data to replicate the intervention are available in an open repository.2
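The attempt and feedback rules above can be written down as a small set of checks. The following is a minimal sketch, not the Canvas configuration used in the course: the function names are hypothetical, and the one-day gap is assumed to be measured between attempt start times (the text does not say which timestamps define the interval). The only numbers taken from the text are the five-attempt cap, the one-day gap, and the one-hour limit.

```python
import random
from datetime import datetime, timedelta

# Rules stated in the text; all names below are hypothetical.
MAX_ATTEMPTS = 5
MIN_GAP = timedelta(days=1)       # assumption: measured between attempt start times
TIME_LIMIT = timedelta(hours=1)


def may_start_attempt(previous_starts: list[datetime], now: datetime) -> bool:
    """True if a new attempt is allowed under the five-attempt, one-day-gap rule."""
    if len(previous_starts) >= MAX_ATTEMPTS:
        return False
    if previous_starts and now - max(previous_starts) < MIN_GAP:
        return False
    return True


def within_time_limit(started: datetime, submitted: datetime) -> bool:
    """True if the attempt was submitted within the one-hour limit."""
    return submitted - started <= TIME_LIMIT


def draw_attempt(item_bank: list[str], n_items: int, rng: random.Random) -> list[str]:
    """Draw a fresh set of distinct questions from the item bank for one attempt."""
    return rng.sample(item_bank, n_items)


def feedback(is_correct: bool, treated: bool, hint: str) -> str:
    """Control group: correct/incorrect only. Treatment group: verdict plus a guiding hint."""
    verdict = "Correct" if is_correct else "Incorrect"
    if not treated:
        return verdict
    # The hint should guide toward the answer without revealing it.
    return f"{verdict}. {hint}"
```

For example, `feedback(False, True, "Check the sign of the slope.")` returns the verdict followed by the hint, while the same call with `treated=False` returns only `"Incorrect"`.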

To evaluate the impact of the intervention, two questionnaires were administered: one on the first day of class, before the AI training workshop (pre-intervention), and another after the last self-assessment (post-intervention). Each questionnaire included two parts: (i) demographic, family, and economic literacy data3, and (ii) an assessment of students’ self-reported digital competences, based on the framework developed by Vuorikari et al. (2022). The first version of this framework, DigComp 1.0, was developed by Ferrari and Punie (2013) to identify and define the digital competences relevant for all citizens living and working in Europe. The DigComp model has since become one of the most widely used reference frameworks for designing instruments that measure digital competences in higher education (Mattar et al., 2022).

Table 1 describes the items that students answered for each digital competence assessed. The items were aligned with the European DigComp 2.2 model and covered four key areas: information and data literacy, communication and collaboration, safety, and problem solving. For problem solving, two dimensions were evaluated: the functional dimension of interaction with technological tools (Comp3), and digital self-regulation (Comp4), understood as the ability to reflect metacognitively on one’s own learning in digital environments.

Table 1. Assessed digital competences

| DigComp area | Competence area | Code | Item |
|---|---|---|---|
| Area 1 | Information and Data Literacy | Comp1 | Identify my information needs and find data and content through a simple search in digital environments, such as Google. |
| Area 2 | Communication and Collaboration | Comp2 | Achieve effective communication using digital tools, such as email, discussion forums, etc. |
| Area 5 | Problem Solving | Comp3 | Provide simple instructions to a computer system to help me solve a basic problem or task, such as databases, spreadsheet software, presentation software, AI tools, etc. |
| Area 5 | Problem Solving | Comp4 | Recognize what I need to improve or update in my digital competences. |
| Area 4 | Safety | Comp5 | Evaluate the benefits and risks before allowing third parties to process personal data when I use digital environments or AI tools. |

The items, written in self-report format with closed-ended response options, began with “I am able to...” to capture the perceived skill level. They were worded neutrally, so that the response indicated the degree of proficiency without implying difficulty (Clifford et al., 2020). The items were drafted from the definitions and examples in the DigComp 2.2 framework and adapted to the objectives of the study. Only five items were used to measure digital competences, which kept the questionnaire short, reduced the risk of dropout, and favored response quality, a strategy recommended in this field (Vuorikari et al., 2025).

Students rated their level of agreement with each statement using a 5-point Likert scale, where 1 corresponded to the lowest level (basic) and 5 to the highest (advanced). This format, based on self-reported items, is one of the most widely used methods to assess digital competence (Laanpere, 2019).

The reliability of the instrument was estimated with Cronbach’s alpha and McDonald’s omega, and both coefficients yielded identical values: 0.75 in the pretest and 0.80 in the posttest. These values indicate acceptable internal consistency at the initial measurement and good internal consistency after the intervention (Hair et al., 2010).
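Cronbach’s alpha for $k$ items is $\alpha = \frac{k}{k-1}\left(1 - \frac{\sum_j \sigma^2_j}{\sigma^2_X}\right)$, where $\sigma^2_j$ is the variance of item $j$ and $\sigma^2_X$ is the variance of the total score. The minimal sketch below computes it from a respondents × items matrix of Likert scores using sample variances. It covers alpha only. McDonald’s omega requires fitting a factor model and is not reproduced here. The input layout is an assumption, not the authors’ data format.

```python
from statistics import variance


def cronbach_alpha(responses: list[list[float]]) -> float:
    """Cronbach's alpha for a respondents x items score matrix (sample variances)."""
    k = len(responses[0])
    if k < 2:
        raise ValueError("alpha requires at least two items")
    # Transpose so that each tuple holds all respondents' scores on one item.
    items = list(zip(*responses))
    item_var_sum = sum(variance(col) for col in items)
    # Total score per respondent across all items.
    total_var = variance([sum(row) for row in responses])
    if total_var == 0:
        raise ValueError("total scores have zero variance")
    return k / (k - 1) * (1 - item_var_sum / total_var)
```

With two items scored `[1, 2]`, `[2, 2]`, `[3, 4]`, and `[4, 4]` by four respondents, the function returns 16/17 ≈ 0.94. Perfectly identical items give exactly 1.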

Estimation method

The estimation combines a randomized experiment with a difference-in-differences (DiD) model with fixed effects. Randomization assigns participants to the treatment and control groups exogenously, which favors comparability between groups and minimizes selection bias. Building on this assignment, the DiD strategy compares the evolution of competence levels in both groups before and after the intervention.

Given that the treatment was assigned at the group (classroom) level, standard errors were clustered at that unit. To correct for potential biases arising from the small number of clusters (five), statistical significance was evaluated using the wild cluster bootstrap-t method, as detailed in the robustness section.
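One common variant of the wild cluster bootstrap-t is the restricted version (WCR) with Rademacher weights. The minimal sketch below applies it to a regression of each student’s outcome change on an intercept and the treatment dummy. The robustness section is not part of this excerpt, so the weight distribution, the imposition of the null, and the use of first-differenced outcomes are all assumptions, and the function names are hypothetical. With $G = 5$ clusters, all $2^5 = 32$ sign patterns can be enumerated exactly, so the resulting p-value can only be a multiple of 1/32.

```python
import itertools

import numpy as np


def cluster_robust_t(X: np.ndarray, y: np.ndarray, clusters: np.ndarray, j: int) -> float:
    """OLS t-statistic for coefficient j using a CR0 cluster-robust variance."""
    xtx_inv = np.linalg.inv(X.T @ X)
    b = xtx_inv @ X.T @ y
    u = y - X @ b
    meat = np.zeros((X.shape[1], X.shape[1]))
    for g in np.unique(clusters):
        in_g = clusters == g
        score = X[in_g].T @ u[in_g]  # sum of x_i * u_i within cluster g
        meat += np.outer(score, score)
    v = xtx_inv @ meat @ xtx_inv
    return float(b[j] / np.sqrt(v[j, j]))


def wild_cluster_bootstrap_p(dy: np.ndarray, treat: np.ndarray,
                             clusters: np.ndarray) -> tuple[float, float]:
    """Restricted wild cluster bootstrap-t p-value for H0: delta = 0 in
    dy_i = beta + delta * treat_i + u_i, enumerating all Rademacher sign patterns."""
    X = np.column_stack([np.ones_like(dy, dtype=float), treat.astype(float)])
    t_obs = cluster_robust_t(X, dy, clusters, j=1)
    # Impose the null: the restricted model contains only an intercept.
    fitted_r = np.full_like(dy, dy.mean(), dtype=float)
    resid_r = dy - fitted_r
    labels = np.unique(clusters)
    idx = np.searchsorted(labels, clusters)  # position of each student's cluster
    t_boot = []
    for signs in itertools.product([-1.0, 1.0], repeat=len(labels)):
        w = np.asarray(signs)[idx]  # one weight shared by all students in a cluster
        y_star = fitted_r + w * resid_r
        t_boot.append(cluster_robust_t(X, y_star, clusters, j=1))
    p_value = float(np.mean(np.abs(np.asarray(t_boot)) >= abs(t_obs)))
    return t_obs, p_value
```

The variance omits the usual small-sample correction factor. That factor is a constant that rescales every t-statistic equally, so it leaves the bootstrap p-value unchanged.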

The econometric specification is as follows:

$$Y_{it} = \delta\,(Treat_i \times Post_t) + \beta\,Post_t + \gamma_i + \epsilon_{it}$$

Where:

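- $Y_{it}$: dummy variable that takes the value 1 if student $i$ reports the maximum level (value 5) in the competence analyzed at time $t$.

- $Treat_i \times Post_t$: interaction between $Treat_i$ and $Post_t$. Its coefficient $\delta$ is the treatment effect of interest.

- $Treat_i$: dummy variable that takes the value 1 if the student is in the treatment group. Because it does not vary over time, its main effect is absorbed by the individual fixed effects $\gamma_i$, so it enters the model only through the interaction.

- $Post_t$: dummy variable that takes the value 1 for observations after the intervention (post-intervention questionnaire).

- $\gamma_i$: individual fixed effects.

- $\epsilon_{it}$: error term.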
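With only two survey waves, this fixed-effects specification reduces to a comparison of mean changes. The worked step below assumes a balanced panel in which every student answered both questionnaires (the text does not state how attrition was handled), with $t = 0$ for the pre-intervention questionnaire and $t = 1$ for the post-intervention questionnaire. Differencing the two waves removes $\gamma_i$:

$$\Delta Y_i \equiv Y_{i1} - Y_{i0} = \delta\,Treat_i + \beta + (\epsilon_{i1} - \epsilon_{i0})$$

An OLS regression of $\Delta Y_i$ on a constant and $Treat_i$ therefore gives

$$\hat{\beta} = \overline{\Delta Y}_{\text{control}}, \qquad \hat{\delta} = \overline{\Delta Y}_{\text{treated}} - \overline{\Delta Y}_{\text{control}}.$$

With two periods, this first-difference estimator coincides numerically with the within (fixed-effects) estimator. Inference is a separate issue and still requires clustering at the classroom level.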

Dichotomizing the dependent variable allows a more direct interpretation of the treatment effect, because it focuses on the probability of reaching the value 5, understood as a clear and demanding threshold of full competence. Requiring the maximum response also mitigates social desirability bias by filtering out possible moderate overestimations. Although dichotomization entails a partial loss of variance, the robustness of the results is checked with ordinal models (ordered logit), presented in the corresponding section.
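The outcome definition and the point estimate can be illustrated together. In the minimal sketch below, Likert scores are dichotomized at the maximum level, and $\delta$ and $\beta$ are then recovered as differences in mean changes. This follows from the fact that, with two waves and individual fixed effects, the within estimator equals a regression of each student’s change on a constant and the treatment dummy. The dictionary layout and function names are hypothetical. Only students observed in both waves are kept, which is an assumption about how missing responses are treated. The sketch returns point estimates only, with no clustered standard errors.

```python
from statistics import mean


def top_level(score: int) -> int:
    """Dichotomized outcome: 1 only if the student reports the maximum level (5)."""
    return 1 if score == 5 else 0


def did_fixed_effects(pre: dict[int, int], post: dict[int, int],
                      treated: dict[int, bool]) -> tuple[float, float]:
    """Point estimates (delta, beta) of Y_it = delta*Treat_i*Post_t + beta*Post_t + gamma_i + e_it
    on a two-wave panel, computed via first differences (equal to FE when T = 2)."""
    ids = [i for i in pre if i in post]  # balanced panel: both questionnaires answered
    dy_treated = [top_level(post[i]) - top_level(pre[i]) for i in ids if treated[i]]
    dy_control = [top_level(post[i]) - top_level(pre[i]) for i in ids if not treated[i]]
    beta = mean(dy_control)               # common time change (control group)
    delta = mean(dy_treated) - beta       # difference in mean changes
    return delta, beta
```

Multiplying `delta` by 100 gives the effect in percentage points, the scale used in the results below. Both groups must contain at least one student, otherwise `statistics.mean` raises an error.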

Table 2. Baseline characteristics

| Variable | (1) All | (2) Treated | (3) Control | (4) P-value (2)-(3) |
|---|---|---|---|---|
| A. Student characteristics | | | | |
| Female | 0.355 | 0.356 | 0.354 | 0.968 |
| | (0.037) | (0.047) | (0.060) | |
| Age | 20.911 | 20.808 | 21.077 | 0.823 |
| | (0.328) | (0.452) | (0.453) | |
| Age² | 455.325 | 454.039 | 457.385 | 0.962 |
| | (20.568) | (29.661) | (24.936) | |
| Working | 0.266 | 0.269 | 0.262 | 0.903 |
| | (0.034) | (0.044) | (0.055) | |
| Exchange student | 0.053 | 0.058 | 0.046 | 0.691 |
| | (0.017) | (0.023) | (0.026) | |
| Repeating student | 0.077 | 0.096 | 0.046 | 0.360 |
| | (0.021) | (0.029) | (0.026) | |
| Online student | 0.089 | 0.067 | 0.123 | 0.760 |
| | (0.022) | (0.025) | (0.041) | |
| Western nationality | 0.716 | 0.625 | 0.862 | 0.512 |
| | (0.035) | (0.048) | (0.043) | |
| Pre-course economic literacy | 3.840 | 3.875 | 3.785 | 0.800 |
| | (0.14) | (0.176) | (0.232) | |
| Pre-course digital competence4 | 0.000 | -0.297 | 0.475 | 0.141 |
| | (0.123) | (0.155) | (0.189) | |
| B. Household characteristics | | | | |
| Income <20000€ | 0.095 | 0.096 | 0.092 | 0.853 |
| | (0.023) | (0.029) | (0.036) | |
| Income 20000-40000€ | 0.243 | 0.269 | 0.2 | 0.124 |
| | (0.033) | (0.044) | (0.055) | |
| Income 40000-60000€ | 0.266 | 0.269 | 0.262 | 0.846 |
| | (0.034) | (0.044) | (0.055) | |
| Income >60000€ | 0.32 | 0.288 | 0.369 | 0.548 |
| | (0.036) | (0.045) | (0.06) | |
| Mother - Primary education | 0.065 | 0.087 | 0.031 | 0.301 |
| | (0.019) | (0.028) | (0.022) | |
| Mother - Secondary education | 0.420 | 0.337 | 0.554 | 0.118 |
| | (0.038) | (0.047) | (0.062) | |
| Mother - Tertiary education | 0.515 | 0.577 | 0.415 | 0.157 |
| | (0.039) | (0.049) | (0.062) | |
| Father - Primary education | 0.065 | 0.077 | 0.046 | 0.467 |
| | (0.019) | (0.026) | (0.026) | |
| Father - Secondary education | 0.432 | 0.394 | 0.492 | 0.534 |
| | (0.038) | (0.048) | (0.062) | |
| Father - Tertiary education | 0.503 | 0.529 | 0.462 | 0.652 |
| | (0.039) | (0.049) | (0.062) | |
| Observations | 169 | 104 | 65 | |

Note: Standard errors in parentheses.

DATA AND DESCRIPTIVE STATISTICS

As described in the previous section, the data come from two surveys conducted before and after the intervention.5 Table 2 presents the baseline characteristics of the students randomly assigned to the treatment and control groups. The total sample consists of 169 students: 104 were assigned to the treatment group and 65 to the control group, according to the group (classroom) to which they belonged. No statistically significant differences were detected between the groups in any of the demographic, academic, or socioeconomic variables considered, which suggests that randomization produced adequately balanced groups at baseline.

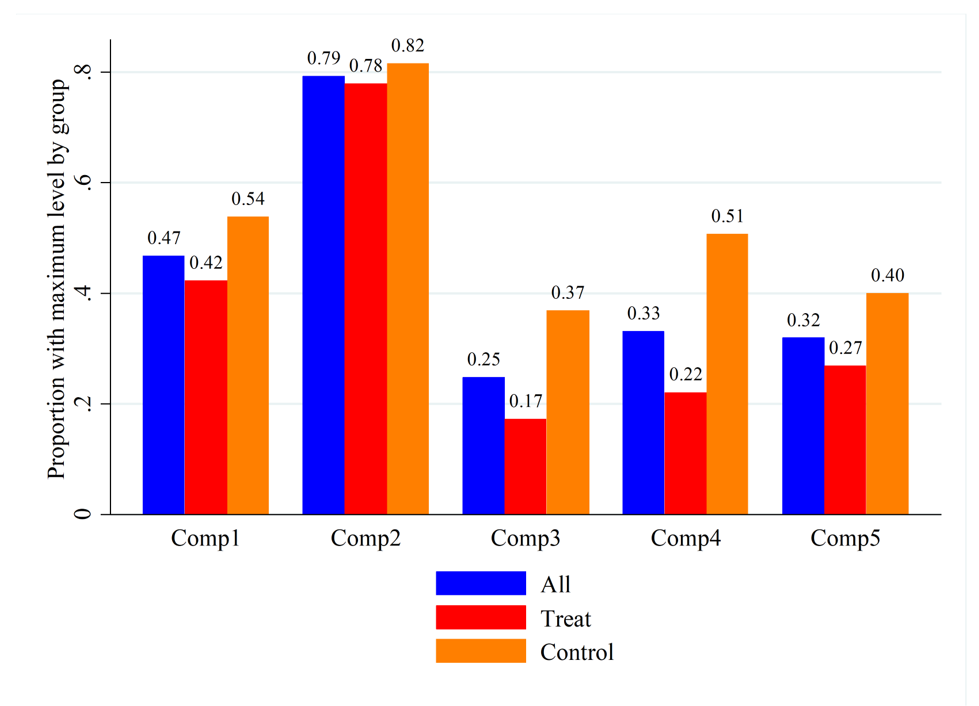

Figure 1. Proportion of students with the maximum level (value 5) in digital competences before the intervention

The competence-level analysis in Figure 1 also shows that initial levels were heterogeneous across areas. The proportion of students who reported the maximum level (value 5) ranged from 0.25 (Comp3) to 0.79 (Comp2) in the total sample, and from 0.17 to 0.78, respectively, within the treatment group. This leaves considerable room for improvement, especially in the functional dimension of interaction with technological tools (Comp3), in digital self-regulation skills (Comp4), and in informed decision-making on digital security (Comp5). As previous studies have pointed out, low levels of general digital competence are often associated with lower confidence and practice in digital security (Tomczyk, 2020), which could help explain the relatively low values in these dimensions.

RESULTS AND DISCUSSION

The estimated models use a dichotomous dependent variable equal to 1 if the student reported the highest level of digital competence (value 5) and 0 otherwise. In this linear specification, the coefficients (multiplied by 100) can be interpreted as changes, in percentage points (p.p.), in the probability of reaching that level.
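With only two survey waves, a linear probability DiD with individual fixed effects reduces to a comparison of mean pre-post changes between groups. The following is a minimal sketch under stated assumptions. It assumes a specification of the form y_it = α_i + γ·Post_t + β·Treat_i·Post_t + ε_it; the exact specification is given in the methodological section, not here. The data are synthetic: hypothetical 1-5 ratings, recoded to 1 only at the top level. The sketch estimates β with the within (demeaning) transformation and checks it against the difference in mean changes. Multiplying β by 100 gives the percentage-point reading used in the text.

```python
import numpy as np

def did_fe_lpm(y_pre, y_post, treat):
    """Treat*Post coefficient of a two-period linear probability DiD with
    individual fixed effects, estimated by the within (demeaning) transformation.
    Multiply the result by 100 to express it in percentage points."""
    y_pre, y_post, treat = (np.asarray(a, dtype=float) for a in (y_pre, y_post, treat))
    n = treat.size
    ids = np.r_[np.arange(n), np.arange(n)]      # student identifier in the stacked panel
    y = np.r_[y_pre, y_post]
    post = np.r_[np.zeros(n), np.ones(n)]
    treat_post = np.r_[np.zeros(n), treat]       # Treat*Post interaction

    def demean(v):
        # Subtract each student's own mean; this absorbs the fixed effects alpha_i.
        means = np.bincount(ids, weights=v) / np.bincount(ids)
        return v - means[ids]

    X = np.column_stack([demean(post), demean(treat_post)])
    coef, *_ = np.linalg.lstsq(X, demean(y), rcond=None)
    return coef[1]

# Synthetic example with the study's group sizes (104 treated, 65 control);
# hypothetical 1-5 self-ratings, outcome = 1 only at level 5.
rng = np.random.default_rng(42)
treat = np.r_[np.ones(104), np.zeros(65)]
pre_rating = rng.integers(1, 6, size=treat.size)
post_rating = np.minimum(pre_rating + rng.integers(0, 2, size=treat.size) * treat.astype(int), 5)
y_pre, y_post = (pre_rating == 5).astype(float), (post_rating == 5).astype(float)

beta = did_fe_lpm(y_pre, y_post, treat)
dy = y_post - y_pre
check = dy[treat == 1].mean() - dy[treat == 0].mean()
print(f"Treat*Post: {100 * beta:.1f} p.p. (difference in mean changes: {100 * check:.1f} p.p.)")
```

Demeaning is used here only to make the fixed-effects logic explicit. With a balanced two-period panel, it gives the same point estimate as regressing the change in the outcome on the treatment indicator.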

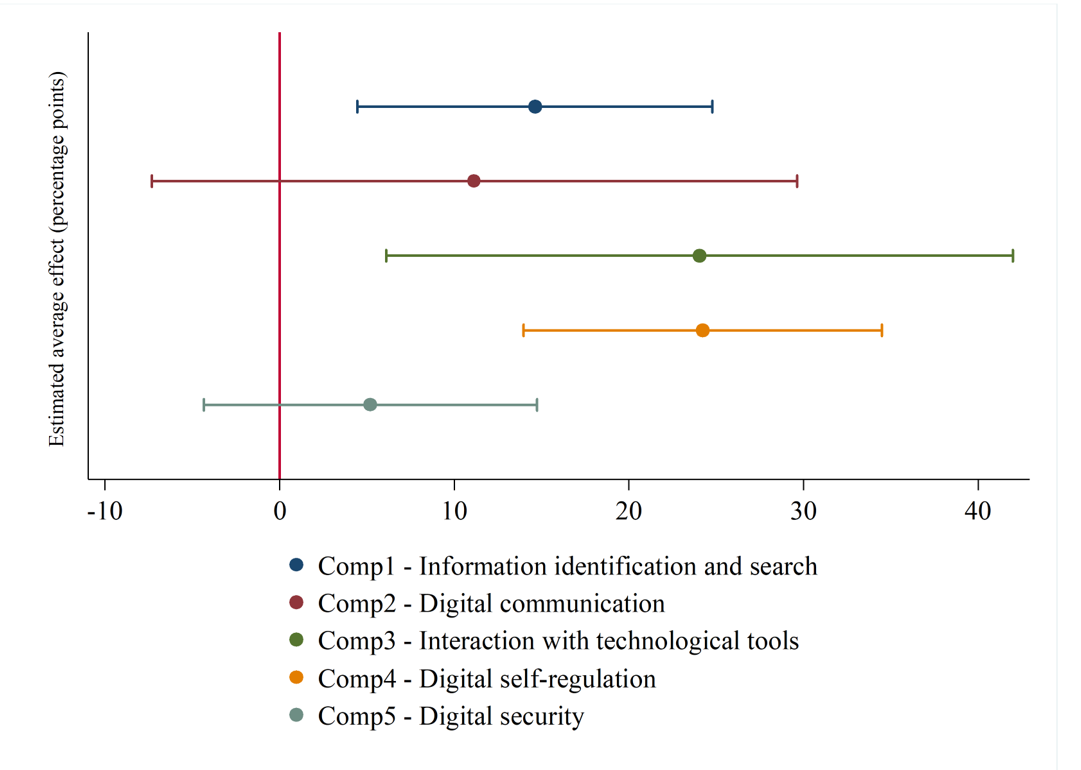

Figure 2. Average treatment effects on the probability of reaching the highest level of digital competence

Note: Estimated coefficients of Treat*Post, in percentage points (×100), with 95% confidence intervals. These correspond to Panel A of Table 3.

Figure 2 shows the estimated average treatment effects on the probability of reaching the highest level of digital competence. The largest gains are concentrated in digital self-regulation (Comp4) and interaction with technological tools (Comp3), followed by information identification and search (Comp1). In contrast, the estimates for digital communication (Comp2) and digital security (Comp5) are positive but smaller and not statistically significant. The figure thus summarizes both the magnitude and the statistical precision of the estimated effect for each competence.

These results correspond to the coefficients in Table 3, which show a positive and significant treatment effect in three of the five dimensions analyzed: Comp1, Comp3, and Comp4. The largest effects are observed in Comp3 and Comp4, with increases of 24 p.p., followed by Comp1, with an increase of 14.6 p.p. In contrast, the effects on digital communication (Comp2) and digital security (Comp5) are positive but not significant on average. Differentiated patterns do emerge, however, when initial competence levels are considered.

To explore distributive effects, models with interactions were estimated using an indicator variable (Q2) that identifies students whose initial digital competence was above the median. The results reveal heterogeneous impacts,⁶ with the largest benefits concentrated in the subgroup with lower initial levels (i.e., below the median), as shown in Panel B of Table 3. Among students with lower initial levels of digital competence, significant improvements were observed in all competences, with increases ranging from 18 to 37 p.p. Among those with higher initial digital competence, the statistically significant effects were positive for digital self-regulation (Comp4), at 14 p.p., but negative for digital security (Comp5), at −12 p.p. This negative result could reflect an adjustment in the self-assessment of students with higher initial levels, who may have developed a more critical view of their own competences after the intervention. The study does not provide direct empirical evidence on this point. The pattern is, however, consistent with the Dunning–Kruger effect, according to which increased knowledge may be accompanied by greater awareness of one’s own limitations (Dunning, 2011; Kruger & Dunning, 1999).
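The interaction design described above can be illustrated with a minimal sketch in first-difference form, which with two periods yields the same point estimates as the individual fixed-effects model. The sketch assumes, hypothetically, that the specification also includes a Post×Q2 term, making the model fully saturated; this section does not say whether it does. Under that assumption, the Treat*Post coefficient is the DiD effect for students at or below the median, and Treat*Post + Treat*Post*Q2 is the effect for students above it. The baseline index and outcome changes are synthetic.

```python
import numpy as np

def heterogeneous_did(dy, treat, q2):
    """OLS of the pre-post change on [1, Q2, Treat, Treat*Q2].

    Returns (b1, b2): b1 is the Treat*Post effect when Q2 = 0 and
    b1 + b2 is the effect when Q2 = 1 (above-median baseline competence).
    """
    dy, treat, q2 = (np.asarray(a, dtype=float) for a in (dy, treat, q2))
    X = np.column_stack([np.ones_like(dy), q2, treat, treat * q2])
    coef, *_ = np.linalg.lstsq(X, dy, rcond=None)
    return coef[2], coef[3]

rng = np.random.default_rng(1)
n = 169
treat = (np.arange(n) < 104).astype(float)          # 104 treated, 65 control
baseline_score = rng.normal(size=n)                  # hypothetical baseline competence index
q2 = (baseline_score > np.median(baseline_score)).astype(float)
dy = rng.integers(-1, 2, size=n).astype(float)       # hypothetical changes in a 0/1 outcome
b1, b2 = heterogeneous_did(dy, treat, q2)

def subgroup_did(mask):
    """Difference in mean changes (treated minus control) within a subgroup."""
    return dy[mask & (treat == 1)].mean() - dy[mask & (treat == 0)].mean()

print(f"below/at median: {b1:.3f} vs {subgroup_did(q2 == 0):.3f}")
print(f"above median:    {b1 + b2:.3f} vs {subgroup_did(q2 == 1):.3f}")
```

Because the four regressors span all treatment-by-Q2 cells, each printed pair matches exactly. Without the Post×Q2 term, the match would no longer be guaranteed.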

All of this points to a compensatory effect of the intervention in the context studied. This is consistent with research showing that technologies implemented with a didactic orientation can reduce gaps and foster more equitable learning. Recent research on generative AI also highlights its potential to strengthen digital competences. Naamati-Schneider and Alt (2024) show that, in structured environments such as problem-based learning, ChatGPT promotes skills such as formulating effective prompts and critically evaluating outputs. They emphasize that these skills require deliberate instructional implementation; otherwise, fundamental digital competences may be neglected. Along the same lines, Güner and Er (2025) observe that, without guidance, students use ChatGPT superficially, whereas training in prompting and strategic use stimulates more reflective and autonomous interactions. Finally, experimental and meta-analytic evidence (Wang et al., 2025; Wang & Fan, 2025) indicates that personalized feedback from ChatGPT improves motivation, reflection, and performance in complex tasks, supporting the development of advanced cognitive and digital skills.

The findings are especially relevant for the improvement of metacognitive competences such as digital self-regulation (Comp4). Lin et al. (2025) point out that the guided use of tools such as ChatGPT facilitates autonomous planning, the organization of ideas, and the formulation of questions by mitigating limitations in working memory and information structuring. However, they caution that such support may generate dependence on automated feedback, limiting critical autonomy. This underscores the need to accompany AI with didactic strategies that promote students’ active appropriation of these tools.

Although studies such as Hillmayr et al. (2020) focus on secondary education, their findings on the positive effects of technologies on self-regulation and problem-solving could be extrapolated to higher education, particularly among students with lower levels of competence.

The pattern observed in our results, with greater benefits among students with lower initial levels, suggests that these technologies, when implemented with didactic intentionality, can play a compensatory role. This leveling effect is particularly evident in key dimensions such as digital self-regulation (Comp4) and interaction with technological tools (Comp3), which are essential in higher education contexts that demand increasing autonomy and environments oriented toward the acquisition of digital competences linked to the critical use of AI. This is consistent with the literature arguing that generative AI, as personalized support, can perform functions equivalent to intelligent tutoring systems without replacing the educator (Hwang et al., 2020).

In the current educational landscape, the need to strengthen competences that enable students to adapt to dynamic and uncertain environments stands out, particularly those related to problem-solving, considered key to lifelong learning (Csapó & Funke, 2017; OECD, 2013; Rahman, 2019).

Table 3. Results of the linear difference-in-differences models with fixed effects (estimated coefficients on the probability of reaching the highest level of digital competence)

| | (1) Comp1 | (2) Comp2 | (3) Comp3 | (4) Comp4 | (5) Comp5 |
|---|---|---|---|---|---|
| A. Average | | | | | |
| Treat*Post | 0.146** | 0.112 | 0.240** | 0.242*** | 0.052 |
| | (0.037) | (0.067) | (0.065) | (0.037) | (0.034) |
| R² | 0.017 | 0.012 | 0.055 | 0.038 | 0.004 |
| B. Pre-course digital competences above the median (Q2) | | | | | |
| Treat*Post | 0.374*** | 0.209** | 0.294*** | 0.318*** | 0.178*** |
| | (0.041) | (0.05) | (0.045) | (0.057) | (0.02) |
| Treat*Post*Q2 | -0.539*** | -0.230* | -0.126 | -0.179 | -0.298*** |
| | (0.105) | (0.108) | (0.082) | (0.095) | (0.009) |
| Treat*Post+Treat*Post*Q2 | -0.165 | -0.021 | 0.168 | 0.139* | -0.12** |
| | (0.092) | (0.133) | (0.097) | (0.065) | (0.027) |
| R² | 0.151 | 0.039 | 0.061 | 0.05 | 0.043 |
| N | 338 | 338 | 338 | 338 | 338 |
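In the linear model, the "Treat*Post+Treat*Post*Q2" row in Panel B of Table 3 is the sum of the two coefficients above it, i.e., the estimated effect for students above the median. The short check below reproduces that row from the Table 3 values. The standard errors of the combined row cannot be recovered this way, because they also depend on the covariance between the two estimates, which the table does not report.

```python
# Treat*Post and Treat*Post*Q2 coefficients from Table 3, Panel B (Comp1..Comp5).
main = [0.374, 0.209, 0.294, 0.318, 0.178]
interaction = [-0.539, -0.230, -0.126, -0.179, -0.298]
reported = [-0.165, -0.021, 0.168, 0.139, -0.120]  # "Treat*Post+Treat*Post*Q2" row

# Effect for above-median students = main effect + interaction (linear model).
combined = [round(m + i, 3) for m, i in zip(main, interaction)]
for k, (c, r) in enumerate(zip(combined, reported), start=1):
    print(f"Comp{k}: {c:+.3f} ({100 * c:+.1f} p.p.), reported {r:+.3f}")
```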

Robustness of the results

To assess the robustness of the linear DiD estimates (Table 3), ordinal logit models were estimated under the same strategy. These models account for the ordinal nature of the dependent variable and allow the calculation of marginal effects on the probability of reaching the highest level of digital competence (value 5). The results (Table 4) are consistent with the linear estimates, which supports their validity.

On average (Panel A), the treatment shows positive and significant effects across all dimensions (13–28 p.p.), especially in Comp4 and Comp3. The pattern is accentuated among students with lower initial levels (Panel B), who exhibit greater improvements (21–36 p.p.). Students with higher initial levels also benefit, albeit to a lesser extent (17–27 p.p.). The negative and significant interaction terms indicate that this difference between subgroups is statistically significant.

The differences between the two models stem from how the dependent variable is specified. The linear model uses a dichotomous version, whereas the ordinal model uses the entire scale and captures non-linear effects. Both models agree on the key patterns, with positive effects especially among students with lower initial levels. The linear model is preferred for its interpretive clarity and because it controls for unobserved heterogeneity through fixed effects.⁷ The coherence across approaches reinforces the robustness of the conclusions.
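Why the two models can disagree in magnitude becomes clearer with the ordered logit formula P(y = 5 | x) = 1 − Λ(κ₄ − x′β). A shift in the latent index changes that probability by an amount that depends on where a student starts, whereas the linear model imposes the same percentage-point change for everyone. The sketch below uses made-up thresholds and a made-up Treat*Post coefficient, not the study's estimates. In practice these parameters would be estimated by maximum likelihood, for instance with `OrderedModel` in statsmodels. Adding individual fixed effects to such nonlinear models raises the incidental-parameters problem discussed by Greene (2004).

```python
import numpy as np

def logistic_cdf(z):
    """Standard logistic CDF, Lambda(z)."""
    return 1.0 / (1.0 + np.exp(-z))

def prob_top(index, cutpoints):
    """Ordered logit: P(y = highest category | index) = 1 - Lambda(kappa_max - index)."""
    return 1.0 - logistic_cdf(cutpoints[-1] - index)

def effect_on_top(index, beta_tp, cutpoints):
    """Discrete change in P(y = highest category) when Treat*Post goes from 0 to 1."""
    return prob_top(index + beta_tp, cutpoints) - prob_top(index, cutpoints)

# Hypothetical parameters for a 1-5 scale (four thresholds); not the study's estimates.
cutpoints = np.array([-2.0, -1.0, 0.0, 1.0])
beta_tp = 0.8

for index in (-1.0, 0.0, 1.0, 2.0, 3.0):
    base = prob_top(index, cutpoints)
    effect = effect_on_top(index, beta_tp, cutpoints)
    print(f"index {index:+.1f}: P(y=5) = {base:.3f}, effect = {100 * effect:.1f} p.p.")
```

The effect is largest for students whose latent index sits near the top threshold. It shrinks for those already very likely, or very unlikely, to report level 5.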

Table 4. Results of the ordinal logit difference-in-differences models (marginal effects on the probability of reaching the highest level of digital competence)

| | (1) Comp1 | (2) Comp2 | (3) Comp3 | (4) Comp4 | (5) Comp5 |
|---|---|---|---|---|---|
| A. Average | | | | | |
| Treat*Post | 0.144*** | 0.126*** | 0.175*** | 0.281*** | 0.160*** |
| | (0.000) | (0.002) | (0.008) | (0.003) | (0.008) |
| R² | 0.02 | 0.02 | 0.021 | 0.028 | 0.02 |
| B. Pre-course digital competences above the median (Q2) | | | | | |
| Treat*Post | 0.211*** | 0.209*** | 0.227*** | 0.364*** | 0.302*** |
| | (0.001) | (0.000) | (0.004) | (0.003) | (0.003) |
| Treat*Post*Q2 | -0.247*** | -0.253*** | -0.151*** | -0.233*** | -0.330*** |
| | (0.001) | (0.002) | (0.002) | (0.095) | (0.005) |
| Treat*Post+Treat*Post*Q2 | 0.188*** | 0.170*** | 0.185*** | 0.273*** | 0.183*** |
| | (0.011) | (0.012) | (0.009) | (0.008) | (0.017) |
| R² | 0.111 | 0.081 | 0.098 | 0.042 | 0.102 |
| N | 338 | 338 | 338 | 338 | 338 |

In addition to the consistency observed with the alternative (ordinal logit) models, the robustness of the results has been verified from the perspective of statistical inference. As detailed in the methodological section, treatment was assigned at the group (classroom) level, with a total of five clusters. The statistical significance of the coefficients was therefore evaluated using the wild cluster bootstrap-t method proposed by Cameron et al. (2008), implemented with Webb (2014) weights and 1,000 replications.⁸ The main results remain stable under this procedure and the overall conclusions are unchanged, which supports the validity of the estimates.
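The inference procedure described above can be sketched as a restricted (null-imposed) wild cluster bootstrap-t for a single coefficient. The sketch draws Webb's six-point weights once per cluster and uses a CR1 cluster-robust t-statistic. Several assumptions apply. For simplicity, it works on the first-differenced outcome regressed on an intercept and the treatment indicator. The classroom sizes, the number of treated classrooms, and the data are all hypothetical. The software and exact variant used by the authors are not described in this section. With only five clusters, Rademacher (±1) weights allow just 2^5 = 32 distinct bootstrap samples, which is the usual motivation for Webb's distribution (6^5 = 7,776 combinations).

```python
import numpy as np

# Webb (2014) six-point distribution: mean 0, variance 1.
WEBB = np.array([-np.sqrt(1.5), -1.0, -np.sqrt(0.5), np.sqrt(0.5), 1.0, np.sqrt(1.5)])

def ols_cluster_t(y, X, clusters, k):
    """OLS coefficient k and its CR1 cluster-robust t-statistic for H0: beta_k = 0."""
    XtX_inv = np.linalg.inv(X.T @ X)
    beta = XtX_inv @ X.T @ y
    resid = y - X @ beta
    n_obs, n_par = X.shape
    groups = np.unique(clusters)
    meat = np.zeros((n_par, n_par))
    for g in groups:
        score = X[clusters == g].T @ resid[clusters == g]  # cluster-level score
        meat += np.outer(score, score)
    G = len(groups)
    scale = G / (G - 1) * (n_obs - 1) / (n_obs - n_par)   # CR1 small-sample factor
    V = scale * XtX_inv @ meat @ XtX_inv
    return beta[k], beta[k] / np.sqrt(V[k, k])

def wild_cluster_bootstrap_p(y, X, clusters, k, reps=1000, seed=0):
    """Symmetric wild cluster bootstrap-t p-value for beta_k = 0, null imposed."""
    rng = np.random.default_rng(seed)
    _, t_obs = ols_cluster_t(y, X, clusters, k)
    # Impose the null: drop column k and refit to get restricted fits and residuals.
    X_r = np.delete(X, k, axis=1)
    b_r = np.linalg.lstsq(X_r, y, rcond=None)[0]
    fitted_r, resid_r = X_r @ b_r, y - X_r @ b_r
    groups, idx = np.unique(clusters, return_inverse=True)
    t_star = np.empty(reps)
    for b in range(reps):
        w = rng.choice(WEBB, size=len(groups))  # one weight per cluster
        y_star = fitted_r + resid_r * w[idx]
        t_star[b] = ols_cluster_t(y_star, X, clusters, k)[1]
    return float(np.mean(np.abs(t_star) >= np.abs(t_obs)))

# Hypothetical setup: five classrooms (sizes sum to 169), three of them treated (104 students).
rng = np.random.default_rng(3)
clusters = np.repeat(np.arange(5), [35, 35, 34, 33, 32])
treat = (clusters < 3).astype(float)
dy = 0.15 * treat + rng.normal(0, 0.5, size=clusters.size)   # synthetic pre-post changes
X = np.column_stack([np.ones_like(dy), treat])
p = wild_cluster_bootstrap_p(dy, X, clusters, k=1, reps=1000)
print(f"wild cluster bootstrap-t p-value: {p:.3f}")
```

Imposing the null when generating bootstrap samples is the variant generally recommended for few clusters. The weight being constant within a cluster preserves any within-classroom correlation of the residuals.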

CONCLUSIONS AND RECOMMENDATIONS

This study provides empirical evidence of the positive impact of a didactic intervention, focused on the use of generative artificial intelligence and specifically aimed at developing digital competences among higher education students. The analysis reveals significant average effects in three of the five dimensions evaluated within the DigComp framework: information identification and search (Comp1), interaction with technological tools (Comp3), and digital self-regulation (Comp4), with increases ranging from 14.6 to 24 p.p.

These advances are relevant not only because of their magnitude but also due to the type of competences involved. In particular, the increase in “information and data literacy” (Comp1) stands out in a context where the abundance of content generated by automated systems requires critical skills to filter, verify, and cross-check information. This finding reinforces the central role of this competence in the digital citizenship and autonomous learning frameworks proposed by Vuorikari et al. (2025).

Regarding Comp3 and Comp4, the results show significant average improvements in both the functional use of technology and the metacognitive dimension of digital learning. These competences are particularly critical in higher education contexts that require greater student autonomy. Likewise, the analysis of heterogeneous effects reveals a consistent pattern: the greatest benefits are concentrated among those starting from lower initial levels, suggesting that the intervention had a leveling effect. In these cases, significant improvements were observed across all competences, reinforcing the compensatory potential of such strategies.

The findings suggest the need to revisit the widespread idea that university students, due to their familiarity with technology, possess well-developed digital skills. As previous research has emphasized, being a ‘digital native’ does not guarantee competent or critical use of technological tools. On the contrary, the results highlight the need for explicit, well-designed, and guided didactic interventions that systematically address the acquisition of these skills (Gallardo-Echenique et al., 2015; Ng, 2012).

Taken together, this study contributes to the emerging field of generative AI in education by providing quantitative evidence of how its didactic integration can strengthen both functional and metacognitive competences. These results support the intentional use of emerging technologies not only as instructional resources but also as tools to reduce inequalities and foster more equitable and inclusive learning environments across similar contexts, aligned with the principles of lifelong learning and the European standards for digital competences.

Finally, the results underscore the importance of educators actively participating in the didactic integration of these technologies. This requires educators with strong digital competences and an open attitude toward change, capable of adapting their practices to evolving educational contexts (Inamorato dos Santos et al., 2023). Moreover, given that the impact varies according to students’ initial levels, the implementation of these technologies must be guided by instructional criteria that ensure effective and ethical use aimed at closing gaps. Therefore, harnessing the possibilities offered by generative AI in education entails not only introducing new tools but also rethinking the conditions that enable these technologies to contribute to a more equitable education, better adapted to contemporary challenges.

Nevertheless, these results must be interpreted in light of certain limitations. First, the sample is composed exclusively of students from a single university, which limits the generalizability of the findings to other educational contexts. Future research could broaden the sample by including different institutions and courses, which would make it possible to evaluate the transferability and scalability of the intervention. Second, although assignment to the treatment was random, it was carried out at the classroom level for didactic reasons and to avoid spillovers. This reduced the number of clusters and may entail some risk of ex-ante self-selection. To address this, the analyses incorporated resampling-based inference, as well as prior balance tests (Table 2), which show a balanced distribution of observable characteristics across conditions. Individual fixed effects were also included in the difference-in-differences models to control for potential biases associated with time-invariant unobserved factors. This approach strengthens causal interpretation, although it does not replace the evidence that additional studies could provide. Third, the dependent variables come from self-reported questionnaires, which may introduce perception or social desirability biases. To mitigate these potential biases, we defined the variable of interest strictly, counting only students who reported the maximum level of competence, so that a moderate overestimation of one’s own competence is not enough to be counted at the top level. Future studies could complement this with more objective measures, such as performance rubrics or the analysis of digital artifacts.

REFERENCES

Arseven, T., & Bal, M. (2025). Critical literacy in artificial intelligence-assisted writing instruction: A systematic review. Thinking Skills and Creativity, 57, 101850. https://doi.org/10.1016/j.tsc.2025.101850

Barak, M. (2018). Are digital natives open to change? Examining flexible thinking and resistance to change. Computers & Education, 121, 115-123. https://doi.org/10.1016/j.compedu.2018.01.016

Bastian, A., Liza, L. O., & Efastri, S. M. (2023). Revolutionizing education: How digital literacy is transforming inclusive classrooms in post-COVID-19. Journal of Public Health, 45(3), e609-e610. https://doi.org/10.1093/pubmed/fdad058

Benvenuti, M., Cangelosi, A., Weinberger, A., Mazzoni, E., Benassi, M., Barbaresi, M., & Orsoni, M. (2023). Artificial intelligence and human behavioral development: A perspective on new skills and competences acquisition for the educational context. Computers in Human Behavior, 148, 107903. https://doi.org/10.1016/j.chb.2023.107903

Blau, I., Shamir-Inbal, T., & Avdiel, O. (2020). How does the pedagogical design of a technology-enhanced collaborative academic course promote digital literacies, self-regulation, and perceived learning of students? The Internet and Higher Education, 45, 100722. https://doi.org/10.1016/j.iheduc.2019.100722

Buchholz, B. A., DeHart, J., & Moorman, G. (2020). Digital citizenship during a global pandemic: Moving beyond digital literacy. Journal of Adolescent & Adult Literacy, 64(1), 11-17. https://doi.org/10.1002/jaal.1076

Cameron, A. C., Gelbach, J. B., & Miller, D. L. (2008). Bootstrap-based improvements for inference with clustered errors. The Review of Economics and Statistics, 90(3), 414-427. https://doi.org/10.1162/rest.90.3.414

Clifford, I., Kluzer, S., Troia, S., Jakobsone, M., Zandbergs, U., Vuorikari, R., Punie, Y., Castaño-Muñoz, J., Mediavilla, C., O’Keeffe, W., & Cabrera, M. (2020). DigCompSAT: A self-reflection tool for the European Digital Competence Framework for citizens (JRC Technical Report). Publications Office of the European Union. https://doi.org/10.2760/77437

Csapó, B., & Funke, J. (Eds.). (2017). The nature of problem solving: Using research to inspire 21st century learning. OECD Publishing. https://doi.org/10.1787/9789264273955-en

Dalgıç, A., Yaşar, E., & Demir, M. (2024). ChatGPT and learning outcomes in tourism education: The role of digital literacy and individualized learning. Journal of Hospitality, Leisure, Sport & Tourism Education, 34, 100481. https://doi.org/10.1016/j.jhlste.2024.100481

Dimitriadou, E., & Lanitis, A. (2023). A critical evaluation, challenges, and future perspectives of using artificial intelligence and emerging technologies in smart classrooms. Smart Learning Environments, 10(1), Article 12. https://doi.org/10.1186/s40561-023-00231-3

Dunning, D. (2011). The Dunning–Kruger effect: On being ignorant of one’s own ignorance. In J. M. Olson & M. P. Zanna (Eds.), Advances in Experimental Social Psychology (Vol. 44, pp. 247-296). Academic Press. https://doi.org/10.1016/B978-0-12-385522-0.00005-6

European Commission, Directorate-General for Education, Youth, Sport and Culture (2019). Key competences for lifelong learning. Publications Office of the European Union. https://doi.org/10.2766/569540

Ferrari, A., & Punie, Y. (2013). DIGCOMP: A framework for developing and understanding digital competence in Europe. Publications Office of the European Union. https://doi.org/10.2788/52966

Gallardo-Echenique, E. E., de Oliveira, J. M., Marqués-Molias, L., Esteve-Mon, F., Wang, Y., & Baker, R. (2015). Digital competence in the knowledge society. MERLOT Journal of Online Learning and Teaching, 11(1). https://jolt.merlot.org/vol11no1/Gallardo-Echenique_0315.pdf

García Peñalvo, F. J., Llorens-Largo, F., & Vidal, J. (2024). La nueva realidad de la educación ante los avances de la inteligencia artificial generativa. RIED-Revista Iberoamericana de Educación a Distancia, 27(1), 9-39. https://doi.org/10.5944/ried.27.1.37716

Greene, W. (2004). The behaviour of the maximum likelihood estimator of limited dependent variable models in the presence of fixed effects. The Econometrics Journal, 7(1), 98-119. https://doi.org/10.1111/j.1368-423X.2004.00123.x

Güner, H., & Er, E. (2025). AI in the classroom: Exploring students’ interaction with ChatGPT in programming learning. Education and Information Technologies, 30, 12681–12707. https://doi.org/10.1007/s10639-025-13337-7

Gutiérrez Porlán, I., & Serrano Sánchez, J. L. (2016). Evaluation and development of digital competence in future primary school teachers at the University of Murcia. Journal of New Approaches in Educational Research, 5(1), 51-56. https://doi.org/10.7821/naer.2016.1.152

Hair, J. F., Jr., Black, W. C., Babin, B. J., & Anderson, R. E. (2010). Multivariate data analysis (7th ed.). Prentice Hall.

Hillmayr, D., Ziernwald, L., Reinhold, F., Hofer, S. I., & Reiss, K. M. (2020). The potential of digital tools to enhance mathematics and science learning in secondary schools: A context-specific meta-analysis. Computers & Education, 153, 103897. https://doi.org/10.1016/j.compedu.2020.103897

Hwang, G. J., Xie, H., Wah, B. W., & Gašević, D. (2020). Vision, challenges, roles and research issues of artificial intelligence in education. Computers and Education: Artificial Intelligence, 1, 100001. https://doi.org/10.1016/j.caeai.2020.100001

Inamorato dos Santos, A., Chinkes, E., Carvalho, M. A. G., Solórzano, C. M. V., & Marroni, L. S. (2023). The digital competence of academics in higher education: Is the glass half empty or half full? International Journal of Educational Technology in Higher Education, 20, Article 9. https://doi.org/10.1186/s41239-022-00376-0

Kasneci, E., Sessler, K., Küchemann, S., Bannert, M., Dementieva, D., Fischer, F., Gasser, U., Groh, G., Günnemann, S., Hüllermeier, E., Krusche, S., Kutyniok, G., Michaeli, T., Nerdel, C., Pfeffer, J., Poquet, O., Sailer, M., Schmidt, A., Seidel, T., … Kasneci, G. (2023). ChatGPT for good? On opportunities and challenges of large language models for education. Learning and Individual Differences, 103, 102274. https://doi.org/10.1016/j.lindif.2023.102274

Kruger, J., & Dunning, D. (1999). Unskilled and unaware of it: How difficulties in recognizing one’s own incompetence lead to inflated self-assessments. Journal of Personality and Social Psychology, 77(6), 1121-1134. https://doi.org/10.1037/0022-3514.77.6.1121

Laanpere, M. (2019). Recommendations on assessment tools for monitoring digital literacy within UNESCO’s Digital Literacy Global Framework (Information Paper No. 56; UIS/2019/LO/IP/56). UNESCO Institute for Statistics. https://unesdoc.unesco.org/ark:/48223/pf0000366740

Laupichler, M. C., Aster, A., Schirch, J., & Raupach, T. (2022). Artificial intelligence literacy in higher and adult education: A scoping literature review. Computers and Education: Artificial Intelligence, 3, 100101. https://doi.org/10.1016/j.caeai.2022.100101

Lee, D., Arnold, M., Srivastava, A., Plastow, K., Strelan, P., Ploeckl, F., Lekkas, D., & Palmer, E. (2024). The impact of generative AI on higher education learning and teaching: A study of educators’ perspectives. Computers and Education: Artificial Intelligence, 6, 100221. https://doi.org/10.1016/j.caeai.2024.100221

Lee, D., & Palmer, E. (2025). Prompt engineering in higher education: A systematic review to help inform curricula. International Journal of Educational Technology in Higher Education, 22, Article 7. https://doi.org/10.1186/s41239-025-00503-7

Li, Y., & Ranieri, M. (2010). Are ‘digital natives’ really digitally competent?—A study on Chinese teenagers. British Journal of Educational Technology, 41(6), 1029-1042. https://doi.org/10.1111/j.1467-8535.2009.01053.x

Lin, C. J., Lee, H. Y., Wang, W. S., Huang, Y. M., & Wu, T. T. (2025). Enhancing reflective thinking in STEM education through experiential learning: The role of generative AI as a learning aid. Education and Information Technologies, 30(5), 6315-6337. https://doi.org/10.1007/s10639-024-13072-5

List, A. (2019). Defining digital literacy development: An examination of pre-service teachers’ beliefs. Computers & Education, 138, 146-158. https://doi.org/10.1016/j.compedu.2019.03.009

Mattar, J., Ramos, D. K., & Lucas, M. R. (2022). DigComp-based digital competence assessment tools: Literature review and instrument analysis. Education and Information Technologies, 27(8), 10843-10867. https://doi.org/10.1007/s10639-022-11034-3

Moravec, V., Hynek, N., Gavurová, B., & Rigelský, M. (2024). Who uses it and for what purpose? The role of digital literacy in ChatGPT adoption and utilisation. Journal of Innovation & Knowledge, 9(4), 100602. https://doi.org/10.1016/j.jik.2024.100602

Morgan, A., Sibson, R., & Jackson, D. (2022). Digital demand and digital deficit: Conceptualising digital literacy and gauging proficiency among higher education students. Journal of Higher Education Policy and Management, 44(3), 258-275. https://doi.org/10.1080/1360080X.2022.2030275

Naamati-Schneider, L., & Alt, D. (2024). Beyond digital literacy: The era of AI-powered assistants and evolving user skills. Education and Information Technologies, 29(16), 21263-21293. https://doi.org/10.1007/s10639-024-12694-z

Ng, W. (2012). Can we teach digital natives digital literacy? Computers & Education, 59(3), 1065-1078. https://doi.org/10.1016/j.compedu.2012.04.016

OECD. (2013). PISA 2012 assessment and analytical framework: Mathematics, reading, science, problem solving and financial literacy. OECD Publishing. https://doi.org/10.1787/9789264190511-en

OECD. (2023). OECD Digital Education Outlook 2023: Towards an effective digital education ecosystem. OECD Publishing. https://doi.org/10.1787/c74f03de-en

Polizzi, G. (2020). Digital literacy and the national curriculum for England: Learning from how the experts engage with and evaluate online content. Computers & Education, 152, 103859. https://doi.org/10.1016/j.compedu.2020.103859

Popenici, S. A. D., & Kerr, S. (2017). Exploring the impact of artificial intelligence on teaching and learning in higher education. Research and Practice in Technology Enhanced Learning, 12(1), 22. https://doi.org/10.1186/s41039-017-0062-8

Prensky, M. (2001). Digital natives, digital immigrants. On the Horizon, 9(5), 1-6. https://doi.org/10.1108/10748120110424816

Prior, D. D., Mazanov, J., Meacheam, D., Heaslip, G., & Hanson, J. (2016). Attitude, digital literacy and self-efficacy: Flow-on effects for online learning behavior. The Internet and Higher Education, 29, 91-97. https://doi.org/10.1016/j.iheduc.2016.01.001

Rahman, M. M. (2019). 21st century skill “problem solving”: Defining the concept. Asian Journal of Interdisciplinary Research, 2(1), 64-74. https://doi.org/10.34256/ajir1917

Saklaki, A., & Gardikiotis, A. (2024). Exploring Greek students’ attitudes toward artificial intelligence: Relationships with AI ethics, media, and digital literacy. Societies, 14(12), 248. https://doi.org/10.3390/soc14120248

Selwyn, N. (2009). The digital native–myth and reality. Aslib Proceedings: New Information Perspectives, 61(4), 364-379. https://doi.org/10.1108/00012530910973776

Ting, Y.-L. (2015). Tapping into students’ digital literacy and designing negotiated learning to promote learner autonomy. The Internet and Higher Education, 26, 25-32. https://doi.org/10.1016/j.iheduc.2015.04.004

Tomczyk, Ł. (2020). Skills in the area of digital safety as a key component of digital literacy among teachers. Education and Information Technologies, 25(1), 471-486. https://doi.org/10.1007/s10639-019-09980-6

Van Laar, E., van Deursen, A. J. A. M., van Dijk, J. A. G. M., & de Haan, J. (2020). Determinants of 21st-century skills and 21st-century digital skills for workers: A systematic literature review. Sage Open, 10(1), 1-14. https://doi.org/10.1177/2158244019900176

Vuorikari, R., Kluzer, S., & Punie, Y. (2022). DigComp 2.2: The digital competence framework for citizens – with new examples of knowledge, skills and attitudes [EUR 31006 EN; JRC128415]. Publications Office of the European Union. https://doi.org/10.2760/115376

Vuorikari, R., Pokropek, A., & Castaño Muñoz, J. (2025). Enhancing digital skills assessment: Introducing compact tools for measuring digital competence. Technology, Knowledge and Learning. Advance online publication. https://doi.org/10.1007/s10758-025-09825-x

Walstad, W. B., Rebeck, K., & Butters, R. B. (2013). Test of Economic Literacy: Examiner’s manual (4th ed.). Council for Economic Education. https://www.econedlink.org/wp-content/uploads/2018/09/TEL-Manual.pdf

Walstad, W. B., Watts, M., & Rebeck, K. (2007). Test of Understanding of College Economics: Examiner’s manual (4th ed.). Council for Economic Education. https://www.econedlink.org/wp-content/uploads/2018/09/TUCE-4th.pdf

Wang, J., & Fan, W. (2025). The effect of ChatGPT on students’ learning performance, learning perception, and higher-order thinking: Insights from a meta-analysis. Humanities and Social Sciences Communications, 12, 621. https://doi.org/10.1057/s41599-025-04787-y

Wang, W.-S., Lin, C.-J., Lee, H.-Y., Huang, Y.-M., & Wu, T.-T. (2025). Integrating feedback mechanisms and ChatGPT for VR-based experiential learning: Impacts on reflective thinking and AIoT physical hands-on tasks. Interactive Learning Environments, 33(2), 1770-1787. https://doi.org/10.1080/10494820.2024.2375644

Webb, M. D. (2014). Reworking wild bootstrap based inference for clustered errors (QED Working Paper No. 1315). Queen’s University, Department of Economics. https://qed.econ.queensu.ca/working_papers/papers/qed_wp_1315.pdf

Zhai, X., Chu, X., Chai, C. S., Jong, M. S. Y., Istenič, A., Spector, J. M., Liu, J., Yuan, J., & Li, Y. (2021). A review of artificial intelligence (AI) in education from 2010 to 2020. Complexity, 2021, 8812542. https://doi.org/10.1155/2021/8812542

Zhao, Y., Pinto Llorente, A. M., & Sánchez Gómez, M. C. (2021). Digital competence in higher education research: A systematic literature review. Computers & Education, 168, 104212. https://doi.org/10.1016/j.compedu.2021.104212

Notes

Reception: 01 July 2025

Accepted: 09 September 2025

OnlineFirst: 24 October 2025

Publication: 01 January 2026