Studies and Research

Self-perception and usefulness of generative artificial intelligence among pre-service teachers

Autopercepción y utilidad de la inteligencia artificial generativa en docentes en formación

RIED-Revista Iberoamericana de Educación a Distancia, vol. 29, no. 1, pp. 111-132, 2026

Asociación Iberoamericana de Educación Superior a Distancia

This work is licensed under a Creative Commons Attribution-NonCommercial 4.0 International License.

How to cite: Pinto-Llorente, A. M., Izquierdo-Álvarez, V., & Dolcet-Negre, M. M. (2026). Self-perception and usefulness of generative artificial intelligence among pre-service teachers [Autopercepción y utilidad de la inteligencia artificial generativa en docentes en formación]. RIED-Revista Iberoamericana de Educación a Distancia, 29(1), 111-132. https://doi.org/10.5944/ried.45480

Abstract: The advent of Generative Artificial Intelligence (GenAI) in education presents opportunities, but it also raises ethical and pedagogical challenges. In this context, it is essential to understand how pre-service teachers perceive this technology. The present study analysed the self-perception of 174 pre-service teachers regarding the application of GenAI in education. Seven dimensions (Familiarity, Relevance, Practical Skills, Barriers, Confidence, Ethical and Social Impact, and Expectations) were measured in relation to GenAI. In addition, the usefulness of ChatGPT as a tool for designing Learning Situations (LSs) was assessed after a training experience with this system. Descriptive statistics and Spearman correlations were calculated, a network of correlations between the seven dimensions was visualised, and differences between degree programmes were explored. The findings indicated medium-to-high levels of self-perception, together with a very positive evaluation of ChatGPT's usefulness and a high level of satisfaction with its use. Confidence emerged as a central node in the correlation network, closely associated with Relevance, Barriers, Ethical and Social Impact, and Expectations, which underscores its pivotal role in the adoption of these technologies. Most participants also adopted a critical stance towards GenAI, checking the responses generated by ChatGPT rather than passively accepting them. In conclusion, while there is a favourable attitude towards integrating GenAI into education, future teachers demand specific training to use it pedagogically and express concern about the ethical implications of such integration.

Keywords: generative artificial intelligence, ChatGPT, teacher training, self-perception, usefulness, education.

Resumen: La irrupción de la Inteligencia Artificial Generativa (IA-Gen) en el ámbito educativo ofrece oportunidades, pero también plantea desafíos éticos y pedagógicos. En este contexto, resulta fundamental comprender la percepción de los docentes en formación hacia esta tecnología. Este estudio analizó la autopercepción de 174 docentes en formación sobre la IA-Gen aplicada a la educación. Se midieron siete dimensiones (Familiaridad, Relevancia, Habilidades prácticas, Barreras, Confianza, Impacto ético-social y Expectativas) en referencia a la IA-Gen y se valoró la utilidad de ChatGPT como herramienta para diseñar Situaciones de Aprendizaje (SdAs) tras una experiencia formativa con este sistema. Se calcularon estadísticos descriptivos, correlaciones de Spearman, se visualizó una red de correlaciones entre las siete dimensiones y se exploraron diferencias entre las titulaciones. Los resultados revelan niveles medios-altos de autopercepción con valoración muy positiva de la utilidad de ChatGPT y un alto nivel de satisfacción con su uso. La Confianza emergió como un nodo central en la red de correlaciones, vinculándose estrechamente con la Relevancia, Barreras, Impacto ético-social y Expectativas, lo que resalta su papel clave en la adopción de estas tecnologías. Asimismo, la mayoría de los participantes adoptó una actitud crítica ante la IA-Gen, contrastando las respuestas generadas por ChatGPT en lugar de aceptarlas pasivamente. En conclusión, aunque se observa una disposición favorable hacia la integración de la IA-Gen en educación, los futuros docentes demandan formación específica para su uso pedagógico y expresan preocupación por las implicaciones éticas de dicha integración.

Palabras clave: inteligencia artificial generativa, ChatGPT, formación docente, autopercepción, utilidad, educación.

INTRODUCTION

Since the launch of ChatGPT, Generative Artificial Intelligence (GenAI) has undergone rapid development, leading to a notable increase in scientific output across various disciplines, including health (Currie, 2025; Tai-Han et al., 2024; Denny et al., 2024; Gozalo-Brizuela & Garrido-Merchán, 2024; Storey, 2025), computer science (Gozalo-Brizuela & Garrido-Merchán, 2024; Storey, 2025), and education (Carranza Alcántar, 2024; Haroud & Saqri, 2025; Sánchez-Prieto et al., 2025). This output demonstrates its cross-cutting impact in multiple fields of knowledge.

In education, GenAI has emerged as a disruptive technology capable of redefining teaching and learning processes. Its gradual incorporation into various contexts has fostered applications such as personalised learning experiences, which allow content, activities, and explanations to be adapted to students' level and cognitive development, promoting more active, autonomous, and contextualised learning (Carranza Alcántar et al., 2024). It is also employed in the design of teaching materials, lesson planning, and task diversification, optimising time, augmenting the resources available (Bayly-Castaneda et al., 2024), and contributing to educational innovation and Universal Design for Learning (Alba Pastor, 2022). Its use in academic writing is likewise noteworthy (Moorhouse & Kohnke, 2024), as is the provision of automated feedback on student performance, which promotes more autonomous and reflective learning (Haroud & Saqri, 2025). Finally, it can support the development of digital and metacognitive competences, which are essential to prepare students for a work environment in which GenAI already has a significant presence (Islam & Greenwood, 2024).

The emergence of GenAI in education has opened up important debates on academic integrity and the possible delegation of cognitive functions. In response, several studies propose ethical and pedagogical frameworks that promote formative and responsible use (Haroud & Saqri, 2025). Given this challenge, the role of teachers and their AI competences are crucial to maximise its educational potential and mitigate its risks (Alasadi & Baiz, 2023; Nyaaba & Zhai, 2024; Zhang & Villanueva, 2023). In this regard, the AI competency framework for teachers (AI CFT) (Miao & Cukurova, 2024) is particularly relevant, as it aims to safeguard teachers’ rights and foster their continuing professional development in the era of AI. This model organises AI competences into five key dimensions: human-centred mindset, ethics of AI, AI foundations and applications, AI pedagogy, and AI for professional development. Its goal is to ensure that teachers are prepared to use AI safely, effectively, and ethically, while minimising potential risks to students and society.

The current challenge lies in confronting a technology capable of mimicking human behaviour even to the point of questioning human agency (Mouta et al., 2025). Through the analysis of large amounts of data, AI can replicate, or even replace, human decision-making, including teachers’ professional autonomy. This reality demands solid preparation and continuous support for teachers to ensure proper educational use. Consequently, it is essential to incorporate AI training into pre-service teacher education curricula (Ishmuradova et al., 2025), providing them with theoretical foundations and practical competences applicable in the classroom, and preparing them to face current and future educational challenges (Laupichler et al., 2022). Educational policies, therefore, play a key role in this transformation (Al-Abdullatif & Alsubaie, 2024).

Recent research highlights the growing interest in understanding how future teachers perceive and integrate GenAI into their training. Studies concur in emphasising its potential as a support tool for the design of teaching proposals and materials (Ishmuradova et al., 2025; Lozano & Blanco, 2023; Markos et al., 2024), and underline benefits associated with the development of professional competences, conceptual understanding, pedagogical adaptation, and critical awareness of its educational use (Moorhouse et al., 2024). However, common concerns also emerge regarding the reliability of generated information, potential technological dependence, the impact on basic cognitive skills, and ethical risks related to plagiarism or academic integrity (Nyaaba & Zhai, 2024), underscoring the need for critical, ethical, and pedagogically grounded training. Despite these advances, previous studies still show relevant limitations: descriptive or exploratory approaches that analyse individual perceptions predominate, with little attention to the relational structure among the different dimensions involved in the pedagogical integration of GenAI. The present study seeks to overcome this fragmented logic by adopting a structural approach based on network analysis, which models the interrelationships among key variables such as familiarity, relevance, barriers, confidence, and ethical impact and thereby provides a more comprehensive and structured understanding of the phenomenon.

Research emphasises the need to conduct studies in different educational institutions and cultural contexts that explore future teachers’ perceptions of their knowledge of GenAI in order to gain a better understanding of the role of this technology (Markos et al., 2024). The present study focuses on analysing pre-service teachers' self-perceptions in relation to their Familiarity with GenAI, its educational Relevance, the associated Practical Skills, the perceived Barriers, their level of Confidence, the Ethical and Social Impact, and their Usage Expectations. Self-perception is understood as the assessment and understanding that one has of oneself in a specific domain (Aravena Castillo, 2013). Here it refers to the evaluation that pre-service teachers make of their familiarity, competences, beliefs, and expectations regarding the use of GenAI in education. Analysing these dimensions as a network makes it possible to represent the interrelationships among them, offering an integrated and novel perspective that identifies which factors occupy central positions and how they cluster, adding value to the state of the art. Within this framework, the study presents a pilot experience based on the application of ChatGPT in the design of Learning Situations (LSs) to assess its usefulness.

The article is organised into five sections. The first outlines the theoretical foundation of the study. The Method section then describes the approach, participants, instrument, and the procedures for data collection and analysis. The results are subsequently presented, followed by a discussion of the findings. Finally, the article concludes with recommendations for teachers and institutions, theoretical and practical implications, and possible future lines for research and knowledge transfer.

METHOD

This study adopts a quantitative approach, appropriate for the collection and analysis of measurable data (Hernández-Sampieri & Mendoza-Torres, 2023). It is framed within an evaluative research design, which is relevant when the aim is to assess processes or interventions in educational contexts (Arias, 2012), in this case, pre-service teachers’ self-perception of GenAI and their satisfaction with the experience of using ChatGPT in the design of LSs.

The following objectives (O) are set out:

- O1. To analyse pre-service teachers' self-perception of GenAI in relation to Familiarity, Relevance, Practical Skills, Barriers, Confidence, Ethical and Social Impact, and Expectations.

- O2. To determine how pre-service teachers rate the usefulness of ChatGPT as a support tool for LS design.

The first responds to the need, identified in previous studies, to gain a comprehensive understanding of how future teachers conceive GenAI across various dimensions, beyond isolated cases (Markos et al., 2024). The second arises from the growing incorporation of GenAI tools in education and the lack of evidence regarding how pre-service teachers perceive their pedagogical usefulness (Nyaaba & Zhai, 2024).

Based on these objectives, the following research questions (RQ) were formulated:

- RQ1. What is the self-perception of pre-service teachers regarding GenAI in relation to their Familiarity with it, its perceived Relevance, their Practical Skills, the Barriers they face, their Confidence, its Ethical and Social Impact, and their Expectations?

- RQ2. How do pre-service teachers rate the usefulness of ChatGPT as a support tool for LS design?

The training experience aimed to explore the pedagogical potential of ChatGPT in designing LSs for the Early Childhood and Primary Education stages. Participants mainly used ChatGPT-3.5, with limited access to ChatGPT-4 depending on service availability. The experience was organised into three two-hour practical sessions held over three weeks. The first session was introductory and aimed to familiarise students with the educational use of GenAI and the formulation of effective prompts. Students were given prompts arranged in logical sequences that facilitated the progressive construction of proposals, together with review and analysis prompts for examining, adjusting, and improving the generated output, fostering pedagogical coherence, evidence-based decision-making, and continuous improvement of the design. Teaching support was provided throughout to address technical issues and promote critical thinking and informed decision-making. Quality criteria were established to evaluate the generated responses, focusing on discursive coherence, accuracy of information, and curricular alignment. Through an active learning approach, students explored the educational use of GenAI and strengthened their digital competences in an innovative environment.

Participants

A non-probability convenience sampling method was used to select the sample, justified by direct access to participants and the relevance of the content. This type of sampling may involve certain biases, particularly those related to motivation or technological familiarity. The potential sample (n = 185) was determined by the number of enrolled students; students who did not complete the experience were excluded. A total of 174 students from the University of Salamanca, enrolled in education-related degree programmes, participated in the study. Specifically, 37 were enrolled in the bachelor's degree in Early Childhood Education (21.26%), 58 in the bachelor's degree in Primary Education (33.33%), 19 in a double degree programme in Primary Education and Early Childhood Education (10.92%), 27 in a master's degree in Innovation in Specific Teaching Methods for Early Childhood and Primary Education (15.52%), and 33 in a master's degree in ICT in Education: Analysis and Design of Processes, Resources and Training Practices (18.97%).

Student participation took place across different courses related to technology and educational innovation. Bachelor’s degree students participated in the course ICT in Education; students in the master's degree in Innovation in the course Advances in Digital Technologies for Educational Innovation; and students of the master's degree in ICT in Education in the course Research Lines in Educational Technology.

In terms of participant characteristics, the group comprised 38 men (21.84%) and 136 women (78.16%), aged between 17 and 47, with an average age of 21.02 years and a standard deviation of 4.13 years.

Instrument

Data were collected with a questionnaire validated by Espinoza-San Juan et al. (2024) (Table 1). It was built in Google Forms and distributed to participants electronically via the virtual campus. It was divided into three sections. The first section collected sociodemographic data, including educational qualifications, age, and sex. The second section comprised 22 items grouped into seven dimensions associated with self-perception of GenAI in education: Familiarity, Relevance, Practical Skills, Barriers, Confidence, Ethical and Social Impact, and Expectations. Each dimension comprised between two and four Likert-type items, with a response scale ranging from 1 (strongly disagree) to 5 (strongly agree). The third section gathered assessments of the usefulness of ChatGPT in the design of LSs through five items: two Likert-type questions on Satisfaction and Perceived usefulness, and three multiple-choice items on the Quality of the responses generated, the Attitude adopted by the participant, and Training.

Table 1. Questionnaire items

Second section

| Dimension | Item | Text |
| --- | --- | --- |
| Level of familiarity with GenAI | F01 | I am familiar with the basic concepts of GenAI (machine learning, neural networks, algorithms, etc.). |
| | F02 | I consider myself to have a broad and detailed understanding of how GenAI can be applied in education. |
| | F03 | During my time at university, I had the opportunity to learn more about using GenAI in education. |
| | F04 | I have acquired knowledge of GenAI through online courses, workshops, and self-learning methods. |
| Perception of the relevance of GenAI in education | R01 | Incorporating GenAI is important for updating teaching and learning methods. |
| | R02 | GenAI-based tools have the potential to improve the quality of education. |
| | R03 | Integrating GenAI into education is a current trend. |
| Practical skills with GenAI tools | S01 | I am confident in my practical skills to use specific GenAI tools in education (educational chatbots, content recommendation systems, etc.). |
| | S02 | I have had successful practical experience using GenAI tools in education (educational chatbots, content recommendation systems, etc.). |
| | S03 | I believe I can teach others to use GenAI tools in education (educational chatbots, content recommendation systems, etc.). |
| | S04 | I have shared my practical experience of using GenAI tools in education with others (educational chatbots, content recommendation systems, etc.). |
| Perceived barriers to the integration of GenAI | B01 | The lack of specific and specialised training is an obstacle to integrating GenAI into education. |
| | B02 | Concerns about the privacy and security of personal information act as a barrier to GenAI adoption. |
| | B03 | Some educators' resistance to change is a challenge to the adoption of GenAI. |
| Confidence in integrating GenAI into teaching | C01 | I am confident in my ability to integrate GenAI into my future classes. |
| | C02 | I believe that GenAI can be a valuable teaching tool. |
| Ethical and social impact of AI in education. | I01 | It is important to consider the ethical implications of GenAI in education, such as bias, privacy, and transparency. |
| | I02 | The development of GenAI tools should prioritise inclusivity for all users. |
| | I03 | The adoption of GenAI in education requires the constant evaluation of its ethical and social impact. |
| Future expectations for GenAI in the field of education | E01 | I expect GenAI to play a central and decisive role in education in the future. |
| | E02 | GenAI can be used to tailor learning to the individual needs of each student. |
| | E03 | I intend to pursue further training in utilising GenAI tools and techniques, specifically for educational purposes. |

Third section

| Dimension | Item | Text |
| --- | --- | --- |
| Satisfaction | S01 | Indicate your level of satisfaction with the experience held. |
| Perceived usefulness | U01 | Do you consider Chat GPT to be a good tool to help teachers design LSs? |
| Quality of responses generated | Q01 | Do you consider the responses obtained with ChatGPT to be mostly: ● Incorrect ● Partially incorrect ● Incoherent ● Coherent ● Incomplete ● Complete ● Partially correct ● Correct |
| Attitude | A01 | Regarding the answers you obtained from ChatGPT: ● You fully trusted their accuracy and used them. ● You verified the accuracy of the answer by comparing it with other sources and modified it partially. ● You verified the accuracy of the answer by comparing it with other sources and modified it completely. |
| Training | T01 | If we wanted ChatGPT to be used by teachers, you believe that: ● Students and teachers should receive training on the specific tool and on GenAI in general. ● We could receive a brief introduction to ChatGPT in particular, its context and certain warnings about its use. ● The tool is easy to use and easy to adapt to. No training is necessary. |

Data collection and analysis

Data collection took place between October 2024 and January 2025 with voluntary and anonymous participation and prior informed consent.

Descriptive and relational analyses were carried out to statistically characterise the dimensions evaluated in relation to self-perception and the usefulness of GenAI in education.

Each dimension of the questionnaire was treated as a composite variable, summarised by its mean (μ) and standard deviation (σ), and its normality was assessed using the Shapiro–Wilk test to guide the choice between parametric and non-parametric techniques. Although means and standard deviations are reported, as is common practice in educational research, the relationship between the age of the participants and the different dimensions was analysed using Spearman’s correlation coefficient (ρ), given its robustness, as it does not require normality or linearity assumptions, and its suitability for the type of scale used.
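As a minimal sketch of the scoring and correlation steps just described (not the authors' R code, which is hosted on OSF), the following Python fragment averages the items of one dimension into a composite score and computes Spearman's ρ against age. The participant records, the use of the item mean as the composite, and the helper names `average_ranks` and `spearman_rho` are illustrative assumptions.

```python
from statistics import mean


def average_ranks(values):
    """Return 1-based ranks; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        shared = (start + end) / 2 + 1  # mean of positions start..end, 1-based
        for pos in range(start, end + 1):
            ranks[order[pos]] = shared
        start = end + 1
    return ranks


def spearman_rho(x, y):
    """Spearman's rho computed as Pearson's r on the tie-aware ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = mean(rx), mean(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    return cov / (var_x * var_y) ** 0.5


# Hypothetical records: age plus the three Relevance items (1-5 Likert scale).
participants = [
    {"age": 19, "R01": 3, "R02": 4, "R03": 3},
    {"age": 20, "R01": 4, "R02": 4, "R03": 4},
    {"age": 22, "R01": 4, "R02": 5, "R03": 4},
    {"age": 27, "R01": 5, "R02": 4, "R03": 5},
    {"age": 35, "R01": 5, "R02": 5, "R03": 5},
]

# Assumed composite: the mean of the items that belong to the dimension.
relevance = [mean([p["R01"], p["R02"], p["R03"]]) for p in participants]
ages = [p["age"] for p in participants]
rho = spearman_rho(ages, relevance)
print(f"Spearman rho (age vs Relevance) = {rho:.2f}")
```

Because the coefficient works on ranks, it only assumes a monotonic relationship, which suits composites built from ordinal Likert items.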

Differences between degree programme groups in the questionnaire dimensions were explored using the Kruskal–Wallis test for independent samples, as the assumptions of normality (Shapiro–Wilk) and homogeneity of variances (Levene) were not met. The results are presented as the median [interquartile range], together with the corresponding p-value for the comparison between groups.
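The group comparison can be sketched as follows, assuming the textbook Kruskal–Wallis H statistic with the usual tie correction and quartiles computed with the inclusive method. The scores per programme are invented for illustration, the function names are hypothetical, and the p-value (obtained from a chi-square distribution with k − 1 degrees of freedom) is left out because the Python standard library has no chi-square routine.

```python
from statistics import median, quantiles


def kruskal_wallis_h(groups):
    """Kruskal-Wallis H statistic with the standard correction for ties."""
    pooled = sorted(v for g in groups for v in g)
    n = len(pooled)
    rank_of, tie_term, i = {}, 0, 0
    while i < n:
        j = i
        while j + 1 < n and pooled[j + 1] == pooled[i]:
            j += 1
        t = j - i + 1                        # size of this block of ties
        rank_of[pooled[i]] = (i + j) / 2 + 1  # mid-rank shared by the block
        tie_term += t ** 3 - t
        i = j + 1
    rank_part = sum(sum(rank_of[v] for v in g) ** 2 / len(g) for g in groups)
    h = 12 / (n * (n + 1)) * rank_part - 3 * (n + 1)
    return h / (1 - tie_term / (n ** 3 - n))


def median_iqr(values):
    """Median with first and third quartiles (inclusive method)."""
    q1, _, q3 = quantiles(values, n=4, method="inclusive")
    return median(values), q1, q3


# Hypothetical composite scores for three degree programmes.
scores = {
    "Programme A": [3.33, 3.67, 4.00, 3.67, 3.33],
    "Programme B": [3.67, 4.00, 4.33, 4.00, 3.67],
    "Programme C": [4.00, 4.33, 4.67, 4.33, 5.00],
}

for name, values in scores.items():
    med, q1, q3 = median_iqr(values)
    print(f"{name}: {med:.2f} [{q1:.2f}; {q3:.2f}]")
print(f"H = {kruskal_wallis_h(list(scores.values())):.2f}")
```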

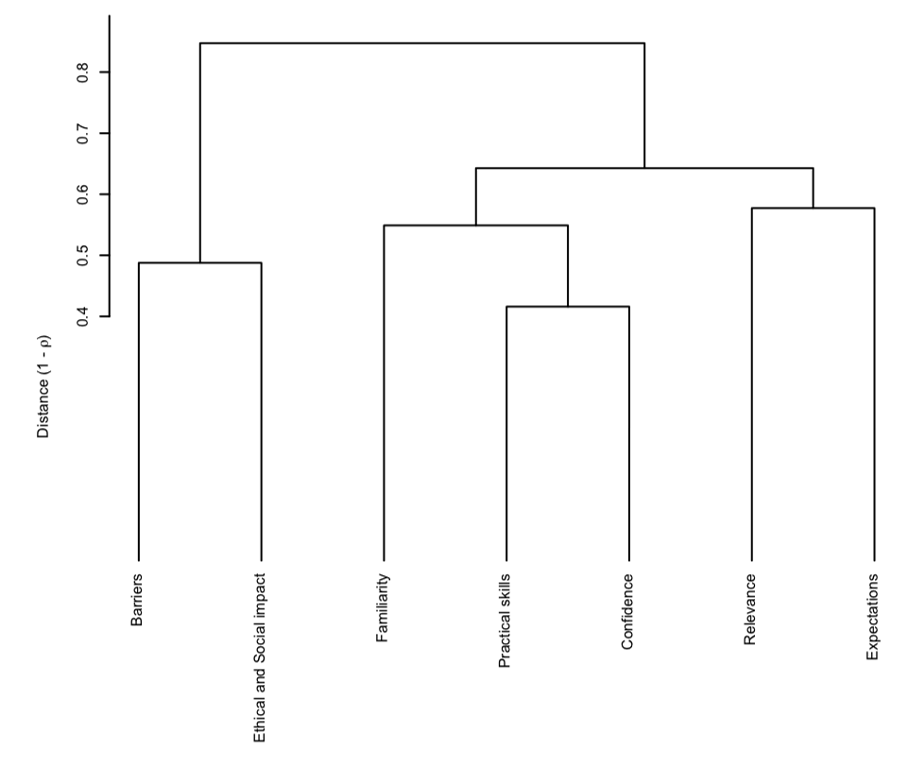

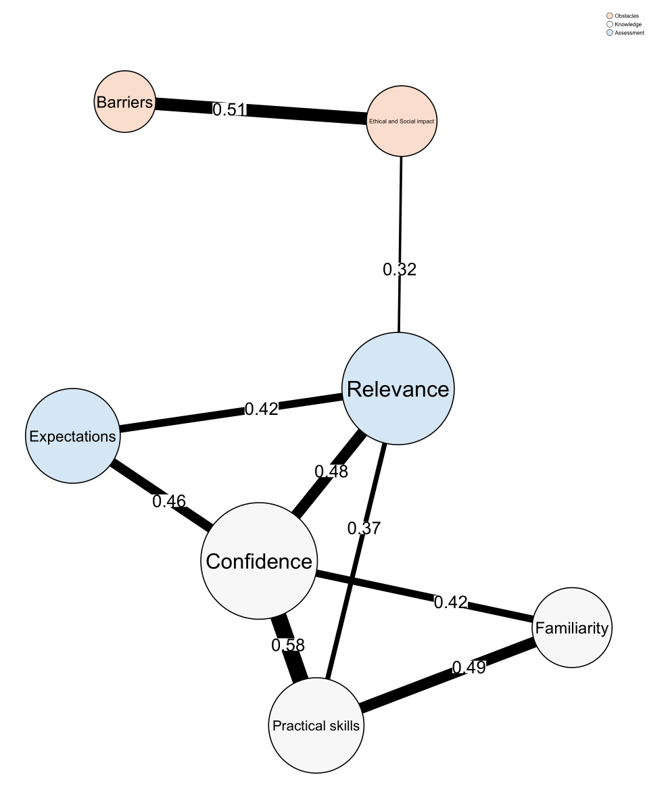

Exploratory and structural representation analyses were performed using networks to visualise the relationships between the questionnaire dimensions. Based on a correlation analysis between composite dimensions, a hierarchical dendrogram was generated using 1 – ρ (Spearman) distances. This made it possible to identify conceptual clusters between the dimensions and to detect interrelated thematic axes in participants’ self-perceptions, such as knowledge, assessment, and barriers. This structure facilitated the visual organisation of a correlation network, providing an intuitive and relational representation of the links between the evaluated constructs. All analyses were performed using the free software R (version 4.4.3) (R Core Team, 2024). To promote transparency and reproducibility, the code used for the analyses is available in the OSF repository: https://osf.io/c83dh (Pinto-Llorente et al., 2025). As the dataset contains sensitive student information, it will be made available upon request from the authors.
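The clustering idea behind the dendrogram can be illustrated with a short sketch that merges dimensions agglomeratively on 1 − ρ distances. The article does not state the linkage method, so average linkage is an assumption here, and the correlation values are invented for four of the seven dimensions; the function `agglomerate` is hypothetical and is not the authors' R code.

```python
def agglomerate(names, rho, n_clusters):
    """Average-linkage agglomerative clustering on 1 - rho distances."""
    clusters = [[i] for i in range(len(names))]

    def distance(a, b):
        # Average linkage: mean pairwise distance between cluster members.
        return sum(1 - rho[i][j] for i in a for j in b) / (len(a) * len(b))

    while len(clusters) > n_clusters:
        pairs = [
            (distance(clusters[p], clusters[q]), p, q)
            for p in range(len(clusters))
            for q in range(p + 1, len(clusters))
        ]
        _, p, q = min(pairs)                  # closest pair of clusters
        clusters[p] = clusters[p] + clusters[q]
        del clusters[q]
    return [sorted(names[i] for i in c) for c in clusters]


# Hypothetical Spearman correlations between four dimensions.
names = ["Familiarity", "Practical Skills", "Relevance", "Expectations"]
rho = [
    [1.0, 0.8, 0.1, 0.1],
    [0.8, 1.0, 0.1, 0.1],
    [0.1, 0.1, 1.0, 0.7],
    [0.1, 0.1, 0.7, 1.0],
]

print(agglomerate(names, rho, n_clusters=2))
```

Strongly correlated dimensions have small 1 − ρ distances and are merged first, which is what places them on neighbouring branches of a dendrogram.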

RESULTS

The results are presented below, organised according to the analyses conducted and the research questions.

RQ1. What is the self-perception of pre-service teachers regarding GenAI in relation to their Familiarity with it, its perceived Relevance, their Practical Skills, the Barriers they face, their Confidence, its Ethical and Social Impact, and their Expectations?

The descriptive results show medium-to-high levels of self-perception regarding GenAI. Moderate levels were observed for Confidence (μ = 3.91; σ = 0.68), Expectations (μ = 3.72; σ = 0.68), Familiarity (μ = 3.56; σ = 0.73), and Practical Skills (μ = 3.56; σ = 0.76), and high levels for Ethical and Social Impact (μ = 4.34; σ = 0.65), Barriers (μ = 4.18; σ = 0.60), and Relevance (μ = 4.08; σ = 0.57).

The associations between the age of participants and the questionnaire dimensions were analysed. The results indicated positive and statistically significant Spearman correlations between age and the dimensions of Relevance (ρ = 0.30; p < 0.01), Expectations (ρ = 0.20; p < 0.01), and Ethical and Social Impact (ρ = 0.40; p < 0.001).

Differences in the dimensions related to the self-perception of GenAI were also analysed according to the degree programme that the participants were enrolled in. Significant differences (p < 0.05) were found concerning the Expectations dimension. The master's degree in ICT in Education obtained the highest median (4 [3.67; 4.33]), while the other degrees presented slightly lower values, reflecting moderate variability between groups. Conversely, in the Familiarity dimension, a difference close to the threshold of statistical significance was detected (p = 0.051). Familiarity was lower for the master's degree in Innovation in Specific Teaching Methods (median 3.25 [3; 3.75]) and higher for the bachelor's degree in Early Childhood Education and the master's degree in ICT in Education (both with a median of 3.75).

Regarding the Barriers dimension, the differences did not reach statistical significance (p > 0.05), but the master's degree in Innovation in Specific Teaching Methods showed a greater perception of barriers (4.67 [4.33; 4.67]) than the bachelor's degrees and the master's degree in ICT in Education (median 4.0). No significant differences were found concerning the Confidence dimension (p > 0.05). However, the double degree in Education had the lowest median score (3.50 [3.50; 4]), while the other degrees were all around 4.0. No statistically significant differences were observed between degrees with regard to Practical Skills (p > 0.05). The medians remained between 3.25 and 3.75, with no clear pattern emerging.

Finally, statistically significant differences (p < 0.001) were observed between degrees in the Ethical and Social Impact dimension. The highest median was obtained by the master's degree in Innovation in Specific Teaching Methods (5 [4.67; 5]), followed by the master's degree in ICT in Education (4.67 [4; 5]), in contrast to the lower values obtained by the undergraduate degrees.

Effect sizes were calculated for each inferential comparison by degree programme. The variable Age (η²_H = 0.678) showed the largest effect size, with a marked difference between programmes. Among the questionnaire dimensions, Ethical and Social Impact (η²_H = 0.142) displayed a moderate effect size. In contrast, Expectations (η²_H = 0.036), Relevance (η²_H = 0.036), and Familiarity (η²_H = 0.032) presented small effect sizes, consistent with p values close to or below 0.05. Barriers (η²_H = 0.022) and Confidence (η²_H = 0.021) showed even smaller effects, while no relevant effect was observed for Practical Skills (η²_H = –0.003). Regarding the categorical variable Sex, the effect size was moderate (Cramér’s V = 0.372), indicating an unequal distribution across programmes.
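The article does not state how η²_H was obtained. A common estimator for the Kruskal–Wallis test, given here as an assumption rather than as the authors' formula, is

$$\eta^2_H = \frac{H - k + 1}{n - k}$$

where H is the Kruskal–Wallis statistic, k the number of groups (five degree programmes), and n the sample size (174). Under that assumption, the Familiarity value corresponds to H ≈ 0.032 × 169 + 4 ≈ 9.41. The estimator turns negative whenever H < k − 1, which would account for the slightly negative value for Practical Skills; such values are usually read as the absence of an effect.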

The dendrogram generated from Spearman's correlations (Figure 1) showed a clear hierarchical organisation between the evaluated dimensions. A first cluster was identified, called Knowledge, composed of Familiarity, Practical Skills and Confidence. A second cluster, Assessment, grouped the dimensions Relevance and Expectations. Finally, Ethical and Social Impact, and Barriers formed the Obstacles cluster.

Figure 1. Hierarchical grouping of self-perception dimensions on GenAI

The dendrogram was used to represent a network of correlations between the dimensions (Figure 2), which was constructed from a Spearman's correlation matrix. The correlation network represents each dimension as a node. The nodes are arranged in a way that accurately reflects the clusters that emerged from the hierarchical analysis (Knowledge in white, Assessment in blue and Obstacles in orange), thereby reinforcing the empirical validity of the conceptual organisation adopted. The thickness of each line represents the intensity of the correlation.

Centrality metrics of the dimensions in the correlation network were calculated, considering a threshold of |ρ| ≥ 0.3 to define the presence of edges. The measures included degree (number of significant connections), betweenness, and closeness.

The dimensions Relevance and Confidence stood out for having the highest degree (4 connections each), as well as the highest betweenness values (8.5 and 3.0, respectively), indicating that they act as central nodes in the network. Practical Skills also showed moderate connectivity (degree = 3). In contrast, dimensions such as Barriers, Familiarity, and Expectations displayed lower degree and low betweenness, suggesting a more peripheral role.
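To make the thresholding and two of the centrality measures concrete, the sketch below keeps an edge wherever |ρ| ≥ 0.3 and then computes degree and unnormalised betweenness with Brandes' algorithm for unweighted graphs. The correlation matrix is invented, the function names are hypothetical, and closeness is omitted for brevity; the authors' own analysis was run in R.

```python
from collections import deque


def build_graph(names, rho, threshold=0.3):
    """Undirected graph with an edge wherever |rho| meets the threshold."""
    graph = {name: set() for name in names}
    for i, a in enumerate(names):
        for j in range(i + 1, len(names)):
            if abs(rho[i][j]) >= threshold:
                graph[a].add(names[j])
                graph[names[j]].add(a)
    return graph


def betweenness(graph):
    """Unnormalised betweenness centrality (Brandes, unweighted graph)."""
    score = {v: 0.0 for v in graph}
    for s in graph:
        stack, preds = [], {v: [] for v in graph}
        sigma = {v: 0 for v in graph}   # number of shortest paths from s
        sigma[s] = 1
        dist = {s: 0}
        queue = deque([s])
        while queue:                    # breadth-first search from s
            v = queue.popleft()
            stack.append(v)
            for w in graph[v]:
                if w not in dist:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        delta = {v: 0.0 for v in graph}
        while stack:                    # accumulate dependencies backwards
            w = stack.pop()
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != s:
                score[w] += delta[w]
    return {v: b / 2 for v, b in score.items()}  # each pair was counted twice


# Hypothetical Spearman correlations between four dimensions.
names = ["Familiarity", "Practical Skills", "Confidence", "Relevance"]
rho = [
    [1.00, 0.60, 0.35, 0.10],
    [0.60, 1.00, 0.40, 0.20],
    [0.35, 0.40, 1.00, 0.50],
    [0.10, 0.20, 0.50, 1.00],
]

graph = build_graph(names, rho)
degree = {v: len(neighbours) for v, neighbours in graph.items()}
between = betweenness(graph)
for v in names:
    print(f"{v}: degree = {degree[v]}, betweenness = {between[v]:.1f}")
```

In this toy network the only route to Relevance runs through Confidence, so Confidence collects all of the betweenness, which is the sense in which a node is described as central.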

Figure 2. Correlation network of self-perception dimensions on GenAI in education

RQ2. How do pre-service teachers rate the usefulness of ChatGPT as a support tool for LS design?

ChatGPT was generally assessed very positively as a support tool for LS design. Satisfaction reached a mean of 4.45 (σ = 0.68), and Perceived usefulness a mean of 4.24 (σ = 0.84). The quality of the generated responses was assessed using a dichotomous item (‘Yes’/‘No’). Only the results from participants who responded affirmatively are reported. The majority rated the responses as coherent (n = 82; 66.70%), while smaller percentages considered them incorrect (n = 20; 16.30%), complete (n = 19; 15.40%), partially correct (n = 17; 13.80%), partially incorrect (n = 14; 11.40%), incomplete (n = 14; 11.40%), or incoherent (n = 7; 5.70%). None were rated as completely correct.

Regarding their Attitude towards the generated responses, the majority (n = 138, 79.30%) indicated that they verified the responses against other sources and partially modified them. A smaller proportion (n = 27, 15.50%) indicated that they fully trusted the responses and used them directly without making any changes. Only a very small group (n = 9, 5.20%) verified the responses and modified them completely.

Consistent with this critical and reflective approach, a large proportion of the sample (n = 117, 67.20%) stated that training in the use of ChatGPT and other GenAI tools is necessary for teachers and students. A total of 27.60% (n = 48) indicated that such training should be aimed exclusively at teachers, while only 5.20% (n = 9) considered it unnecessary.

Correlations were explored to identify factors associated with a more positive assessment. The results revealed positive and statistically significant correlations between perceived Usefulness of ChatGPT and the following dimensions:

- Confidence (ρ = 0.36; p < 0.001)

- Relevance (ρ = 0.23; p = 0.0019)

- Practical Skills (ρ = 0.20; p = 0.0088)

These correlations suggest that individuals who have greater Confidence, possess better Practical Skills, and consider GenAI to be more relevant in education tend to rate the Usefulness of ChatGPT for LS design more highly.

McDonald’s ω was used to estimate internal consistency for the seven dimensions of the second section of the questionnaire. The six dimensions for which it could be calculated showed adequate internal consistency: Familiarity (ω = 0.80), Relevance (ω = 0.72), Practical Skills (ω = 0.85), Barriers (ω = 0.70), Ethical and Social Impact (ω = 0.81), and Expectations (ω = 0.74). For the Confidence dimension, composed of only two items, the coefficient could not be calculated. Similarly, it could not be estimated for the four dimensions of the third section that consist of a single item. For the dimension Quality of the generated responses, ω = 0.65 was obtained. The literature considers values between 0.70 and 0.90 acceptable, and values above 0.65 may also be accepted (Ventura-León & Caycho-Rodríguez, 2017).
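For reference, McDonald's ω for a one-factor model is usually written as follows; the notation is the standard one and is not taken from the article:

$$\omega = \frac{\left(\sum_{i=1}^{p} \lambda_i\right)^2}{\left(\sum_{i=1}^{p} \lambda_i\right)^2 + \sum_{i=1}^{p} \theta_i}$$

where λ_i is the factor loading and θ_i the residual variance of item i, and p is the number of items. A plausible reason why the coefficient is unavailable for two-item dimensions is model identification: p items supply p(p + 1)/2 observed variances and covariances, whereas the one-factor model with the factor variance fixed to 1 has 2p free parameters. With p = 2 there are 3 observed values for 4 parameters, so the loadings cannot be estimated without extra constraints; with p = 3 the model is just identified (6 and 6).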

DISCUSSION

This research focused on understanding pre-service teachers' self-perceptions of GenAI and the usefulness of ChatGPT in designing LSs. Regarding the first research question, the dimensions with the highest levels were Ethical and Social Impact, Barriers, and Relevance, which suggests a critical awareness of GenAI. With respect to Ethical and Social Impact, numerous studies report similar challenges relating to bias, privacy and transparency (Ishmuradova et al., 2025; Lozano & Blanco, 2023; Markos et al., 2024), as well as unequal access to GenAI, which could exacerbate educational disparities (Kasneci et al., 2023) and even limit access to knowledge (UNESCO, 2023). Furthermore, Jo (2024) found that privacy concerns are negatively associated with the use of GenAI. Previous studies also emphasise the need to integrate GenAI into educational contexts as a tool to support teachers and to provide teachers with specific training in its use (Ishmuradova et al., 2025; Lozano & Blanco, 2023; Markos et al., 2024). With regard to the dimension Relevance, previous studies highlight the significant benefits that GenAI can offer for enhancing the teaching and learning process (Ishmuradova et al., 2025; Markos et al., 2024).

The dimensions Confidence, Expectations, Familiarity, and Practical Skills showed moderate levels. These results are consistent with those of Kelly et al. (2023), who found that confidence and familiarity with GenAI are often limited, increasing with experience. Other studies suggest that demonstrating the benefits of using AI chatbots in education can favour their adoption and effective use (Jo, 2024). Regarding Expectations, the results align with other research highlighting the capacity of GenAI to adapt learning to students’ needs, thereby reinforcing its role in current and future education (Ruiz-Rojas et al., 2023; Sánchez, 2024; Sánchez-Prieto et al., 2025). In terms of Practical Skills, the results are consistent with those of Ng et al. (2023), who emphasise that future teachers require further experience to become familiar with, use, and teach with GenAI tools. It should be noted that, given the correlational nature of the study, these relationships do not imply causality.

The research revealed a hierarchical organisation of the dimensions studied, grouped into three clusters: Knowledge, Evaluation, and Barriers regarding GenAI. This organisation can be useful for guiding future initiatives aimed at pre-service teachers, as it provides a clear conceptual framework of their perceptions and needs.

The network results show that the dimensions Relevance and Confidence occupy central positions in the questionnaire structure, acting as key connecting nodes that facilitate articulation between blocks such as Familiarity, Practical Skills, and Expectations. In interpretative terms, this suggests that those who feel confident in integrating GenAI into their teaching practice (high Confidence) and who perceive it as a valuable tool (high Relevance) also tend to show greater prior knowledge, higher practical skills, and greater expectations for future use. Some reports indicate that confidence in GenAI increases the intention to use it (Ivanov et al., 2024). Their articulating role, confirmed by their nodal position in this research, had already been noted as a bridge between favourable attitudes and critical concerns. Overall, the results show a balance between positive attitudes and critical awareness. High scores in Expectations and Relevance coexist with equally high values in Ethical–social impact and practical Barriers. This pattern reflects that respondents recognise the potential of GenAI to improve teaching (Kasneci et al., 2023), without ignoring risks and limitations. The perception of obstacles is moderated by high expectations, indicating an attitude that strikes a balance between well-founded optimism and critical caution.
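One simple way to quantify how central a node is in a correlation network is its strength, the sum of the absolute correlations linking it to every other node. The sketch below is an illustration only: the study does not state which centrality index was used, and the function name, the three-node matrix, and its values are hypothetical.

```python
def node_strength(names, corr):
    """Sum of absolute off-diagonal correlations for each node."""
    strength = {}
    for i, name in enumerate(names):
        strength[name] = sum(
            abs(corr[i][j]) for j in range(len(names)) if j != i
        )
    return strength

# Hypothetical symmetric correlation matrix for three dimensions (NOT study data).
names = ["Confidence", "Relevance", "Barriers"]
corr = [
    [1.0, 0.5, 0.3],
    [0.5, 1.0, 0.1],
    [0.3, 0.1, 1.0],
]
strength = node_strength(names, corr)
most_central = max(strength, key=strength.get)
print(most_central, strength)
```

A node with high strength is strongly associated with many other dimensions, which is the sense in which a dimension can be described as a connecting node.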

The inclusion of effect sizes enabled a more precise interpretation of the practical relevance of differences between degree programmes. Although some comparisons did not reach statistical significance, small effect sizes in dimensions such as Expectations, Relevance, and Familiarity suggest subtle but potentially meaningful differences in educational contexts. Clear patterns were observed depending on the type of degree. Undergraduate students showed lower levels of Expectations and Familiarity with GenAI, as well as a slight tendency to perceive more Barriers than master's students. This suggests that postgraduate programmes promote greater confidence and expectations, possibly due to a more advanced curriculum or broader prior experience.

The Ethical and Social Impact dimension stands out in particular, with master's students clearly outperforming undergraduates. Overall, these findings reinforce the need to consider the actual magnitude of differences in order to guide educational proposals tailored to students’ profiles. In undergraduate programmes, it is advisable to foster interest and familiarity, whereas in postgraduate programmes, the focus should be on strengthening a critical and reflective view of GenAI.

Previous studies indicate that familiarity and predisposition towards innovative technologies tend to increase with higher levels of education (Saihi et al., 2025). Therefore, training interventions should be tailored to the profile: in undergraduate programmes, reinforcing motivation and providing early exposure to GenAI; and in postgraduate programmes, developing critical and collaborative competences (Saihi et al., 2025; Wang & Wang, 2022). However, this differentiation should be interpreted with caution, as the sample was self-selected and no qualitative triangulation was applied, limiting generalisation, particularly across educational levels. These findings are consistent with previous research that has highlighted the importance of critical digital literacy (Wang et al., 2024) and the need for training support to facilitate the responsible adoption of emerging technologies in education (Bearman & Ajjawi, 2023).

These reflections are particularly relevant in online or hybrid education contexts, where the use of tools such as ChatGPT can support the design and development of LSs, favouring personalisation and pedagogical adaptation in non-face-to-face modalities.

The implications for teacher training are clear: programmes must integrate technical, critical, and ethical components in a balanced way. Technical competences should be developed through practical instruction with tools such as ChatGPT, a basic understanding of its algorithms, and specific teaching activities (Wang & Wang, 2022). Furthermore, critical thinking and digital literacy should be fostered, for example, through training to evaluate the quality of content generated by GenAI and by promoting strategies to verify information and analyse biases (Kasneci et al., 2023). It is also important to encourage ethical and professional reflection through debates and by raising awareness of ethical challenges (privacy, equity, technological dependence), in line with the call for an ethical pedagogical approach to GenAI (Kasneci et al., 2023).

In response to the second research question, high scores were obtained in both Satisfaction with ChatGPT and its perceived Usefulness. These results suggest that this technology could play a significant role in lesson planning and content production. A notable finding was the critical attitude adopted by students, most of whom chose to verify and adapt the information, thereby demonstrating metacognitive competence in the use of GenAI. This is particularly relevant given that GenAI can generate erroneous or biased responses, confirming that the effective use of ChatGPT also depends on pedagogical and ethical judgement. Furthermore, most participants considered specific training to be necessary, supporting the idea that the integration of GenAI tools requires training support, consistent with previous studies that emphasise the need for ethical, pedagogical, and operational frameworks for the appropriate use of GenAI (Ivanov et al., 2024; Kasneci et al., 2023).

In summary, the emergence of GenAI has generated considerable interest in understanding the needs of pre-service teachers for its effective incorporation into the classroom (Ogunleye et al., 2024). The evidence reveals a positive disposition and awareness of the educational benefits (Buyakova et al., 2024). Whitbread et al. (2025) emphasise the importance of establishing policies to ensure teacher training and to address the associated challenges. This includes AI literacy training programmes that enable teachers to integrate these technologies with confidence and effectiveness (Al-Abdullatif, 2024; Lozano & Blanco, 2023; Omar et al., 2024), promoting an informed and responsible use (Nyaaba & Zhai, 2024; Markos et al., 2024; Okunade, 2024).

CONCLUSIONS

This quantitative study, adopting an evaluative design, aimed to analyse the self-perception of pre-service teachers regarding GenAI and to assess their experience of using ChatGPT in the design of LSs. To this end, a questionnaire was administered after a training activity, which enabled the collection of empirical data on key dimensions, as well as on the perceived usefulness of the tool. The results show a favourable attitude towards its integration, with high levels of Familiarity, Practical Skills, positive Expectations, and appreciation of Ethical and Social Impact. The Confidence dimension emerges as a key structural axis, acting as a link between Knowledge, Attitude, and willingness to use.

Analysis of the correlation network reveals that teacher Confidence reflects not only self-efficacy but also a predisposition towards critically adopting emerging technologies. At the same time, a duality is observed between enthusiasm for the potential of GenAI and awareness of its ethical and practical risks. This highlights the need for training programmes that combine practical training with opportunities for critical reflection. The findings also suggest that the perception of barriers is not primarily associated with technical deficits, but with ethical and pedagogical sensitivities. The peripheral position of this dimension in the network underscores the importance of addressing it through contextualised approaches rather than exclusively through technological training.

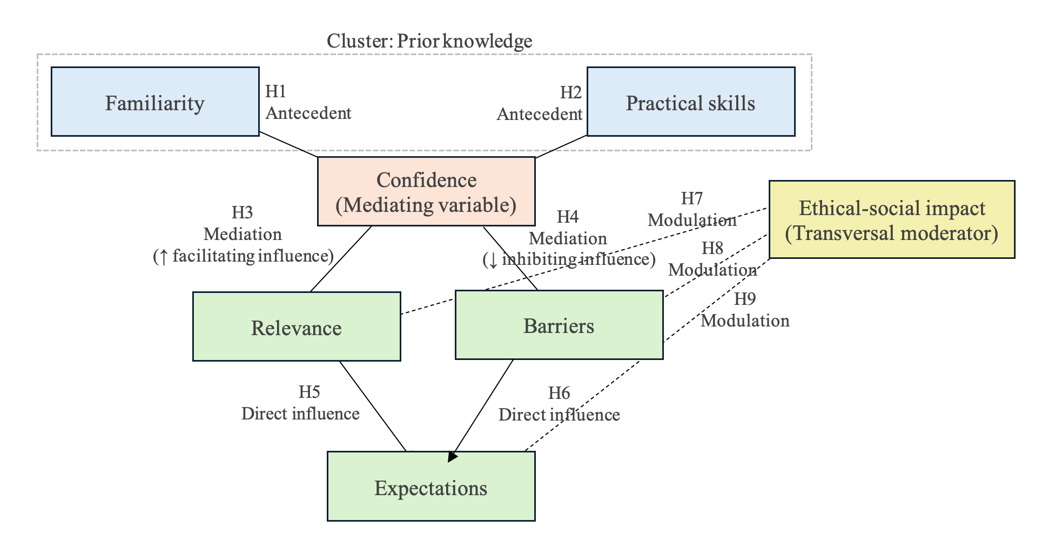

Based on the scientific literature and exploratory findings obtained through network analysis, we propose a conceptual model that articulates the expected relationships among the different dimensions of self-perception of GenAI in pre-service teachers.

In this model, Familiarity and Practical Skills are grouped into a cluster termed Prior knowledge, which acts as an antecedent and facilitates the development of Confidence in the use of GenAI tools. This Confidence is conceived as a central mediating dimension, influencing both the perception of Relevance and a lower perception of Barriers to its implementation. In turn, both influence the construction of future Expectations regarding the educational use of this technology.

Ethical and Social Impact is positioned as a transversal dimension that critically modulates attitudes towards the adoption of GenAI, particularly among those who perceive greater relevance and confidence, but who are also more aware of ethical and social challenges. This critical disposition is associated with a reflective attitude observable in the use of tools such as ChatGPT.

The model anticipates that Confidence operates as an articulating node between prior knowledge and future willingness towards GenAI, whereas Ethical impact acts as a critical regulator of these attitudes.

This model aligns with the Technology Acceptance Model (TAM), centred on perceived usefulness and attitudes towards technology, and with the AI-CFT, which integrates ethical and pedagogical competences for the responsible use of AI. In summary, the model articulates the hypothetical relationships represented in Figure 3.

Figure 3. Conceptual model: Hypothetical relationships among self-perception dimensions of GenAI

Based on these results, five action lines are proposed to effectively integrate GenAI into teacher training: (1) ongoing training that combines technical use and ethical implications; (2) hands-on learning through applied activities; (3) the development of critical thinking; (4) initial support to strengthen teacher confidence; and (5) ongoing evaluation of ethical and social impact. Likewise, institutions are urged to integrate these principles into their policies and curricula, promoting hybrid and collaborative environments that facilitate equitable use of GenAI and strengthen communities of practice for teacher professional development.

GenAI offers a strategic opportunity to transform education, enriching teaching, fostering innovation, and democratising access to knowledge. Its implementation requires profound pedagogical reflection, clear policies, and ongoing training, focusing not only on technological capabilities but also on its pedagogical integration adapted to each context, while promoting a critical digital culture. From this perspective, the findings deepen the understanding of pre-service teachers’ attitudes towards a critical adoption of GenAI and establish a foundation for future teachers to integrate this technology into their classrooms, preparing compulsory-level students for its ethical and creative use.

This study has some limitations. Its cross-sectional design prevents causal inferences, and it relies solely on self-reported data without objective measures of actual use, which may affect accuracy. In addition, it does not include comparisons with other technologies or longitudinal follow-up, limiting the analysis of the specific value of ChatGPT and the evolution of perceptions over time. The sample is not representative of all educational levels, and there is a lack of qualitative triangulation that could provide greater depth.

Therefore, it is recommended that future research adopt longitudinal and inter-institutional designs, integrate mixed methods with qualitative triangulation (interviews, focus groups) and objective measures of use, and systematically compare GenAI with other educational technologies to identify its specific advantages and limitations.

REFERENCES

Al-Abdullatif, A. M. (2024). Modeling teachers’ acceptance of generative artificial intelligence use in higher education: The role of AI literacy, intelligent TPACK, and perceived trust. Education Sciences, 14(11), 1209. https://doi.org/10.3390/educsci14111209

Al-Abdullatif, A. M., & Alsubaie, M. A. (2024). ChatGPT in learning: Assessing students’ use intentions through the lens of perceived value and the influence of AI literacy. Behavioral Sciences, 14(9), 845. https://doi.org/10.3390/bs14090845

Alasadi, E. A., & Baiz, C. R. (2023). Generative AI in education and research: Opportunities, concerns, and solutions. Journal of Chemical Education, 100(8), 2965-2971. https://doi.org/10.1021/acs.jchemed.3c00323

Alba Pastor, C. (2022). Enseñar pensando en todos los estudiantes: El modelo de diseño universal para el aprendizaje (DUA). Ediciones SM.

Aravena Castillo, F. (2013). Developing the collaborative model in the initial formation: The autoperception of the professional performance of the beginner teacher in action. Estudios Pedagógicos, 39(1), 27-44. https://doi.org/10.4067/S0718-07052013000100002

Arias, F. G. (2012). El proyecto de investigación. Episteme.

Bayly-Castaneda, K., Ramírez-Montoya, M.-S., & Morita-Alexander, A. (2024). Crafting personalized learning paths with AI for lifelong learning: A systematic literature review. Frontiers in Education, 9, Article 1424386. https://doi.org/10.3389/feduc.2024.1424386

Bearman, M., & Ajjawi, R. (2023). Learning to work with the black box: Pedagogy for a world with artificial intelligence. British Journal of Educational Technology, 54(5), 1160-1173. https://doi.org/10.1111/bjet.13337

Buyakova, K. I., Dmitriev, Ya. A., Ivanova, A. S., Feshchenko, A. V., & Yakovleva, K. I. (2024). Students’ and teachers’ attitudes towards the use of tools with generative artificial intelligence at the university. The Education and Science Journal, 26(7), 160-193. https://doi.org/10.17853/1994-5639-2024-7-160-193

Carranza Alcántar, M. del R., Macías González, G. G., Gómez Rodríguez, H., Jiménez Padilla, A. A., & Jacobo Montes, F. M. (2024). Percepciones docentes sobre la integración de aplicaciones de IA generativa en el proceso de enseñanza universitario. REDU. Revista de Docencia Universitaria, 22(2), 21-40. https://doi.org/10.4995/redu.2024.22027

Currie, G. M. (2025). Generative artificial intelligence in nuclear medicine education. Journal of Nuclear Medicine Technology, 53(1), 72-79. https://doi.org/10.2967/jnmt.124.268323

Denny, P., Prather, J., Becker, B. A., Finnie-Ansley, J., Hellas, A., Leinonen, J., Luxton-Reilly, A., Reeves, B. N., Santos, E. A., & Sarsa, S. (2024). Computing education in the era of generative AI. Communications of the ACM, 67(2), 56-67. https://doi.org/10.1145/3624720

Espinoza-San Juan, J., Raby, M. D., & Sagredo-Lillo, E. (2024). Validación de un cuestionario sobre las percepciones y usos de la IA-Gen entre estudiantes de pedagogía. RISTI: Revista Ibérica de Sistemas e Tecnologias de Informação, (70), 574-585.

Gozalo-Brizuela, R., & Garrido-Merchán, E. E. (2024). A survey of generative AI applications. Journal of Computer Science, 20(8), 801-818. https://doi.org/10.3844/jcssp.2024.801.818

Haroud, S., & Saqri, N. (2025). Generative AI in higher education: Teachers’ and students’ perspectives on support, replacement, and digital literacy. Education Sciences, 15(4), 396. https://doi.org/10.3390/educsci15040396

Hernández-Sampieri, R., & Mendoza-Torres, C. P. (2023). Metodología de la investigación. McGraw-Hill.

Ishmuradova, I. I., Zhdanov, S. P., Kondrashev, S. V., Erokhova, N. S., Grishnova, E. E., & Volosova, N. Y. (2025). Pre-service science teachers’ perception on using generative artificial intelligence in science education. Contemporary Educational Technology, 17(3), ep579. https://doi.org/10.30935/cedtech/16207

Islam, G., & Greenwood, M. (2024). Generative artificial intelligence as hypercommons: Ethics of authorship and ownership. Journal of Business Ethics, 192(3), 659-663. https://doi.org/10.1007/s10551-024-05741-9

Ivanov, S., Soliman, M., Tuomi, A., Alkathiri, N. A., & Al-Alawi, A. N. (2024). Drivers of generative AI adoption in higher education through the lens of the theory of planned behaviour. Technology in Society, 77, 102521. https://doi.org/10.1016/j.techsoc.2024.102521

Jo, H. (2024). From concerns to benefits: A comprehensive study of ChatGPT usage in education. International Journal of Educational Technology in Higher Education, 21, 35. https://doi.org/10.1186/s41239-024-00471-4

Kasneci, E., Sessler, K., Küchemann, S., Bannert, M., Dementieva, D., Fischer, F., Gasser, U., Groh, G., Günnemann, S., Hüllermeier, E., Krusche, S., Kutyniok, G., Michaeli, T., Nerdel, C., Pfeffer, J., Poquet, O., Sailer, M., Schmidt, A., Seidel, T., … Kasneci, G. (2023). ChatGPT for good? On opportunities and challenges of large language models for education. Learning and Individual Differences, 103, 102274. https://doi.org/10.1016/j.lindif.2023.102274

Kelly, A., Sullivan, M., & Strampel, K. (2023). Generative artificial intelligence: University student awareness, experience, and confidence in use across disciplines. Journal of University Teaching & Learning Practice, 20(6). https://doi.org/10.53761/1.20.6.12

Laupichler, M. C., Aster, A., Schirch, J., & Raupach, T. (2022). Artificial intelligence literacy in higher and adult education: A scoping literature review. Computers and Education: Artificial Intelligence, 3, 100101. https://doi.org/10.1016/j.caeai.2022.100101

Lozano, A., & Blanco Fontao, C. (2023). Is the education system prepared for the irruption of artificial intelligence? A study on the perceptions of students of primary education degree from a dual perspective: Current pupils and future teachers. Education Sciences, 13(7), 733. https://doi.org/10.3390/educsci13070733

Markos, A., Prentzas, J., & Sidiropoulou, M. (2024). Pre-service teachers’ assessment of ChatGPT’s utility in higher education: SWOT and content analysis. Electronics, 13(10), 1985. https://doi.org/10.3390/electronics13101985

Miao, F., & Cukurova, M. (2024). AI competency framework for teachers. UNESCO. https://doi.org/10.54675/ZJTE2084

Moorhouse, B. L., & Kohnke, L. (2024). The effects of generative AI on initial language teacher education: The perceptions of teacher educators. System, 122, 103290. https://doi.org/10.1016/j.system.2024.103290

Moorhouse, B. L., Wan, Y., Wu, C., Kohnke, L., Ho, T. Y., & Kwong, T. (2024). Developing language teachers’ professional generative AI competence: An intervention study in an initial language teacher education course. System, 125, 103399. https://doi.org/10.1016/j.system.2024.103399

Mouta, A., Torrecilla-Sánchez, E. M., & Pinto-Llorente, A. M. (2025). Comprehensive professional learning for teacher agency in addressing ethical challenges of AIED: Insights from educational design research. Education and Information Technologies, 30, 3343-3387. https://doi.org/10.1007/s10639-024-12946-y

Ng, D. T. K., Leung, J. K. L., Su, J., Ng, R. C. W., & Chu, S. K. W. (2023). Teachers’ AI digital competencies and twenty-first century skills in the post-pandemic world. Educational Technology Research and Development, 71(1), 137-161. https://doi.org/10.1007/s11423-023-10203-6

Nyaaba, M., & Zhai, X. (2024). Generative AI professional development needs for teacher educators. Journal of AI, 8(1), 1-13. https://doi.org/10.61969/jai.1385915

Ogunleye, B., Zakariyyah, K. I., Ajao, O., Olayinka, O., & Sharma, H. (2024). A systematic review of generative AI for teaching and learning practice. Education Sciences, 14(6), 636. https://doi.org/10.3390/educsci14060636

Okunade, A. I. (2024). The role of artificial intelligence in teaching of science education in secondary schools in Nigeria. European Journal of Computer Science and Information Technology, 12(1), 57-67. https://doi.org/10.37745/ejcsit2013/vol12n15767

Omar, A., Shaqour, A. Z., & Khlaif, Z. N. (2024). Attitudes of faculty members in Palestinian universities toward employing artificial intelligence applications in higher education: Opportunities and challenges. Frontiers in Education, 9, 1414606. https://doi.org/10.3389/feduc.2024.1414606

Pinto-Llorente, A. M., Izquierdo-Álvarez, V., & Dolcet-Negre, M. M. (2025). Autopercepción y utilidad de la inteligencia artificial generativa en docentes en formación [Open Science Framework]. https://osf.io/c83dh

R Core Team. (2024). R: A language and environment for statistical computing (Version 4.4.3) [Computer software]. R Foundation for Statistical Computing. https://www.r-project.org/

Ruiz-Rojas, L. I., Acosta-Vargas, P., De-Moreta-Llovet, J., & Gonzalez-Rodriguez, M. (2023). Empowering education with generative artificial intelligence tools: Approach with an instructional design matrix. Sustainability, 15(15), Article 11524. https://doi.org/10.3390/su151511524

Saihi, A., Ben-Daya, M., & Hariga, M. (2025). The moderating role of technology proficiency and academic discipline in AI-chatbot adoption within higher education: Insights from a PLS-SEM analysis. Education and Information Technologies, 30, 5843-5881. https://doi.org/10.1007/s10639-024-13023-0

Sánchez Vera, M. del M. (2024). La inteligencia artificial como recurso docente: Usos y posibilidades para el profesorado. Educar, 60(1), 33-47. https://doi.org/10.5565/rev/educar.1810

Sánchez-Prieto, J. C., Izquierdo-Álvarez, V., del Moral-Marcos, M. T., & Martínez-Abad, F. (2025). Inteligencia artificial generativa para autoaprendizaje en educación superior: Diseño y validación de una máquina de ejemplos. RIED-Revista Iberoamericana de Educación a Distancia, 28(1), 59-81. https://doi.org/10.5944/ried.28.1.41548

Storey, V. C., Yue, W. T., Zhao, J. L., & Lukyanenko, R. (2025). Generative artificial intelligence: Evolving technology, growing societal impact, and opportunities for information systems research. Information Systems Frontiers. https://doi.org/10.1007/s10796-025-10581-7

Tai-Han, L., Hsing-Yi, C., Ming-Jr, J., Chih-Kai, C., Cherng-Lih, P., Guo-Shiou, L., Jyh-Cherng, Y., Ming-Shen, D., Cheng-Ping, Y., & Hung-Sheng, S. (2024). An advanced machine learning model for a web-based artificial intelligence-based clinical decision support system application: Model development and validation study. Journal of Medical Internet Research, 26, e56022. https://doi.org/10.2196/56022

UNESCO. (2023). Education 2030 agenda. https://www.unesco.org/en/digital-education/artificial-intelligence

Ventura-León, J. L., & Caycho-Rodríguez, T. (2017). El coeficiente omega: Un método alternativo para la estimación de la confiabilidad. Revista Latinoamericana de Ciencias Sociales, Niñez y Juventud, 15(1), 625-627.

Wang, K., Ruan, Q., Zhang, X., Fu, C., & Duan, B. (2024). Pre-service teachers’ GenAI anxiety, technology self-efficacy, and TPACK: Their structural relations with behavioral intention to design GenAI-assisted teaching. Behavioral Sciences, 14(5), 373. https://doi.org/10.3390/bs14050373

Wang, Y. Y., & Wang, Y. S. (2022). Development and validation of an artificial intelligence anxiety scale: An initial application in predicting motivated learning behavior. Interactive Learning Environments, 30(4), 619-634. https://doi.org/10.1080/10494820.2019.1674887

Whitbread, M., Hayes, C., Prabhakar, S., & Upsher, R. (2025). Exploring university staff’s perceptions of using generative artificial intelligence at university. Education Sciences, 15(3), 367. https://doi.org/10.3390/educsci15030367

Zhang, C., & Villanueva, L. E. (2023). Generative artificial intelligence preparedness and technological competence. International Journal of Education and Humanities, 11(2), 164-170. https://doi.org/10.54097/ijeh.v11i2.13753

Reception: 01 July 2025

Accepted: 18 August 2025

OnlineFirst: 14 October 2025

Publication: 01 January 2026