Monográfico

E-Guess: Usability Evaluation for Educational Games

E-Guess: Evaluación de usabilidad para juegos educativos

RIED. Revista Iberoamericana de Educación a Distancia, vol. 24, núm. 1, 2021

Asociación Iberoamericana de Educación Superior a Distancia

Received: 10 June 2020

Approved: 19 August 2020

How to reference this article: Campos da Silveira, A., Ximenes Martins, R., & Oliveira Vieira, E. A. (2021). E-Guess: Usability Evaluation for Educational Games. RIED. Revista Iberoamericana de Educación a Distancia, 24(1), pp. 245-263. doi: http://dx.doi.org/10.5944/ried.24.1.27690

Abstract: Usability is a relevant aspect in the analysis of the human-machine interface, as it concerns the dialogue established between subjects and artefacts and the quality of use and interaction allowed by the system. This work presents a specific heuristic for evaluating educational games, created from the Game User Experience Satisfaction Scale (GUESS) and, concomitantly, Nielsen's assessment tools. To this end, applied research was carried out using a quantitative-qualitative approach, with the participation of 3 specialized users and 4 potential users in an educational game used as a case study. The choice of GUESS as a starting point resulted from a systematic review of the usability literature. Based on the model, users were invited to operate the educational game and present their impressions. From the selected results, a new use assessment tool, called E-GUESS, was formulated. With Educational-GUESS, we introduced changes aimed at pedagogical issues and educational content. The instrument also seeks to elucidate important points in the development of an educational game by allowing insights that are easily ignored during the design phase, with the intention of overcoming the alleged bipolarity between "fun" and "educational" in educational software games. Another contribution of the research was the usability analysis performed for the educational game used in data collection. This game, which deals with the theme of the Periodic Table in Chemistry and is in the validation phase, received valuable contributions for adjustments in its gameplay.

Keywords: educational games, heuristic method, evaluation, educational technology, computer games.

Resumen: Usabilidad es un aspecto relevante en el análisis de la interfaz hombre-máquina, ya que se trata del diálogo que se establece entre sujetos, artefacto y calidad de uso e interacción que permite el sistema. Este trabajo presenta una heurística específica para la evaluación de juegos educativos, creada a partir de Game User Experience Satisfaction (GUESS) y, concomitantemente, las herramientas de evaluación de Nielsen. Para ello, se realizó una investigación aplicada con un enfoque cuantitativo-cualitativo - con la participación de 3 usuarios especializados y 4 usuarios potenciales en un juego educativo utilizado como estudio de caso. La elección de GUESS como punto de partida se debió a una revisión sistemática de la literatura sobre usabilidad. Según el modelo, se invitó a los usuarios a operar el juego educativo y presentar sus impresiones. A partir de los resultados seleccionados, se formuló una nueva herramienta de evaluación de uso, denominada E-GUESS. Con Educational-GUESS, introdujimos cambios dirigidos a temas pedagógicos y contenidos educativos que también busca dilucidar puntos importantes en el desarrollo de un juego educativo al permitir percepciones que son fácilmente ignorados durante la fase de diseño con la intención de superar la supuesta bipolaridad entre "divertido" y "educativo" en los juegos de software educativo. Otro aporte de la investigación fue el análisis de usabilidad realizado para el juego educativo utilizado en la recolección de datos. Este juego, que trata sobre el tema Tabla Periódica de Química y se encuentra en fase de validación, recibió valiosos aportes para ajustes en su jugabilidad.

Palabras clave: juegos educativos, método heurístico, evaluación, tecnología educacional, juego de ordenador.

In our age of information and innovation, societies continuously demand that educational institutions and systems improve and adapt the way they prepare students with new skills, so that students can benefit, in the best way possible, from socio-cultural and economic conditions. School, seen as an institution of formation and social insertion, must commit itself to keeping pace with the society for which it prepares its students. The shift to an information age generates new requirements and calls for 'additional training' for professionals in the field, who increasingly demand research on the integration of technological resources and educational content. Teacher training programs are involved in teaching and modelling practices for integrating digital information and communication technologies (DICTs) into student training processes. More than 75% of university professors believe that the use of DICTs is crucial for the discipline they teach and that it is likely to improve the quality of teaching and learning (Heinecke & Adamy, 2010).

However, in this process, there is a generational rupture, with young people from the digital age increasingly distant from the behavioral and cultural standpoint of previous generations. If, on the one hand, teachers believe that digital technologies can be mediating instruments implemented in teaching, on the other, studies indicate that young people do not use information technologies for educational purposes accordingly, making it a necessity to solve this problem in overcoming the so-called cultural and educational division between teachers and students (Azevedo et al., 2018). This is a fundamentally new approach that prepares teachers as active motivators and organizers of educational processes where the use of DICTs has a wide application.

It is clear, then, that schools are in the midst of a need for transformation, seeking to include DICTs in classrooms. That is, we are in the middle of a process of adapting general-purpose technologies to the school environment (for example, computers, software and smart phones). Even video games, for example, are no longer restricted to entertainment, being gradually included, with relative success, for educational purposes (Hawlitschek & Joeckel, 2017; Freitas, 2017).

The difficulties in applying these technologies are found in several areas, such as in the development phase, with the absence of software engineering procedures and methods for Educational Games that are at a higher level of complexity when compared to the development of conventional commercial software. Such complexity can compromise the ability to develop truly playful games. Another relevant issue is the absence of a method for evaluating and validating educational games that can also serve as guidance on how to develop and apply games in the classroom.

Both the complexity of building educational games and the difficulty of adapting them to the school context have a common point: the lack of an evaluation of their usability, that is, the identification of their playful potential, teaching potential and satisfaction level. In a literature review on the usability evaluation of educational games, Vieira, Silveira & Martins (2019) did not identify a consensus on the evaluation methods, in addition to the fact that most of the proposed models are based only on Nielsen's heuristics. In the absence of specific criteria for educational games, the authors detected tools that address the scale of satisfaction and use of games. However, the tools identified have low practical use because they were built for limited purposes and applications (Vieira et al., 2019).

Such difficulties in introducing games in classrooms are reflected, in academic terms, in an application that is lower than expected given their potential. Yeni & Cagiltay (2017) identified that integration with educational content in classrooms does not guarantee that a game is effective in terms of entertainment, motivation and fulfilling its educational or commercial objectives, even while recognizing that games have the potential to do so.

Multidisciplinary and methodology issues are fundamental to establish lines of research in this field, with the absorption of analyses and meta-analyses of a large amount of data combined with the qualitative methods established in education, such as content analysis, case studies and ethnology with other approaches, such as neurological studies and social network analysis, to provide a level of granularity that supports the best learning design and the best student experience, through the modelling of social behaviors.

As a contribution to this research field, we seek to develop a heuristic for the usability evaluation of educational games from an existing one applied exclusively to electronic games in general. To propose the new heuristic for educational games, we used as an experimentation base an educational game under development by a research group from a public university in Minas Gerais, Brazil, with support from the Minas Gerais State Research Support Foundation (Fundação de Amparo à Pesquisa do Estado de Minas Gerais, FAPEMIG). As previously shown by our studies (Vieira, Silveira & Martins, 2019), there is a disconnection between how games are made and the results in practical applications. This reflective narrative is motivated by our experiences both in the application of educational games and in their development. As Whitson (2020, p. 269) states, “[we] enter the field by writing about games and gamers but […] we are increasingly asked to become ‘theorist-practitioners’ and teach others to make games.” What we are trying to accomplish in this research is to formulate a tool and method to develop and evaluate games that are fundamentally educational, overcoming the "playful / educational" dichotomy present in the development of educational games (Vieira et al., 2019; Czauderna & Guardiola, 2019; Garcia-Ruiz et al., 2020).

This study is justified by the relevance of developing and evaluating digital educational games that do not cause cognitive overload for the user and that meet the basic usability criteria of educational software. For this reason, we focus on video game testing, as it is an important topic in game design and development: it includes quality assurance tests, which look for game software errors, and reproduction tests, which evaluate gameplay and analyze how fun the game is or should be. In short, testing is a valuable activity carried out in game development projects, because it can evaluate user interface, interaction design, gameplay and software problems (Garcia-Ruiz et al., 2020).

According to ABNT (2002) and other international standards such as ISO 9126 (Barbacci et al., 1995) and IEEE 730-2014 (2014), the product must meet specific objectives with effectiveness, efficiency and satisfaction in a specific context of use. In other words, it needs to meet the proposed educational criteria in relation to the potential for fun to involve the player. The mechanics of the game and its internal and formal structure, such as codes, algorithms, database, usability of the interface, the object layer, tools and interactions, need to obey certain ergonomic criteria of quality and user comfort, in addition to responding to its first objective, that is, the educational potential.

Literature Review

In Video Games and Learning, Squire (2011) presents, from personal experience, several video games and the relationships they have created with students. The author's knowledge and achievements permeate his examples from various games and their impact on social interactions, learning communities and culture. Squire starts with the question: why study video games? The author assumes that the study of games can contribute enormously in the educational search to reach the student of the digital age, agreeing that games have a unique potential to teach and learn, unlike any other way. The author also argues that playing enables the participant's intellectual and social growth in the long term and permeates his learning repertoire and states that the educational content, overlapping goals, continuous problem solving, social interactions and game cultures are critical aspects of learning through games. However, he states that: "whenever we let a child learn, instead of arousing his intellectual curiosity, we fail" (p. 15).

Currently, video games are considered tools to support student learning in classrooms (Junior, 2006; Ray, Powell & Jacobsen, 2014). Included in this category and in the field of technology-mediated education are Educational Games, which include Serious Educational Games (SEG), Educational Simulations (ES) and Serious Games (Lamb et al., 2018). Serious games are seen as effective in school education, although some studies come to negative conclusions (Zhonggen, 2019). Among the negative points are the difficulty in developing and producing specific games for educational purposes, as well as their complex practical application, which cannot be overlooked.

Ray, Powell & Jacobsen (2014), when analyzing teachers’ receptivity to use video games in classrooms, identified a high acceptance in relation to the ability of games to promote visual teaching (97% approval), and effectiveness when used as role plays and simulations (80% approval), but low acceptance in its ease of application (34% consider games easy to be applied).

In a literature review carried out by Freitas (2017), a series of difficulties were found related to measuring the effectiveness of educational games, although the final result was positive. Among the difficulties encountered is the dispersion of literature on different topics, with themes and scientific jargons not always accessible to all researchers, as they are scattered across multiple different academic fields. This is due to an implicit characteristic of educational games development, as it covers areas from computing and systems, cinema, design, pedagogy and content related to the educational area that the game addresses. In addition to its interdisciplinarity, game-based approaches require mixed study groups, including people who master the educational content, information technology themes and teaching models that can be problematic for conventional education systems.

Still according to Freitas (2017), the advancement of educational games is a challenge for educational institutions, public policies and professionals, both in the area of technology and pedagogy. However, with the growing evidence base, advances in quality can be made and challenges overcome. Despite the resistance to adopting such approaches, it will be a matter of time, as it was for other technologies, for games to establish themselves firmly within educational organizations. With the traditional learning paradigm making room for new approaches, game-based learning becomes more common and the incorporation of these artefacts into educational practices expands.

The expanding application of digital games is part of the changing contexts brought by digital information and communication technologies in access to knowledge. According to Levy (2003), this process allows social groups to develop collective intelligence. However, if on the one hand we have a significant contribution to the advancement of information sharing and collaborative knowledge construction practices, on the other we have to deal with an information overload (Kielgast & Hubbard, 1997) of the most varied types and forms.

For Sweller (2002), the information available so far indicates that the human cognitive architecture is not prepared to process much information simultaneously, since, when this occurs, there is an overload in working memory. Thus, the design and the way information is organized in virtual interface environments interfere in the process of understanding and retaining information.

The communication established in a virtual environment is based on images, textual or audiovisual materials. The relationship between the subject and the transposed content takes place through an intentional symbolic mediation. For Vygotsky (apud Smolka, 2000), it is the signs, socially constituted and internalized by man, that allow us to communicate the meaning we want to give to the discourse. In other words, they are elements that we use as mediating instruments. These same signs are used as scaffolding (Bruner, 2009) in the process of developing skills.

Serious games have proliferated in the last decade, with useful benefits identified in several studies (Freitas, 2017; Lope et al., 2017), but in order for them to be established as a learning strategy, the elements discussed above should be taken into account. However, there is an absence of consolidated methodological studies and proposals for the development of educational games, due to an inherent multidisciplinarity that increases the level of complexity of the studies in this sub-field.

Despite the impact of video games on contemporary society and their value by supporting and enriching the teaching and learning process of children and adolescents, there are currently few specific methodologies for the development of educational games. Two deficiencies were identified by Lope et al. (2017) as critical for current development frameworks:

1. Methodologies for designing educational video games do not provide exhaustive tools or procedures for designing and evaluating the quality and elements that the game works with. For example, the story of a game, as an axis of support and enhancement of the game's mechanisms and rules, is almost never discussed.

2. Such methodologies also ignore the multidisciplinary nature of the team that develops a video game, which goes far beyond developers and computer programmers.

In the quest to contribute to this fertile study environment, this work cooperates in the areas of pedagogy, educational technologies and computer systems development, engineering and software quality. Based on an educational software in development, we seek to develop an effective methodology for the evaluation phase in the development process itself, so that some flaws are identified and overcome, as well as collaborating both for the identification of structural errors and bugs, and for the measurement of level of satisfaction and effectiveness of an educational game in the classroom.

Instead of adopting the ADDIE method (Analysis, Design, Development, Implementation, Evaluation) or the cascading model (Allen, 2006), which can bring refactoring problems, since usability evaluation is usually performed at the end of the game's development, we opted for the modular assessment approach (Busch et al., 2015; Hermawati & Lawson, 2016), as it allows iterative and comprehensive development, both in terms of usability / user experience and portability of mechanics to other projects. A modular approach is then adopted for heuristics, so that one module is dedicated to identifying usability problems that are likely to be encountered using general heuristics and another module is dedicated to identifying domain-specific usability problems.

Methodology

This research, of applied characteristic and mixed approach (quanti-qualitative), was carried out based on a systematic review of the literature on usability already published (Vieira, Silveira & Martins, 2019). In the systematic review, several methods of conducting a usability test were identified; Lauesen (2005) highlights the four most relevant, low-cost and highly efficient ones, from which two were selected.

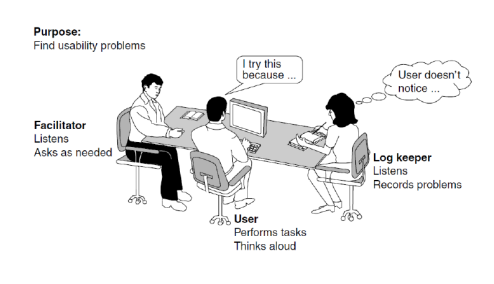

The first is the Think-aloud Test, in which the user (test subject or test user) must perform tasks using the system or a mock-up and describe (preferably aloud) what he is doing and why. The second is the Test Team, best conducted by two or three people: a facilitator, who talks to the user and guides him; a rapporteur, who notes what is happening, in particular the problems encountered by users; and, finally, a third person who watches how the test evolves and helps the other two if the need arises.

Once the usability test methods were identified, data collection was organized in two phases: in the first, the method adopted was a merger of the Think-aloud Test with the Test Team: the user, accompanied by a facilitator and a reporter, interacted with the educational game, being encouraged to explain, aloud, every action performed while playing. The entire process was recorded using a voice recorder and cameras, as shown in Figure 1.

Source: Authors, 2020

The evaluations were carried out by two different sets of users. The first consisted of three specialist users, university professors from different areas of knowledge: Education and Pedagogy, Chemistry and Computer Science. The choice was guided by the nature of the game to be tested, in this case a virtual RPG for teaching the Periodic Table of chemical elements. This strategy is recommended by Nielsen, who indicates that carrying out tests with expert users improves the quality of the data obtained on the human-machine interface. In addition, it is a recommended strategy to capture recommendations on the playfulness and content of the game to be evaluated. A second group of users also performed the test. This group consisted of four potential users, non-specialists in the areas related to the educational game, representing the end users. The choice of two groups of users followed the recommendation of the Nielsen Group, which argues that there is no reason to interview more than three users from different groups, with five being the ideal number: "the best results come from the test of no more than 5 users and running as many small tests as you can afford." (Nielsen et al., 2000, para. 1).
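The five-user recommendation is usually justified with the problem-discovery model published by Nielsen and Landauer, in which the expected share of usability problems found by n testers is 1 - (1 - L)^n. The sketch below is only illustrative: the default L = 0.31 is the average discovery rate Nielsen reports for his own projects, not a value measured in this study, and the function name `proportion_found` is hypothetical.

```python
def proportion_found(n_users: int, discovery_rate: float = 0.31) -> float:
    """Expected share of usability problems found by `n_users` testers.

    Implements 1 - (1 - L)^n, where L (`discovery_rate`) is the probability
    that a single tester uncovers any given problem. The default of 0.31 is
    an assumed typical value, not data from the E-GUESS study.
    """
    if n_users < 0:
        raise ValueError("n_users must be non-negative")
    if not 0.0 <= discovery_rate <= 1.0:
        raise ValueError("discovery_rate must lie between 0 and 1")
    return 1.0 - (1.0 - discovery_rate) ** n_users


if __name__ == "__main__":
    # Group sizes used in this study (3 experts, 4 potential users, 7 overall)
    # next to Nielsen's suggested 5.
    for n in (3, 4, 5, 7):
        print(f"{n} users -> {proportion_found(n):.0%} of problems (model estimate)")
```

Under these assumptions the curve flattens quickly, which is the usual argument for running several small tests instead of one large one.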

All participants had an initial moment of free exploration of the game, with encouragement for verbalization. The equipment available to the testers consisted of desktop computers with an Intel® Core™ i5 4440 processor, Intel® HD Graphics 4600 and 4 GB of RAM, with keyboard and mouse, in an acoustically isolated room. They then undertook a guided exploration of the game environments, supported by a checklist, and were again encouraged to verbalize what they were doing. The entire evaluation was recorded on video by two cameras, one placed behind and another in front of the users, in order to follow the user's progress with the game and to record their body and facial expressions. Each video was subsequently analyzed, taking as its starting point the path taken by the user and the premise of Nielsen's usability principles. During the analysis of the behavior and verbalizations of each participant, the notes taken by the reporter during the observation were added to verify the difficulties, facilities and errors of the system during the guided exploration of the game.

After each evaluation session, a Nielsen questionnaire with 19 questions, containing the 10 Usability Heuristics Applied to Video Games (Joyce, 2019) alongside questions about the game itself, was applied to identify usability and educational content problems. Problems were rated on a scale from 0 to 4, whose levels included "cosmetic problem" (low severity), "minor usability problem" (medium severity), "major usability problem" (high severity) and, at its highest level, "usability catastrophe" (very high severity), in addition to an option 'I don't know how to answer'. All data were collected between July and December 2019.

In the second phase of the work, a specific heuristic was developed to evaluate the usability of educational games using as parameters the analysis of the data collected in the first phase and the GUESS tool (Phan, Keebler & Chaparro, 2016). From the data triangulation: the recordings and notes made during the evaluations, added to the information collected by the Nielsen questionnaire and the evaluation parameters proposed by the GUESS tool, the Educational Game User Experience Satisfaction Scale (E-GUESS) was developed.

Results and Discussion

Fisch (2005) argues that perhaps one of the biggest impacts generated by an educational game occurs 'off-line', long after the computer is turned off. Computer games can provide an interesting context for the introduction of new concepts, topics and skills that children can continue to explore later on through readings, discussions or off-line activities. However, guaranteeing these benefits is a problem: how to validate and evaluate an educational game? Software measurements are considered important to improve the software process. A common feeling expressed by those who try to break free from the bad aspects of software design (and games) is: "Ask the user for their opinion" (Root & Draper, 1983). Based on this premise, in this section we will describe the results of the analyses we carried out based on "user opinions" and how we apply the knowledge to organize an instrument that allows us to evaluate educational games (E-GUESS), using the GUESS questionnaire (Phan, Keebler & Chaparro, 2016).

Gunther (2003), in his work on how to design a questionnaire, argues that there are three ways to understand human behavior: (1) observing the behavior that occurs naturally in the real world; (2) creating artificial situations and observing the behavior in tasks defined for those situations; (3) asking people about what they do (did) and think (thought). Each of the three techniques for conducting empirical studies (observation, experiment and survey) has advantages and disadvantages. We directed our data collection to satisfy at least two of the three paths: we created an artificial situation and observed the behavior of evaluators in the tasks, and we asked what they did and thought.

When developing the research that led to the E-GUESS, two principles that Gunther (2003) addresses were also taken into account: the conceptual basis, which determines the concepts to be investigated, and the target population, or sample. The instrument developed has a dual function: game evaluation tool and support framework for game development. The target audience was, as stated in the description of the methodology, divided into two samples, potential users and expert users. As expected, each interviewee issued comments according to their experience and area of expertise, which was confirmed in the analysis of the interviews. Experts in chemistry, education and computing verbalized comments more aligned to their area of expertise. The seven evaluators found or reported about 100 problems in total. On average, each evaluator found 15 problems.

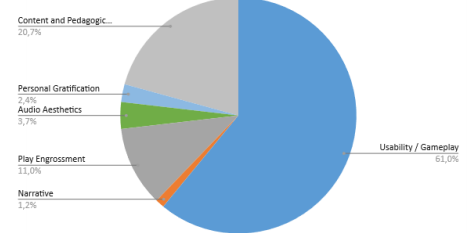

After the initial analysis, we found that unique problems were identified by different evaluators, most of them being equivalent to the Usability/Gameplay category of GUESS. To have an overview of the types and severity of problems, we used Nielsen's five-point severity rating scale (1994). Not all categories presented by GUESS were identified through the evaluations, just as new categories were also identified that were not originally covered by that instrument.

The application of the severity rating scale showed that, among the problems identified by the heuristic assessment, there were 2 problems of very high severity, both linked to the Usability/Gameplay category, 11 problems of high severity, around 29 less serious problems and 44 minor problems.

Source: Authors, 2020
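The severity distribution reported above can be tallied with a few lines of code. This is a minimal sketch, not part of the original analysis: the labels are shorthand for the four severity levels mentioned in the text, the count of 29 is given there only as approximate, and the helper name `severity_shares` is hypothetical.

```python
# Counts of rated problems as reported in the text.
severity_counts = {
    "very high": 2,
    "high": 11,
    "less serious": 29,  # reported as "around 29", so treat as approximate
    "minor": 44,
}


def severity_shares(counts):
    """Return each severity level's share of all rated problems."""
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no problems to summarize")
    return {label: count / total for label, count in counts.items()}


if __name__ == "__main__":
    total = sum(severity_counts.values())
    print(f"rated problems: {total}")
    for label, share in severity_shares(severity_counts).items():
        print(f"{label:>12}: {share:.1%}")
```

With these figures the rated problems add up to 86, and roughly half of them fall in the lowest severity level.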

During the evaluation, the most easily identifiable gameplay problems were related to the game's usability and mobility. In particular, problems with information display and navigation difficulties were observed. If, on the one hand, evaluating these aspects in games is similar to normal usability assessments of utility software, evaluating the gameplay of a Serious Game is more complex. If we consider gameplay as an educational characteristic of a game, we can consider that the main objective of a serious game is to be educational, to teach someone, with all the incorporated elements designed to promote learning. That said, the overall educational gameplay of a game comes from the value of each attribute in the different gameplay characteristics presented. It must be adequate enough that a player's experiences and feelings when playing are as good as possible and best suited to the educational nature of the game. What was discovered at this stage of the research was the intrinsic problem of combining gameplay with educational potential, confirming the statement by Czauderna & Guardiola (2019): “The field of game design for educational content lacks a focus on methodologies that merge gameplay and learning. […] they neglect the unfolding of gameplay through players’ actions over a short period of time as a significant unit of analysis; they lack a common consideration of game and learning mechanics; and they falsely separate the acts of playing and learning” (p. 207).

One point to be taken into account is that certain factors analyzed by usability assessments are not well explored individually and academically. In this regard, there are even fewer references when treated in educational games: what is good gameplay? What is the role of gameplay in the teaching-learning process? What is the commitment of a good narrative in the teaching of educational content? Can fictional stories be presented for teaching historical content? What is the importance of audio in maintaining the attention and interest of the student user? These questions arose when we analyzed the participations of the user-evaluators when operating the educational game used in the research and were consolidated in the final analysis of the usability evaluations, leading us to organize categories and items related to the answers obtained in the tests, which resulted in the elaboration of a new instrument from the Game User Experience Satisfaction Scale – GUESS (Phan, Keebler & Chaparro, 2016).

E-GUESS

Based on the usability tests of the empirical phase of the research, the E-GUESS (Educational GUESS) was developed, an instrument designed specifically for the evaluation of educational games. GUESS was chosen as a result of a literature review conducted by Vieira, Silveira & Martins (2019). GUESS was developed and validated based on the evaluations of more than 450 unique video game titles in several popular genres. Thus, it can be applied to many types of video games in the industry, as a way of assessing which aspects of a game contribute to user satisfaction and as a tool to help users analyze their gaming experience. However, as stated by its authors, the games evaluated in their research mostly consisted of popular commercial games that were designed purely to entertain. As a result, it was not known how applicable GUESS would be in evaluating serious games (e.g., educational) (Phan, Keebler & Chaparro, 2016, p. 1239). Based on this observation by the authors, we use this tool as a starting point for formulating a new tool that meets the needs of an educational game.

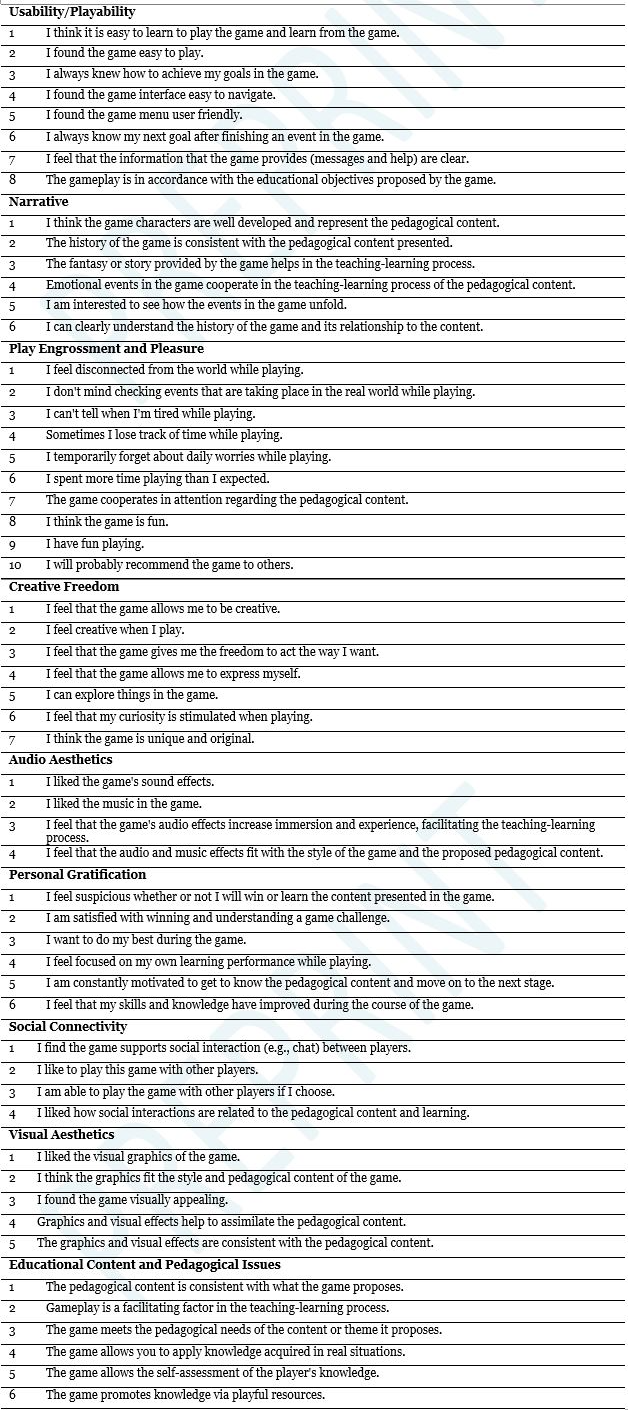

With Educational-GUESS, we introduced changes aimed at pedagogical issues and educational content, in addition to simplifying and reducing factors considered redundant in the original GUESS. The instrument also seeks to elucidate important points in the development of an educational game by allowing notes that are easily ignored during the design phase. The following table shows the modified categories of GUESS and E-GUESS. The comparative table of E-GUESS and GUESS is available in Annex I.

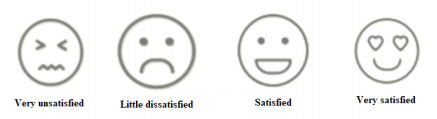

As a facilitator, for the evaluation of each item in the 9 categories, a scale of emojis was created to replace the original 1 to 4 grades from GUESS. Figure 3 shows the scale of emojis.

Source: Authors, 2020
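One way an emoji scale of this kind could be turned into per-category scores is sketched below. Everything in it is an assumption made for illustration: the four emojis and their mapping to the grades 1 to 4 are hypothetical (the actual scale appears only in the figure), the function name `category_means` is invented, and the example uses only category names mentioned in the text.

```python
from statistics import mean

# Hypothetical four-level emoji scale mapped onto grades 1 to 4; the emojis
# actually used by E-GUESS are shown only in the article's figure.
EMOJI_SCORES = {"😞": 1, "😐": 2, "🙂": 3, "😄": 4}


def category_means(responses):
    """Return the mean numeric score per category.

    `responses` maps a category name to the list of emojis an evaluator
    chose for the items of that category.
    """
    means = {}
    for category, emojis in responses.items():
        if not emojis:
            raise ValueError(f"no ratings given for category {category!r}")
        means[category] = mean(EMOJI_SCORES[emoji] for emoji in emojis)
    return means


if __name__ == "__main__":
    # Made-up answers from a single evaluator, for demonstration only.
    example = {
        "Usability/Gameplay": ["🙂", "😐", "😄"],
        "Content": ["😄", "🙂"],
        "Pedagogical Questions": ["😐", "😐"],
    }
    for category, score in category_means(example).items():
        print(f"{category}: {score:.2f}")
```

Keeping the mapping in a single dictionary makes it easy to swap in the real emojis or a different number of levels without touching the scoring logic.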

In addition to simplifying topics and categories, for example by combining the Engrossment and Pleasure factors, we added elements related to the objective of the educational game, its ability to combine gameplay with learning, and issues related to content and pedagogical needs.

Some categories did not come directly from the collected data but were identified by observing the behavior of the user-evaluators and by critically examining other educational games available for download. These are Creative Freedom and Social Connectivity. Although they are not present in the evaluated game, we identified them as essential in several socio-interactionist approaches adopted in educational games. In addition to these, the Content and Pedagogical Questions categories were created to evaluate the teaching and learning aspects present in the game.

As presented by Squire (2011), a good educational game is vital for the student to remain engaged, excited and interactive, to solve problems and to learn school content while playing. Based on this premise and on the observations made during the usability evaluation that we conducted, we consider it relevant to highlight the following guidelines for the process of creating and developing educational games:

- the development must be a collaborative work by designers, programmers and educators;

- the game should be fun; school / academic content should not compete with the fun factor but be intrinsic to it;

- the stages of the game must seek aesthetic and gameplay sophistication, with different levels of challenge, while ensuring that learners do not give up learning because they cannot operate or advance in the game;

- the game must provide connection to social networks and group interactions;

- the player must remain interested but also be challenged in their creativity.

Overcoming the supposed bipolarity between "fun" and "educational" is what the Content and Pedagogical Questions categories seek to identify and guide. The validity of E-GUESS must be confirmed in new research, with a larger and more diverse sample, so that each factor can be analyzed in detail.

CONCLUSION

Among the contributions to the field of technology-mediated education provided by the research that gave rise to this article, we highlight, in addition to the creation of the E-GUESS instrument, a systematic review on the evaluation of educational games, published by Vieira, Silveira & Martins (2019).

While studying the literature, we found that factors such as Usability, Integration, Narrative and Gratification demand more studies on their impact on digital educational games, since little bibliography is available and, in some cases, such as audio factors in video games, none was found. The very approach of separating and categorizing such factors deserves further discussion, as some are intrinsically connected when applied in an educational game.

This statement agrees with what Czauderna and Guardiola (2019) present in their research: designing educational games is a complex venture because they are expected to fulfil two requirements that can be seen as contradictory. Educational games should be as appealing as commercial games designed solely for entertainment, and they should provide their players with a learning experience related to educational domains. That conclusion matches the guidelines previously proposed by Squire (2011) and the conclusions drawn from our evaluations.

After identifying the absence of a specific instrument to assess the usability of educational games, we carried out the field investigation using GUESS and, based on the analysis and evaluation of the collected data, we elaborated E-GUESS. We disseminated it to the community of educational technology researchers and electronic game developers as an instrument to be analyzed and improved, and it also offers a set of heuristics for the production of digital educational games.

Another contribution of the research was the usability analysis performed for the educational game used in data collection. This game, which deals with the theme Periodic Table of Chemistry and is in the validation phase, received valuable contributions for adjustments in its gameplay. The problems identified and elucidated during the research will assist groups developing educational games at the institution where the data were collected, helping them avoid repeating errors and develop projects more efficiently.

For future work, we suggest validity studies to improve E-GUESS using different educational games. It is also important to validate it in games that cover educational content of different school levels, since usability factors differ among young children, pre-teens, teenagers, young people and adults. Cross-cultural studies will also be needed to verify the stability of the categories in different cultures.

We thank the Research Support Foundation of the State of Minas Gerais (FAPEMIG) for funding part of the research and the development of the analyzed game.

REFERENCES

Allen, W. C. (2006). Overview and evolution of the ADDIE training system. Advances in Developing Human Resources, 8(4), 430-441. https://doi.org/10.1177/1523422306292942

Azevedo, D., Silveira, A. C., Lopes, C. O., Amaral, L. O., Goulard, I. C. V., & Martins, R. X. (2018). Letramento digital: uma reflexão sobre o mito dos 'Nativos Digitais'. Renote. Revista Novas Tecnologias na Educação, 16, 1-11. https://doi.org/10.22456/1679-1916.89222

Barbacci, M., Klein, M., Longstaff, T., & Weinstock, C. (1995). Quality Attributes (CMU/SEI-95-TR-021). http://resources.sei.cmu.edu/library/asset-view.cfm?AssetID=12433. https://doi.org/10.21236/ADA307888

Bruner, J. (2009). Interaction de guidage, étayage et développement. Université de Genève. http://www.unige.ch/fapse/SSE/teachers/crahay/PDA/Bruner

Busch, C., Claßnitz, S., Selmanagić, A., & Steinicke, M. (2015). Developing and Testing a Mobile Learning Games Framework. Electronic Journal of e-Learning, 13(3), 151-166.

Czauderna, A., & Guardiola, E. (2019). The Gameplay Loop Methodology as a Tool for Educational Game Design. Electronic Journal of e-Learning, 17(3), 201-227. https://doi.org/10.34190/JEL.17.3.004

Freitas, S. (2017). Are Games Effective Learning Tools? A Review of Educational Games. Educational Technology & Society, 21(2), 74-84.

Fisch, S. M. (2005). Making Educational Computer Games “Educational”. Proceedings of the 2005 conference on Interaction design and children (pp. 56-61). ISBN: 1-59593-096-5. https://doi.org/10.1145/1109540.1109548

Garcia-Ruiz, M. A., Xu, S., Santana-Mancilla, P. C., & Iniguez-Carrillo, A. L. (2020). Experiences in Teaching and Learning Video Game Testing with Post-mortem Analysis in a Game Development Course. In Proceedings of EdMedia + Innovate Learning (pp. 597-602). Online, The Netherlands: Association for the Advancement of Computing in Education (AACE). https://www.learntechlib.org/primary/p/217358/

Gunther, H. (2003). Como Elaborar um Questionário. Laboratório de Psicologia Ambiental Universidade de Brasília. Série: Planejamento de Pesquisa nas Ciências Sociais, Nº 01. Instituto de Psicologia.

Hawlitschek, A., & Joeckel, S. (2017). Increasing the effectiveness of digital educational games: The effects of a learning instruction on students learning, motivation and cognitive load. Computers in Human Behavior. Elsevier. https://doi.org/10.1016/j.chb.2017.01.040

Hermawati, S., & Lawson, G. (2016). Establishing usability heuristics for heuristics evaluation in a specific domain: Is there a consensus? Applied ergonomics, 56, 34-51. https://doi.org/10.1016/j.apergo.2015.11.016

Heinecke, W., & Adamy, P. (2010). Evaluating Technology in Teacher Education: Lessons from the Preparing Tomorrow's Teachers for Technology. Research Methods in Educational Technology. Information. ISBN-10: 1607521350. ISBN-13: 978-1607521358.

IEEE (2014). IEEE Standard for Software Quality Assurance Processes. IEEE Std 730-2014 (Revision of IEEE Std 730-2002), v.1, pp. 1-138, 13 June 2014. https://doi.org/10.1109/IEEESTD.2014.6835311

Joyce, A. (2019). 10 Usability Heuristics Applied to Video Games. Nielsen Norman Group. https://www.nngroup.com/articles/usability-heuristics-applied-video-games/

Junior, A. M. (2006). O videogame nas aulas de educação física. Grupo de Pesquisas em Educação Física Escolar da FEUSP/CNPq.

Kielgast, S., & Hubbard, B. A. (1997). Valor agregado à informação: da teoria à prática. Ciência da informação, 26(3). https://doi.org/10.1590/S0100-19651997000300007

Lamb, R. L., Annetta, L. A., & Firestone, J. B. (2018). A meta-analysis with examination of moderators of student cognition, affect, and learning outcomes while using serious educational games, serious games and simulations. Computers in Human Behavior, 80, 158-167. https://doi.org/10.1016/j.chb.2017.10.040

Lauesen, S. (2005). User Interface Design: A Software Engineering Perspective. Pearson/Addison-Wesley. ISBN: 0321181433, 9780321181435

Lévy, P. (2003). A inteligência coletiva: por uma antropologia do ciberespaço. 4.ed. São Paulo: Loyola.

Lope, R. P., Arcos, J. R. L., Medina-Medina, N., Paderewski, P., & Gutiérrez-Vela, F. L. (2017). Design Methodology for Educational Games based on Graphical Notations: Designing Urano. Entertainment Computing, 18, 1-14. https://doi.org/10.1016/j.entcom.2016.08.005

Nielsen, J. (1994). Usability inspection methods. In Conference companion on Human factors in computing systems, (pp. 413-414). https://doi.org/10.1145/259963.260531

Nielsen, J. (2000). How to Conduct a Heuristic Evaluation. https://www.nngroup.com/articles/how-to-conduct-a-heuristic-evaluation/

Phan, M., Keebler, J., & Chaparro, B. (2016). The Development and Validation of the Game User Experience Satisfaction Scale (GUESS). Human Factors: The Journal of the Human Factors and Ergonomics Society, 58. https://doi.org/10.1177/0018720816669646

Ray, B. B., Powell, A., & Jacobsen, B. (2014). Exploring Preservice Teacher Perspectives on Video Games as Learning Tools. Journal of Digital Learning in Teacher Education, 31(1), 28-34. https://doi.org/10.1080/21532974.2015.979641

Root, R. W., & Draper, S. (1983). Questionnaires as a software evaluation tool. Proceedings of CHI 83 (pp. 83-87). New York, NY: ACM. https://doi.org/10.1145/800045.801586

Squire, K. (2011). Video Games and Learning: Teaching and Participatory Culture in the Digital Age. Technology, Education–Connections (The TEC Series). Teachers College Press. ISBN-10: 0807751987. ISBN-13: 978-0807751985.

Smolka, A. L. B. (2000). O (im)próprio e o (im)pertinente na apropriação das práticas sociais. Cadernos Cedes, 50, 26-40. https://doi.org/10.1590/S0101-32622000000100003

Sweller, J. (2002). Visualisation and instructional design. In R. Ploetzner (Ed.), Proceedings of the International Workshop on Dynamic Visualizations and Learning (pp. 1501-1510). Tübingen, Germany.

Vieira, E. A. O., Silveira, A. C., & Martins, R. X. (2019). Heuristic Evaluation on Usability of Educational Games: A Systematic Review. Informatics in Education, 18, 1-20. https://doi.org/10.15388/infedu.2019.20

Whitson, J. R. (2020). What Can We Learn from Studio Studies Ethnographies? A “Messy” Account of Game Development Materiality, Learning, and Expertise. Games and Culture, 15(3), 266-288. https://doi.org/10.1177/1555412018783320

Yeni, S., & Cagiltay, K. (2017). A heuristic evaluation to support the instructional and enjoyment aspects of a math game. Program: electronic library and information systems, 51(4), 406-423. https://doi.org/10.1108/PROG-07-2016-0050

Zhonggen, Y. (2019). A Meta-Analysis of Use of Serious Games in Education over a Decade. Hindawi International Journal of Computer Games Technology. Article ID 4797032. https://doi.org/10.1155/2019/4797032

Notes

Author notes