Monograph

Analytics for Action: Assessing effectiveness and impact of data informed interventions on online modules

Analytics in action: Evaluating the effectiveness and impact of evidence-based interventions in online courses


RIED. Revista Iberoamericana de Educación a Distancia, vol. 23, núm. 2, 2020

Asociación Iberoamericana de Educación Superior a Distancia

Received: 17 January 2020

Accepted: 8 February 2020

How to reference this article: Evans, G., & Hidalgo, R. (2020). Analytics for Action: Assessing effectiveness and impact of data informed interventions on online modules. RIED. Revista Iberoamericana de Educación a Distancia, 23(2), pp. 103-125. doi: http://dx.doi.org/10.5944/ried.23.2.26450

Abstract: Investigating the effectiveness of learning analytics is a major topic of research, with a recent systematic review finding 689 papers in this field (Larrabee Sonderlund et al., 2019). Few of these (11 out of 689) highlight the potential of interventions based on learning analytics. The Open University UK (OU) is one of the few institutions to systematically develop and implement a learning analytics framework at scale. This paper reviews the impact of one part of this framework, the Analytics for Action (A4A) process, focusing on the 2017-18 academic year and reviewing both feedback from module teams and the interventions arising from the process. The A4A process includes hands-on training for staff, followed by data support meetings with educators while the course is live to students. The aim is to help educators make informed, evidence-based interventions that aid student retention and engagement. Findings from this study indicate that participants are satisfied with the training and that the data support meetings help provide new perspectives on the data. The scope and nature of the actions taken by module teams vary widely, ranging from no intervention at all to interventions spanning multiple presentations. In some cases, measuring the impact of the actions taken will require data analysis from further presentations. The paper also presents findings indicating room for improvement in the follow-up of the agreed actions, in the support given to module teams to implement such actions, and in the final evaluation of impact on student outcomes.

Keywords: learning analytics, analytics framework, learning design, interventions, evidence, impact.

Summary: The effectiveness of learning analytics is a highly relevant topic in the literature on the subject. A recent systematic review found 689 articles in this field (Larrabee Sonderlund et al., 2019). However, only 11 of the 689 articles highlight the potential of interventions based directly on the analysis of the available data. The Open University UK (OU) is one of the few institutions that systematically develops and implements a framework for using analytics at scale. This paper reviews the impact of one part of this framework: the Analytics for Action (A4A) process. Using data from the 2017-18 academic year, we review participants' comments and the interventions agreed as part of the process. The A4A process involves practical training for staff, followed by successive meetings in which the data are discussed while the course is available online. The aim of the process is to help educators plan and carry out evidence-based interventions in order to improve student retention and satisfaction. The results of this study indicate that participants are satisfied with the training and that the support meetings are helping to provide new perspectives on the data. The scope and nature of the interventions vary widely, from no intervention to interventions spanning multiple presentations (cohorts) of the course. In some cases, measuring the real impact of the actions taken will require analysing data from further presentations. The work also presents findings indicating that there is still room to improve the follow-up of the agreed actions, the support provided to academic teams to implement such actions, and the final evaluation of the impact on student outcomes and satisfaction.


The Open University (OU) is the largest university in the UK, offering high-quality higher education to its students via distance learning. Since its creation in 1969, over 2 million students from 157 countries worldwide have registered to study at the OU.

The OU offers undergraduate and postgraduate degrees. All degrees, except some research doctorates, are studied through distance learning. The curriculum is organised into modules (courses).

Each module is produced and managed by a multidisciplinary team, known as the Module Team (MT), led by an academic: the Module Team Chair (MTC). A typical undergraduate module is worth 30 or 60 credits, and a typical Bachelor's degree is awarded when the student has completed 360 credits. All module content and activities are available online via the OU's Virtual Learning Environment (VLE), a customised version of Moodle. Some modules still provide printed material, but this content is always also available online. Modules are assessed using a combination of quizzes, Computer Marked Assessments (iCMAs), Tutor Marked Assessments (TMAs), End of Module Assessments (EMAs), projects and exams.

The OU has been systematically using learning analytics to improve student outcomes since at least 2014, when it initiated its Learning Analytics programme. One of the main components of the programme was the Analytics for Action (A4A) process, which promoted the systematic collection and analysis of data with the objective of improving the design of the University's modules and, in turn, students' outcomes, using the A4A evaluation framework to structure the process (Rienties et al., 2016). After a two-year pilot, a decision was made to mainstream the A4A approach into business-as-usual activity in the 2016-17 academic year. The Learning Design team (LDT) was selected to run the process, as its members had expertise in Learning Design, were familiar with the data and, in most cases, had already worked on the design of the participating modules.

The A4A process included training for participating staff and a series of data support meetings (DSMs) between academics, support staff, data analysts and learning designers. At these meetings, the available data were reviewed and specific actions were agreed to address the issues found. The training covered the basics of the A4A framework and the use of the basic data tools.

In a typical data support meeting, the data reviewed include the Key Performance Indicators (KPIs), the profile and study records of the students registered on the module, assessment submissions and results, retention and withdrawal data, and students' interaction with the VLE. Additional data could also be included for discussion at the meeting. For each meeting, the LDT prepared an analysis of the data and circulated a comprehensive report afterwards. These reports contained a summary of the data and the discussions, plus recommendations and possible actions for both the MT and the LDT.

In the 2017-18 A4A cycle, the LDT provided support to 49 modules across all faculties, reaching over 35,000 students. This represented an increase of 69% in the number of modules and of 40% in the total student population reached, compared with the previous cycle (2016-17). A total of 136 data support meetings were held, and 20 training sessions were delivered to a total of 128 staff. Of the 49 modules included in A4A, 43 were offered three DSMs during their presentation. The remaining six modules were offered an alternative 'light touch' process based on surgery-style sessions. As no formal records are kept from these surgery-style sessions, the data from modules in that modality are not included in this report.
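The 69% and 40% increases quoted above imply approximate figures for the 2016-17 cycle. The sketch below back-calculates them, assuming both increases are relative to the previous cycle and treating "over 35,000" as exactly 35,000, so the student figure is only an approximate lower bound. The helper name `implied_previous` is hypothetical.

```python
def implied_previous(current: float, pct_increase: float) -> float:
    """Back out a previous-cycle value from the current value and a relative % increase."""
    return current / (1 + pct_increase / 100)

# 2017-18 figures as stated in the text; 35,000 is a lower bound ("over 35,000")
modules_2017_18 = 49
students_2017_18 = 35_000

prev_modules = implied_previous(modules_2017_18, 69)    # 49 / 1.69, about 29 modules
prev_students = implied_previous(students_2017_18, 40)  # 35,000 / 1.40, about 25,000 students

print(f"Implied 2016-17 modules:  {prev_modules:.0f}")
print(f"Implied 2016-17 students: {prev_students:,.0f}")
```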

LITERATURE REVIEW

There is a wealth of studies looking at learning analytics in its broadest sense, and learning analytics is now embedded in the plans of numerous higher education institutions worldwide. A recent systematic review (Larrabee Sonderlund et al., 2019) found 689 papers relating to the effectiveness of learning analytics, but only 11 that highlighted the potential of interventions. As noted in the review, each of the final papers analysed in depth takes a similar approach: using analytics to identify at-risk students and disseminating that information to students and tutors (Larrabee Sonderlund et al., 2019).

The OU's approach to ongoing analysis and the development of interventions is based on the Analytics for Action framework (Rienties et al., 2016) and uses the Community of Inquiry methodology, initially developed by Garrison et al. (2000; 2007), as a guiding principle for categorising types of intervention.

Looking more closely at the existing literature on learning analytics programmes, studies investigate impact at many levels and with differing results. Drachsler et al. (2014) look at the impact across the whole Dutch education system, whereas others, such as Dawson et al. (2017), examine the impact of a specific learning analytics programme on student retention, using a predictive model to identify at-risk students and make supportive interventions. Their work found a positive association between the intervention and retention, but statistical methods found little to no effect of the intervention. Kostagiolas et al. (2019) surveyed students at a Greek university to explore the relationship between student satisfaction, self-efficacy and retention. The work found a correlation between student satisfaction, self-efficacy and student retention, while also evaluating how well academic information resources fulfil students' information needs. Returning to the OU context, Rienties and Toetenel (2016) used learning analytics to analyse the impact of different Learning Design approaches on student retention, finding that student behaviour was strongly predicted by the learning design of the course and that communicative activities and social learning were particularly strong predictors of student success.

A number of other studies have found a link between specific course-level interventions and improved retention or student performance (Fritz, 2011; Kim et al., 2016; Lu et al., 2017). Lu et al. (2017) found that an experimental group, whose instructors received analytics reports to inform their advice to students, performed 17.4% better than a control group for whom no such reports were provided. Kim et al. (2016) investigated student use of learning analytics dashboards and found that lower-performing students were more motivated by using the dashboard than higher-performing students. Finally, Fritz (2011) evaluated a "check my activity" (CMA) tool that enables students to compare their LMS engagement with that of other students. The evaluation found that 91.5% of students used CMA at least once and that, compared with students who did not use the tool during the semester, these students were 1.92 times more likely to earn a grade of C or above.

As Rienties et al. (2016) note in their paper on three case studies of learning analytics interventions, one of the largest challenges for learning analytics research and practice is how to put the power of learning analytics into the hands of teachers and administrators. This raises the question of adoption at both institutional and practitioner levels. Ferguson et al. (2015) identify that implementing analytics requires changes in practice across educators, learners, support staff, library staff, administrators and IT staff. Their study also links back to findings from 40 years ago, highlighting that "Researchers should get clients politically, emotionally, and financially committed to the outcome of the research. They are then more likely to take notice of its results" (McIntosh, 1979, cited in Ferguson et al., 2015). Dawson et al. (2018) unpick this further, drawing on complexity theory to argue that institutions need to implement learning analytics with an awareness of both the complexity of the institution and the change that implementing learning analytics will bring about.

METHODS

Aim and objectives. Research questions

Our objective with this review was to answer the following three research questions:

- RQ1. Do the DSMs meet the expectations of the faculty staff involved in the process?

- RQ2. Are faculty staff satisfied with the content and delivery of the training sessions?

- RQ3. Is there any measurable impact of the actions taken after advice provided to faculty staff at the DSMs?

Methodological approach

In order to answer the research questions above, there were three key activities undertaken to provide the required evidence:

For the first question (RQ1), relating to faculty staff's expectations of the DSMs: to evaluate whether the meetings matched these expectations, we invited the faculty staff involved (usually the MTC and the Curriculum Manager) to complete an anonymous online survey after the final support meeting. We received 17 responses in total, from 13 MTCs and 4 Curriculum Managers. The survey included Likert-scale questions as well as free-text questions.

For the second question (RQ2), relating to satisfaction with the training sessions: to evaluate the quality and relevance of the training sessions, we asked trainees to complete a questionnaire at the end of each session. The questionnaire comprised 10 Likert-scale questions (from 1 = strongly disagree to 5 = strongly agree) and two free-text questions. We received and analysed 106 responses.
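The training results are reported as an average score out of 5 and as the percentage of respondents who agreed or strongly agreed. A minimal sketch of how such summaries can be computed from raw 1-5 responses follows; counting 4 and 5 as "agree" is an assumption consistent with the scale described, and the response list is invented for illustration and is not the study's data.

```python
from statistics import mean

def summarise_likert(responses: list[int]) -> dict[str, float]:
    """Summarise 1-5 Likert responses (1 = strongly disagree, 5 = strongly agree)."""
    if not responses or any(r not in range(1, 6) for r in responses):
        raise ValueError("responses must be a non-empty list of integers 1-5")
    n = len(responses)
    return {
        "n": n,
        "mean_score": round(mean(responses), 2),
        # share answering 4 (agree) or 5 (strongly agree)
        "pct_agree": round(100 * sum(r >= 4 for r in responses) / n, 1),
        "pct_neutral": round(100 * sum(r == 3 for r in responses) / n, 1),
        "pct_disagree": round(100 * sum(r <= 2 for r in responses) / n, 1),
    }

# Illustrative responses only (not the study's data)
example = [5, 5, 4, 4, 4, 3, 5, 4, 2, 5]
print(summarise_likert(example))
```

Reporting the neutral and disagree shares alongside the agree share makes it easy to check statements such as "no trainee expressed dissatisfaction".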

For the third question (RQ3) relating to impact of actions taken following DSMs: after each DSM, the LDT circulated a full report that included the data covered at the meeting, the discussions about the data in the context of the module performance and the actions agreed. The actions were allocated to either the MT or the LDT.

For a sample of the participating modules (7 out of 43, all from the STEM Faculty), we reviewed the meeting reports and identified the actions agreed. We then asked MT members whether these actions had been taken and reviewed the available data for any measurable impact.

RESULTS AND DISCUSSIONS

For the DSMs: a total of 136 DSMs were held in 2017-18. A large proportion of the second meetings had to be rescheduled due to strike action at the OU. Although all meetings were successfully rescheduled, the knock-on effect of these delays meant that the third and final meeting took place much later than originally planned, reducing the chances of introducing changes within the presentation.

Most attendees were satisfied with the data support meetings. The attitude and knowledge of the facilitators were highly regarded. No respondent expressed dissatisfaction with the meetings, and only 2 gave a "neutral" response.

While 88% of all respondents agreed that the facilitators provided a clear interpretation of the data, this figure dropped to 76% when they were asked about help in identifying actions.

Table 1 shows the answers to the Likert scale questions (Q1, Q2, Q3 and Q5) in the survey:

| Q | % of all respondents (n=17) agreeing/strongly agreeing | Statement |
|---|---|---|
| 1 | 100% | “The facilitators were enthusiastic in the support meetings” |
| 2 | 88% | “The facilitators provided a clear interpretation of my module’s data” |
| 3 | 76% | “The facilitators helped me identify an issue or action that could be taken on my module” |
| 5 | 87% | “Overall I am satisfied with the support meetings” |

Question 4 was a free-text question asking whether the facilitators' interpretation offered new perspectives on the data. Table 2 shows positive and negative comments on the data interpretation provided by the facilitators.

Question 4: “Did the interpretation of the data by the facilitators provide a new perspective of the data that you hadn’t considered before?”

| Positives = 10 (62.5%) | Negatives = 6 (37.5%) |
|---|---|
| Yes (4 times) | No (4 times) |
| “In one or two cases.” | “Not really. It was interesting to review the module but there were few surprises.” |
| “Good to have examples from non-FBL modules.” | “Not really, but it was useful to talk it through.” |
| “Some new, but also reinforced earlier perspectives” | |
| “Yes, regarding early virtual learning environment (VLE) viewing of the ANALYTICS FOR ACTION guide”. | |
| “Yes, especially with respect to VLE traffic.” | |
| “Sometimes, but as both D and I spent a reasonable amount of time looking at the analytics ourselves, often they were reinforcing the same.” | |
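The percentages for Question 4 follow from the reported counts: 10 positive and 6 negative responses, 16 in total. A quick check is sketched below; the helper name `shares` is hypothetical.

```python
def shares(counts: dict[str, int]) -> dict[str, float]:
    """Convert category counts into percentages of the total number of responses."""
    total = sum(counts.values())
    return {label: 100 * c / total for label, c in counts.items()}

q4 = {"positive": 10, "negative": 6}  # counts reported for Question 4
for label, pct in shares(q4).items():
    print(f"{label}: {pct:.1f}%")
```

With 16 responses, the two shares are 62.5% and 37.5%, which sum to 100%.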

The survey also included two questions (Q6 and Q7) with open/free text answers, which asked about the aspects of the meetings that worked well and what could be improved.

Q6. What did you like about the support meeting?

When asked this question, attendees made comments related to:

- Facilitators' attitude and knowledge (10 comments). For example, “Clear presentation of data, clear understanding of what data sources meant, everyone on similar page as to what we’re trying to achieve, willingness to go beyond standard analytics for us”.

- The time and space to review the data (4 comments). For example, “The meetings were a good place to sound out ideas and different theories as to why students withdraw or don’t progress as we expected. The atmosphere was one of learning by doing, and by learning together with colleagues who were supportive” and “They provided clear guidance on the use of analytics that can be used to improve modules”.

- Data provision and visualisation (4 comments). For example, “They took on board our queries and found ways of reporting back at the next session with additional information, very helpful” and “Nice to see some visualisations of the data”.

Q7. What could we do to improve the support meetings?

When asked this question, the attendees made comments related to:

- Data systems and data provision (4 responses). For example, “The technology didn’t work all the time, so in the meeting the analytics SAS website went down. Some meetings were joined on-line, and it was difficult to see the ‘live’ data remotely”.

- Meeting preparation/customisation (4 responses). For example, “In some cases I felt a bit rushed and wished we had more time to look over and analyse the results and trends, but I do respect the idea that it is difficult to get all these meetings into the presentation diary. Having more cross-referenced data would be great – for example knowing all the characteristics of students likely to not succeed (e.g. who are our target groups for support?) could be really useful, especially at the front end of the module. Also knowing when and how to share findings with our tutors could also be considered – we need to progress in that area if we can”.

- Follow-up (3 responses). For example, “I also felt that we sometimes left the meeting without a clear plan of what we were going to do. Obviously, there wasn’t time to cover that in the meeting, but follow-up meetings between ourselves to discuss actual changes should have been built in to the approach as a ‘requirement’ for participation. Obviously, it was up to (us to) put this as an agenda on our own meetings, but these were not necessarily at a good time to fit in with the data support meetings”.

- Nothing to improve (3 responses). For example, “Can’t think of anything. Facilitators were open to suggestions and followed up with actions after each meeting”.

- Institutional constraints (2 responses). For example, “Perhaps make a bigger deal out of them, e.g. promote them a bit more with MT”.

- Facilitators' knowledge/attitude (1 response). For example, “There were some aspects that the facilitators were not clear about, for example, from what point the retention data was calculated. They also had some preconceptions about things, e.g. that a lot of participation in the Student Forum was a good thing, when, in fact, more participation was usually related to issues and problems – students have a tutor group forum for interaction with each other as well as Facebook and other self-initiated groups for mutual support”.

For the training sessions: a total of 128 staff attended the 20 regular A4A training sessions between October 2017 and July 2018. From October to December there were weekly sessions exclusively for MT members of the modules selected for A4A, followed by bi-weekly sessions open to all staff. The training gave trainees the opportunity to use the data tools on live, current data for any module of interest. Sessions were limited to a maximum of 12 trainees, and the ratio of trainees to trainers was kept at no more than 6:1. Table 3 shows the average score for each question and the percentage of respondents who strongly agreed/agreed with each statement.
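The attendance figures above translate into a few simple capacity numbers. The sketch below reads the 6:1 limit as at most six trainees per trainer (an interpretation: a limit of six trainers per trainee would not fit a 12-trainee session) and computes the average attendance per session and the minimum number of trainers a full session would need.

```python
import math

attendees = 128
sessions = 20
max_trainees_per_session = 12
max_trainees_per_trainer = 6  # the 6:1 limit, read as trainees per trainer

avg_per_session = attendees / sessions  # average attendance across the 20 sessions
# Minimum number of trainers needed when a session is full
min_trainers_full_session = math.ceil(max_trainees_per_session / max_trainees_per_trainer)

print(f"Average attendance: {avg_per_session:.1f} trainees per session")
print(f"Trainers needed for a full session: {min_trainers_full_session}")
```

On average, sessions ran at roughly half of the 12-trainee cap.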

| Statement | Average score, all faculties (out of 5) | % agree/strongly agree, all respondents |
|---|---|---|
| Q1: Learning to operate the data tools used in the training session was easy for me. | 4.30 | 91.4 |
| Q2: I found it easy to get the data tools used in the training session to do what I want them to do. | 4.11 | 86.7 |
| Q3: I found the data tools used in the training session easy to use. | 4.14 | 84.8 |
| Q4: Using the data tools from the training session will improve my teaching. | 3.96 | 72.9 |
| Q5: Using the data tools from the training session will increase my productivity. | 3.69 | 58.0 |
| Q6: Using the data tools from the training session will enhance my effectiveness in teaching. | 3.92 | 67.4 |
| Q7: Based upon my experience with the data tools used in the training session, I expect that most staff will need formal training to use these tools. | 3.80 | 66.7 |
| Q8: The instructors were enthusiastic in the training session. | 4.46 | 92.5 |
| Q9: The instructors provided clear instructions about what to do. | 4.54 | 93.4 |
| Q10: Overall, I am satisfied with the training session. | 4.50 | 91.5 |

In addition, 8.6% gave a neutral response to Q10, and no trainee expressed dissatisfaction with the training sessions. The trainers' attitude and the instructions they provided (Q8 and Q9) were highly regarded, with at least 92.5% of trainees agreeing with the corresponding statements and average scores of 4.46 (89.2% of the maximum score) and 4.54 (90.8%) for Q8 and Q9 respectively.

Trainees found the data tools easy to use and reported that they could get the tools to do what they wanted, with an average of 87.6% of trainees strongly agreeing or agreeing with the statements in Q1-Q3.
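The derived figures in the two paragraphs above can be reproduced from Table 3. The sketch below expresses an average score as a percentage of the maximum (5) and takes the unweighted mean of the "% agree" column over a group of questions; the unweighted mean is an assumption about how the 87.6% figure was obtained, and it does reproduce it.

```python
# Table 3 values: question -> (average score out of 5, % agree/strongly agree)
table3 = {
    "Q1": (4.30, 91.4), "Q2": (4.11, 86.7), "Q3": (4.14, 84.8),
    "Q4": (3.96, 72.9), "Q5": (3.69, 58.0), "Q6": (3.92, 67.4),
    "Q7": (3.80, 66.7), "Q8": (4.46, 92.5), "Q9": (4.54, 93.4),
    "Q10": (4.50, 91.5),
}

def score_as_pct(score: float, scale_max: int = 5) -> float:
    """Express an average Likert score as a percentage of the scale maximum."""
    return round(100 * score / scale_max, 1)

def mean_pct_agree(questions: list[str]) -> float:
    """Unweighted mean of the % agree column over the given questions."""
    return round(sum(table3[q][1] for q in questions) / len(questions), 1)

print(score_as_pct(table3["Q8"][0]), score_as_pct(table3["Q9"][0]))  # 89.2 90.8
print(mean_pct_agree(["Q1", "Q2", "Q3"]))  # 87.6
print(mean_pct_agree(["Q4", "Q5", "Q6"]))  # 66.1
```

The same calculation gives 66.1% for Q4-Q6, well below the Q1-Q3 figure, consistent with the observation that these questions scored consistently lower.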

Both the scores and the proportions of trainees strongly agreeing or agreeing with the statements in Q4, Q5 and Q6 are consistently lower than for the other questions. This could be because these questions relate to improving teaching, productivity and effectiveness in teaching, and a significant proportion of trainees are academic support staff rather than teachers. Two thirds of all respondents agreed with the statement in Q7, that most staff will need formal training to use these tools.

Q11 and Q12 were free text response questions, which were answered as follows:

Q11. What did you like about the training session?

When asked this question, trainees commented on the hands-on approach and practical experience of the training exercise, the instructions provided, the opportunity to explore and experiment with the data tools, the quality and relevance of the trainers' advice, the relevance of the data (particularly the fact that the data came from their own modules) and the pace of the session. A few trainees mentioned the workbook provided to each trainee and considered it a good idea. Table 4 shows the frequency of each comment.

When asked “What did you like about the training session?”:

| Quoted | Frequency |
|---|---|
| “Hands-on and practical exercises” | 24 |
| “Experiment and explore” | 24 |
| “Clear information/good response to questions” | 20 |
| “Practical exercises” | 17 |
| “Clear structure and helpful instructions” | 16 |
| “Being able to look at own modules for training / Relevant” | 12 |
| “Useful” | 8 |
| “Workbook a good idea” | 4 |

Q12. What could we do to further improve the training sessions?

When asked this question, trainees mentioned:

- ‘System issues’ (25 responses): the overall speed of the system was the most frequently mentioned issue and was considered a blocker for working with data.

- ‘Training style’ and ‘materials’ (20 responses): requests for more worked examples, for the slides to be sent after the session, and for a printout of the presentation and/or for it to be provided in advance.

- ‘Session length’ and requests for separate sessions for beginners and advanced users (12 responses), with more data analysis requested several times.

Table 5 shows the frequency of each comment:

When asked “What could we do to further improve the training sessions?”:

| Comment | Respondents |
|---|---|
| Computer/network/tools/dashboards issues | 25 |
| Training style and materials – advice and comments | 20 |
| Session length/suggestions for beginners/advanced sessions | 12 |
| Tool improvements needed/suggestions | 7 |

For the impact of the actions taken after advice provided at the DSMs:

In this section, we review the actions taken by a sample of participating MTs in relation to the discussions held within the A4A process. Each example outlines the actions taken, the results, future planned actions and how those future actions will be evaluated. Rather than summarising the data, we have decided to present the findings for each module separately. This gives the reader some insight into how the discussion unfolded for each module and demonstrates the link between the sections. It also shows the difference in scale between the interventions.

As some of the data presented contain sensitive information, they have been anonymised.

Module 1 2017

Actions taken by MT:

Assignment 1 for the following presentation (2018) was changed significantly (reduced in size and scope) based on the submission data and feedback from Associate Lecturers. The submission date was changed from week 7 to week 6, and the weighting remained the same as before (3%).

Results:

Submission rates increased by 0.8% for Assignment 1 and by 1.31% for Assignment 2.

Future planned actions:

- The MT will be doing similar work on Assignment 2 for 2019. The MT and LDT will monitor submission rates for both assignments and investigate any factors, beyond the changes introduced, that could have affected submission rates.

- For students going on to Level 2, the MT is working to secure proactive interventions from the Student Support Team (SST) to encourage registrations next year.

- The MT is also looking to get more Level 2 MTs to talk to its students. Students had some choice-point tutorials in 2018, and the MT intends to include more of these in 2019.

Assessing results by:

- Measuring the submission rates for both assignments in Module 1 2019.

- Measuring the number of Module 1 students registering for Level 2 modules in 2019 and comparing it with the 2018 results.
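Comparing submission rates across presentations raises the question of whether a change such as "an increase of 0.8%" is measured in percentage points or relative to the earlier rate; the text does not say which. The sketch below reports both. The `Presentation` record and all counts are hypothetical, since the underlying numbers are not given.

```python
from dataclasses import dataclass

@dataclass
class Presentation:
    """Hypothetical per-presentation counts for one assignment."""
    label: str
    registered: int
    submitted: int

    @property
    def submission_rate(self) -> float:
        """Submission rate as a percentage of registered students."""
        return 100 * self.submitted / self.registered

def compare(before: Presentation, after: Presentation) -> tuple[float, float]:
    """Return (change in percentage points, relative change in %)."""
    pp = after.submission_rate - before.submission_rate
    rel = 100 * pp / before.submission_rate
    return round(pp, 2), round(rel, 2)

# Hypothetical counts for illustration only
a1_2017 = Presentation("2017", registered=1000, submitted=800)
a1_2018 = Presentation("2018", registered=1000, submitted=808)
print(compare(a1_2017, a1_2018))  # (0.8, 1.0)
```

In this invented example, a 0.8 percentage-point rise corresponds to a 1.0% relative increase, so stating the basis of the comparison matters when results from 2018 and 2019 are set side by side.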

Figure 1 shows the number of Module 1 2017 students achieving a pass grade sorted by their 2018 module registration choice.

Module 2 2017

Actions taken by MT:

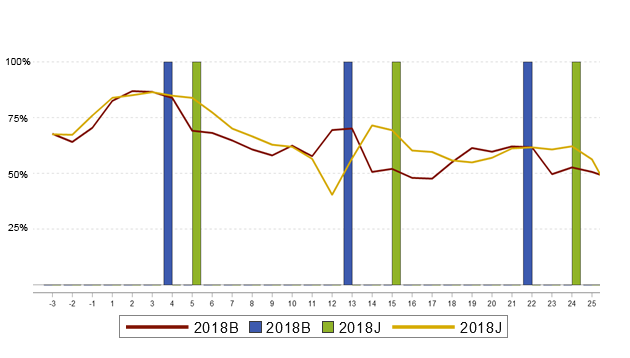

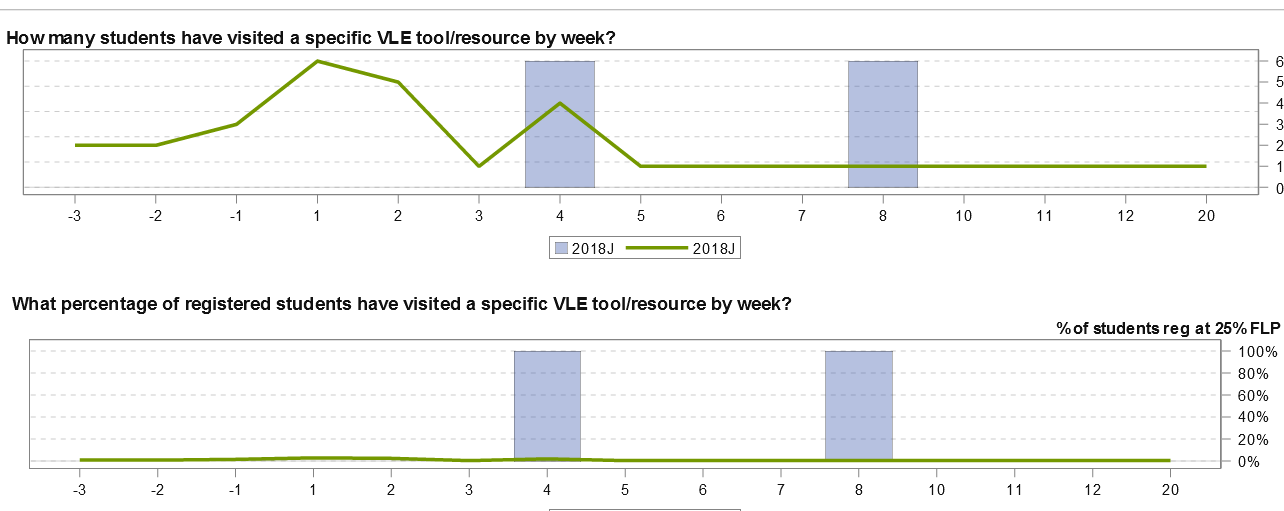

At the second data support meeting, a declining trend in VLE usage was detected between weeks 9 and 12 (the Christmas break). The LDT suggested reviewing the timing of the first two assignments, hypothesising that bringing them closer together would have a positive impact on this trend. However, changing the timing of assignments for 2018 was considered too difficult. Instead, a bridging video was introduced to “… prepare and reassure students, and to dispel some of the anxiety around anatomical terminology related to the human nervous system”.

The LDT also reported an increase in student withdrawals after the cut-off date for Assignment 01. Possible reasons were discussed, but further investigation showed that most of these students had not submitted the first assignment.

Concurrency issues were also reported, particularly with Modules 2b and 2c, as these modules have very similar assessment dates around weeks 7-8. After consideration, the MT concluded that this will cease to be an issue when Modules 2b and 2c are replaced by new modules in 2020.

Results:

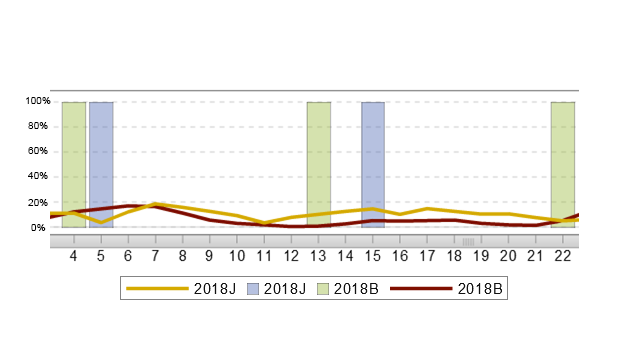

Although the bridging video was introduced in 2018, there was no change in the VLE engagement pattern for the same period that year. Figure 2 shows the decline in VLE engagement between the Assignment 01 submission date and Christmas; engagement levels bounced back but never reached pre-Christmas levels.

Module 3 2018

Actions taken by MT:

- Changed the topic-based cluster forums, the maths cluster forum and the Python (programming language) cluster forum into module-wide forums for the second presentation in 2018.

- To improve student engagement with Python content, the MT:

  - Updated some text in the Python weeks to clarify things, especially how to study Python (taking notes), and gave more specific guidance on the activities for Assignment 04.

  - Produced an additional screencast video demonstrating how to build up larger Python programs.

  - Changed Activity 3.2 in Python Activity 1 to be about explaining a program (rather than writing one) and included the response to this activity as an extra question in Assignment 02.

  - Added a question 10 to the exam (and the specimen exam paper) about explaining a brief Python program related to the additional screencast described above.

- Module Chair to make weekly videocasts (1-1.5 minutes), filmed on an iPhone and uploaded to the VLE (with transcripts), pointing out the key things coming up in the next week of study.

Results:

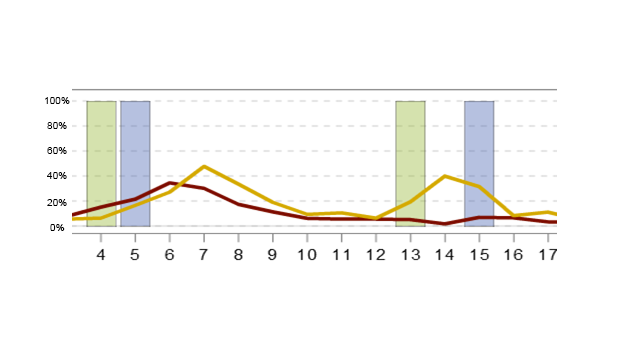

- The Python cluster forum shows an increase in student engagement from week 7 to week 22 in the second presentation of 2018 (18J) compared with the first (18B). The maths support forum also shows a slight increase in usage compared with 18B.

- Python Activity 1 shows an increase (from 35% to 48%) in the level of student engagement with the corresponding resource in the week the activity was due. It also shows students in 18J revisiting the resource when preparing for Assignment 02.

Figure 5 shows that VLE engagement has been slightly higher for 18J, although the differences may relate to the presentation pattern (J vs B).

Future/planned actions:

LDT to develop a report that shows a cohort's current progress against planned progress and links it to final student outcomes.

Assessing results:

Monitor whether there is any increase in the proportion of students progressing as planned.
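The planned report compares a cohort's current progress with planned progress and links it to outcomes. The text does not describe how the report will work, so the following is only a minimal sketch under stated assumptions: each student's progress is the furthest study week whose materials they have reached, "on plan" means no more than one week behind the planned week, and outcomes are recorded as pass/fail. `StudentProgress`, `on_plan`, `pass_rate` and `progress_report` are hypothetical names, and the data are invented.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class StudentProgress:
    """Hypothetical record: furthest study week reached and final outcome."""
    student_id: str
    furthest_week: int
    passed: Optional[bool] = None  # None while the presentation is still running

def on_plan(s: StudentProgress, planned_week: int, tolerance: int = 1) -> bool:
    """On plan = no more than `tolerance` weeks behind the planned study week."""
    return s.furthest_week >= planned_week - tolerance

def pass_rate(group: list[StudentProgress]) -> float:
    """Pass rate (%) among students with a known outcome; NaN if none is known yet."""
    known = [s for s in group if s.passed is not None]
    if not known:
        return float("nan")
    return round(100 * sum(s.passed for s in known) / len(known), 1)

def progress_report(cohort: list[StudentProgress], planned_week: int) -> dict[str, float]:
    """Share of the cohort on plan, and pass rates for on-plan vs behind students."""
    on = [s for s in cohort if on_plan(s, planned_week)]
    behind = [s for s in cohort if not on_plan(s, planned_week)]
    return {
        "pct_on_plan": round(100 * len(on) / len(cohort), 1),
        "pass_rate_on_plan": pass_rate(on),
        "pass_rate_behind": pass_rate(behind),
    }

# Hypothetical end-of-presentation data
cohort = [
    StudentProgress("s1", 12, True),
    StudentProgress("s2", 11, True),
    StudentProgress("s3", 8, False),
    StudentProgress("s4", 12, True),
    StudentProgress("s5", 6, False),
]
print(progress_report(cohort, planned_week=12))
```

Tracking `pct_on_plan` across presentations would be one way to monitor whether the proportion of students progressing as planned increases.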

Module 4 2017

Actions taken by MT:

- Concurrent study monitored. ‘At risk’ students referred to SST.

- MT has developed additional preparatory materials linked to a diagnostic quiz.

- The MT produced additional mathematics support for Blocks 4 and 5.

- Workload: signposting materials were produced, covering:

  - prerequisite knowledge/conceptual understanding required to study the block

  - key points regularly examined

  - material that is crucial to learning but not directly tested

  - material written for interest only.

- Regular assessment reminders were provided via module news

Results:

- By week 23, 45% of the 2017 student cohort were studying more than one module concurrently, and 17% were studying 120 credits. At the same point in the 2018 presentation, these indicators were 41% and 23%, respectively.

- Access to the new diagnostic quiz was provided via a link to a PDF on the module website. However, engagement with the 'Are you ready for' resource remained very low.

- Additional mathematics support for Blocks 4 and 5 was provided via an additional module-wide online tutorial just before the start of Block 4 (12 November). The tutors provided a set of questions to be completed before the session. Figure 7 shows that this online tutorial (in orange) was attended by 21 students (out of 186 registered).

- Clear peaks in workload, with excessive direct teaching, were identified. The effectiveness of the changes is expected to become visible in the next presentation.

- Results are expected to be measurable in the next presentation.

Future planned actions:

- MT and LDT to review the “Are you ready for” material for 2019, in order to increase student engagement.

- MT to consider reviewing assessment timings before and after the Christmas break, with the aim of shortening the periods between Assignment 02, the Christmas break and Assignment 03.

- The MT is actively looking for ways to trim materials to reduce workload, and tutor feedback on topics that could be removed has been sought.

Assessing results:

Measuring Assignment 03 submission rate.

Module 5 2017

Actions taken by MT:

- Using predictive analytics data, in 2018 the MT identified students who did not complete Assignment 01 or achieved a low score as being at risk. In total, 25 at-risk students were identified.

- MT wanted to investigate pass rates for students in Scotland, to understand whether the lower fee paid by Scottish students had any impact on their incentive to pass the module.

- MT has collated assessment dates for all relevant modules and presented them in a one-page grid view. Conflicts are worst for Module 5b and Module 5c.

- MT considered moving Assignment 02 to an earlier date, in light of the changes made to Module 5d and Module 5e assessment (removal of Assignment 03). However, the Module 5 Assignment 02 date proved difficult to move, because students must study a specific topic in the preceding week. If it were moved closer to Assignment 03, the desired equal spacing between the assignments would not be achieved.

- The Module 5 exam questions were reviewed by the MT, as suggested by Learning Design, and they appear fair and stable.

- MT planned to provide extra support to students who have banked assessment. In the 2018 presentation, 13 students were identified, and the MT has started to compile their progress and propensity to pass the module, but further work is required.

Results:

- All 25 students were contacted by the SST.

- The data reviewed showed this was not the case: retention for Module 5 2017 in Scotland was 76%, compared with 73% for the whole cohort. The 2018 cohort shows a similar trend.

- MT using and updating the grid to minimise clashes.

- No changes to Assignment 02 dates.

- No major changes to Exams.

- Preliminary data suggests that students who bank their assessments for summative quiz 01 and Assignment 01 are much more likely to succeed than those who only banked summative quiz 01.

Future/planned actions:

- MT to keep tracking student behaviour around assessment banking and their outcomes, and then consider if a change of rules is required for assessment banking.

- Single component assessment will be implemented for Module 5 in 2019

- MT to contact other modules in the grid to inform them about assessment clashes.

Assessing results:

- Measuring overall retention.

- Comparing the outcomes of 2017 students who did not submit Assignment 01, or achieved a low score on it, with the results of the 2018 students contacted by the SST.

Module 6 2017

Actions taken by MT:

The MTC actively monitored formative quiz scores and submission rates, as there were concerns about student engagement levels. These assessments are formative, but there is a threshold for the number of quizzes students need to complete in order to pass the module. This was a concern because Modules 6b and 6c had reported problems with students failing solely because they had not completed enough formative quizzes.

Results:

- Students were using the quizzes to recap and revise the module material. In the end, both the number of students and the number of quiz attempts were high. Although students interacted with the quizzes differently from how the MT envisaged, there was no need to change them because overall engagement was good.

- The issue with formative quizzes observed in Module 6b and Module 6c was not present in Module 6. No students failed Module 6 due to non-completion of the required number of formative quizzes.

Future/planned actions:

MT to continue actively monitoring formative quizzes. LDT to analyse the end-of-module survey results.

Assessing results:

Measuring and comparing formative quiz submission rates.

Module 7 2017

Actions taken by MT:

Module 7b and Module 7 should not be studied at the same time; however, a few students were registered to study both concurrently. LDT suggested the use of an 'Are you ready for' diagnostic quiz. Due to decreasing student satisfaction scores, LDT also suggested that the MT consider using other available data collection tools to explore students' experiences of learning on Module 7.

Results:

Since Module 7 has only one presentation left (2019), evaluation based on questionnaires will be considered for the replacement module, Module 7c.

Future/planned actions:

Use Module 7 collected data to inform Module 7c design.

Assessing results:

Measuring pass, completion and satisfaction rates for Module 7c and comparing with historical figures for Module 7

CONCLUSIONS

Revisiting the research questions, this paper found favourable results for both research questions 1 and 2. For research question 3, the results are more variable, as MTs have not always taken action; however, there is evidence of impact where actions have been taken, and one example of a lack of impact where a team took a different action from the one recommended to them.

The responses to the training questionnaire show a clearly positive view of the training, with 91.5% of staff saying that they were satisfied with it. This is also reflected in positive comments indicating that staff appreciated being able to experiment and explore, as well as the hands-on, practical exercises.

Satisfaction levels with the DSMs are also strong, with 87% of staff expressing satisfaction with the meetings. The analysis of the qualitative feedback has also given the LDT insight into which aspects of the meetings are working and which need improvement. One theme that stands out is the limited time available in the meeting, together with the fact that the MT did not always come out of the meeting with a clear plan. This is being taken forward in the recommendations for ongoing work: allowing sufficient time for the meetings and including space to outline the recommended next steps. On the positive side, there is clear recognition of the facilitator's knowledge, with 10 responses noting that the facilitator was knowledgeable and willing to dig deep into the data to support the team with their analysis.

Research question 3, which assesses the impact of the actions taken as a result of the process, reveals a mixed picture.

On Modules 1 and 3 there was an impact on retention (Module 1) and student engagement (Module 3). These were positive impacts that we can trace back to the interventions made by the module teams as a result of acting on learning analytics findings. On Module 2, there was no discernible impact. However, the module team took this action after deciding that the approach suggested in the data support meeting was too complex. In itself, this was a useful finding for the OU, as it provides evidence that such an action does not lead to any impact. This can now be taken forward as part of an internal evidence bank for similar future scenarios.

Modules 4, 5 and 6 are useful examples of an emerging conversation led by review of the learning analytics. We can see them as examples of teams who are not quite ready to take action, either because they do not yet fully understand the issues and need the analytics to provide more data, or because the analytics point to a different reason for the problems being encountered. Again, these are useful examples, as the learning analytics have been able to confirm or refute hypotheses and enable the team to focus on the problem.

Module 7 is a different case study and shows the importance of targeting the A4A resource at the right modules. In this case, a recommendation was made to the module team; however, they deferred it to the upcoming replacement module. In similar cases, teams toward the end of the module lifecycle have engaged positively with data capture and used the analysis to inform the new module. There is a task here to refine the process for selecting modules for A4A, to ensure that teams buy in to the need to respond positively to proposed actions.

Module teams that engage more fully with the A4A process are likely to get more insight and results from it and could become champions at faculty or Board of Studies level. Academic staff involvement is essential for the success of A4A, as they are responsible for ensuring that agreed interventions are actioned.

Beyond the research questions, and looking at impact within the OU, other University-wide organisational processes related to quality control have adopted elements of the A4A methodology in their approach, such as the regular monitoring of data, the provision of basic data training and the use of a menu of available actions.

The information regarding the actions taken was collected by contacting the corresponding MT and asking them to report on their modules. This may not be scalable to all modules. A more systematic process for the follow up and evaluation of the actions taken is required to assess the overall impact of A4A. The details of an enhanced process should be agreed between the LDT and the faculties. This would involve the provision of further resources both from the faculties and the LDT.

Further research is required to obtain a better understanding of the benefits derived from the process. In-depth interviews with key participants and independent evaluation are recommended.

Annex 1 provides an outline of the conclusions and recommendations fed back into the University from the internal version of the report. Annexes 2 and 3 show the questions included in the surveys to assess training and DSMs.

REFERENCES

Dawson, S., Jovanovic, J., Gašević, D., & Pardo, A. (2017). From prediction to impact: Evaluation of a learning analytics retention program. In Proceedings of the Seventh International Learning Analytics & Knowledge Conference (pp. 474-478). ACM. doi: 10.1145/3027385.3027405.

Dawson, S., Poquet, O., Colvin, C., Rogers, T., Pardo, A., & Gašević, D. (2018). Rethinking learning analytics adoption through complexity leadership theory. In ACM International Conference Proceeding Series (pp. 236-244). Association for Computing Machinery. doi: 10.1145/3170358.3170375.

Drachsler, H., Stoyanov, S., & Specht, M. (2014). The impact of learning analytics on the Dutch education system. In Proceedings of the Fourth International Conference on Learning Analytics and Knowledge (pp. 158-162). ACM. doi: 10.1145/2567574.2567617.

Ferguson, R., Macfadyen, L. P., Clow, D., Tynan, B., Alexander, S., & Dawson, S. (2015). Setting learning analytics in context: Overcoming the barriers to large-scale adoption. Journal of Learning Analytics, 1(3), 120-144.

Fritz, J. (2011). Classroom walls that talk: Using online course activity data of successful students to raise self-awareness of underperforming peers. The Internet and Higher Education, 14(2), 89-97.

Garrison, D., Anderson, T., & Archer, W. (2000). Critical inquiry in a text-based environment: Computer conferencing in higher education. The Internet and Higher Education, 2(2), 87-105. http://dx.doi.org/10.1016/S1096-7516(00)00016-6

Garrison, D., & Arbaugh, J. B. (2007). Researching the community of inquiry framework: Review, issues, and future directions. The Internet and Higher Education, 10(3), 157-172. http://dx.doi.org/10.1016/j.iheduc.2007.04.001

Kim, J., Jo, I. H., & Park, Y. (2016). Effects of learning analytics dashboard: Analyzing the relations among dashboard utilization, satisfaction, and learning achievement. Asia Pacific Education Review, 17, 13-24.

Kostagiolas, P., Lavranos, C., & Korfiatis, N. (2019). Learning analytics: Survey data for measuring the impact of study satisfaction on students' academic self-efficacy and performance. Data in Brief, 25. doi: 10.1016/j.dib.2019.104051.

Larrabee Sønderlund, A., Hughes, E., & Smith, E. (2019). The efficacy of learning analytics interventions in higher education: A systematic review. British Journal of Educational Technology, 50(5), 2594-2618. doi: 10.1111/bjet.12720.

Lu, O. H. T., Huang, J. C. H., Huang, A. Y. Q., & Yang, S. J. H. (2017). Applying learning analytics for improving students’ engagement and learning outcomes in an MOOCs enabled collaborative programming course. Interactive Learning Environments, 25(2), 220-234.

Rienties, B., Boroowa, A., Cross, S., Farrington-Flint, L., Herodotou, C., Prescott, L., Mayles, K., Olney, T., Toetenel, L., & Woodthorpe, J. (2016). Reviewing three case-studies of learning analytics interventions at the Open University UK. In Proceedings of the Sixth International Conference on Learning Analytics & Knowledge (pp. 534-535). ACM. doi: 10.1145/2883851.2883886.

Rienties, B., & Toetenel, L. (2016). The impact of learning design on student behaviour, satisfaction and performance: A cross-institutional comparison across 151 modules. Computers in Human Behavior, 60, 333-341.

Author notes

E-mail: r.hidalgo@open.ac.uk

E-mail: gerald.evans@open.ac.uk